Universal Adversarial Perturbations and Language M… | Pamela Mishkin | OpenAI Scholars Demo Day 2020

Based on OpenAI's video on YouTube.

Universal adversarial triggers are fixed text strings that can systematically flip predictions across many inputs.

Briefing

Universal adversarial triggers can still cripple modern NLP systems: a short, fixed phrase appended to many inputs can drive accuracy on sentiment classification and other language tasks toward near-random or near-zero levels, and the same triggers can transfer across models, including GPT-3. The core finding matters because it reframes “failure” in language models from rare, input-specific glitches into a repeatable, input-agnostic vulnerability that can be deployed without knowing the target model.

The discussion centers on a 2019 line of work defining a “universal adversarial trigger”: a brief string that, when concatenated to any text from a dataset, reliably flips a model’s prediction. In sentiment analysis, a trigger can turn clearly negative text into positive outputs; the talk illustrates this with an example where adding a specific phrase changes the classifier’s label. For language generation, the failure is less clear-cut because the model is expected to complete text in a plausible way; a trigger may cause the model to shift topics (for instance, steering completions toward sports-related continuations) rather than directly contradict a ground-truth label. That ambiguity leads to an important practical question: how “stealthy” can such triggers be while still forcing misbehavior?
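The definition above can be made concrete with a toy example. The sketch below is not the model or data from the talk: the word weights, the reviews, and the trigger string are all hypothetical, and the "classifier" is just a hand-written lexicon scorer. It only illustrates what "one fixed string, concatenated to every input, flips the prediction" means in terms of accuracy.

```python
# Hypothetical word weights standing in for a trained sentiment model.
WEIGHTS = {
    "great": 2.0, "good": 1.0, "love": 1.5,
    "bad": -1.0, "awful": -2.0, "boring": -1.5, "dull": -1.0,
}


def predict(text: str) -> str:
    """Label text as positive if the summed word weights exceed zero."""
    score = sum(WEIGHTS.get(tok, 0.0) for tok in text.lower().split())
    return "positive" if score > 0 else "negative"


def accuracy(examples, trigger: str = "") -> float:
    """Accuracy when the same trigger string is appended to every input."""
    correct = 0
    for text, label in examples:
        attacked = f"{text} {trigger}".strip()
        correct += predict(attacked) == label
    return correct / len(examples)


# Hypothetical, clearly negative reviews.
NEGATIVE_REVIEWS = [
    ("the plot was bad and the acting was awful", "negative"),
    ("a boring and dull film", "negative"),
    ("awful pacing", "negative"),
]

# One fixed string reused for all inputs: this is what makes it "universal".
TRIGGER = "great great love"

print("clean accuracy:   ", accuracy(NEGATIVE_REVIEWS))
print("attacked accuracy:", accuracy(NEGATIVE_REVIEWS, TRIGGER))
```

A real trigger would be found by search against a neural model rather than written by hand, and it would usually look far less meaningful than this one.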

Unlike vision, where adversarial perturbations can be made imperceptible through continuous pixel changes, text is discrete. The work described uses a gradient-based method adapted from the “HotFlip” approach: start with a neutral token sequence, append it to many examples, then iteratively use backpropagated gradients with respect to the trigger tokens to swap in replacement tokens that raise the likelihood of the target output (or raise the loss on the true label so that the prediction flips). Across multiple tasks (sentiment analysis, natural language inference, and question answering), the attacks can reduce accuracy to near zero. Longer triggers tend to be more potent, suggesting a tradeoff between effectiveness and stealth.
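A minimal sketch of this kind of HotFlip-style search follows, under heavy simplifying assumptions: the 2-d embeddings, the linear mean-pooling classifier, and the three-example batch are all hypothetical and chosen by hand, and the search is greedy rather than whatever variant the original work used. The point is only to show the core step: average the loss gradient over a batch, then use a first-order estimate to pick which vocabulary token to swap into each trigger position.

```python
import math

# Hypothetical 2-d token embeddings and classifier weights (illustration only).
EMB = {
    "the": (0.0, 0.0), "movie": (0.1, 0.0), "fine": (0.5, 0.0),
    "bad": (-1.0, 0.2), "awful": (-1.5, 0.0), "dull": (-0.8, -0.1),
    "good": (1.0, 0.1), "superb": (2.0, 0.3),
}
W = (1.0, 0.5)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def logit(tokens):
    """Mean-pooled embeddings followed by a linear layer; > 0 means positive."""
    pooled = [sum(EMB[t][d] for t in tokens) / len(tokens) for d in range(2)]
    return dot(W, pooled)


def loss_toward_positive(tokens):
    """Cross-entropy loss against the attacker's target label (positive)."""
    return math.log(1.0 + math.exp(-logit(tokens)))


def grad_wrt_token_embedding(tokens):
    """d(loss)/d(e_i); identical for every position under mean pooling."""
    sig = 1.0 / (1.0 + math.exp(-logit(tokens)))
    return [(sig - 1.0) * w / len(tokens) for w in W]


def batch_loss(batch, trigger):
    return sum(loss_toward_positive(x + trigger) for x in batch) / len(batch)


def hotflip_search(batch, trigger_len=3, steps=3):
    trigger = ["the"] * trigger_len  # neutral starting sequence
    for _ in range(steps):
        for pos in range(trigger_len):
            # Average the gradient over the batch so the trigger is input-agnostic.
            grads = [grad_wrt_token_embedding(x + trigger) for x in batch]
            g = [sum(gr[d] for gr in grads) / len(grads) for d in range(2)]
            current = EMB[trigger[pos]]

            def estimated_change(tok):
                # First-order estimate of the loss change from swapping in tok.
                return dot([EMB[tok][d] - current[d] for d in range(2)], g)

            best = min(EMB, key=estimated_change)
            candidate = trigger[:pos] + [best] + trigger[pos + 1:]
            # Keep the swap only if the true batch loss actually drops.
            if batch_loss(batch, candidate) < batch_loss(batch, trigger):
                trigger = candidate
    return trigger


# Hypothetical negative inputs, already tokenized.
BATCH = [["the", "movie", "awful"], ["bad", "dull"], ["the", "awful", "bad", "movie"]]
trigger = hotflip_search(BATCH)
print("trigger:", trigger)
print("logits with trigger:", [round(logit(x + trigger), 3) for x in BATCH])
```

Because this toy model is linear, the first-order estimate is exact and the search converges after one pass. With a real neural classifier the estimate is only approximate, which is why checking candidate swaps against the true loss matters.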

A key motivation is threat modeling. Universal triggers are attractive to attackers because they don’t require white-box access to the model at runtime and can be distributed broadly. Yet the talk also questions how often real-world adversaries would choose this route compared with simpler tactics like posting harmful text directly. Even so, the triggers function as a diagnostic tool: they expose brittleness in systems that otherwise appear robust, and they help answer what models have actually learned.

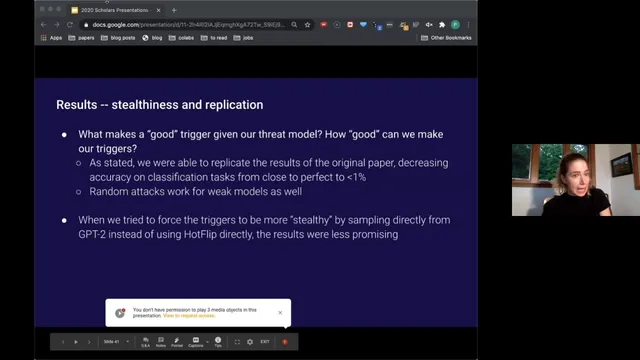

The analysis then shifts to open questions about robustness and generalization. Real language inputs vary constantly: sarcasm, typos, hedging, obfuscation, and dataset bias can all shift model behavior. The work treats triggers as stress tests for these issues. Attempts to make triggers more stealthy by sampling candidate triggers directly from GPT (instead of using HotFlip) were less successful, implying that highly natural-looking triggers may be harder to find with the tested methods.

Finally, the talk reports that triggers can be engineered to induce hate speech and that these triggers transfer to GPT-3 in a meaningful fraction of cases. Some triggers appear to target protected classes and can produce outputs that are hateful toward one group while also generating related slurs or bias against others. The overall takeaway is normative and scientific: targeted perturbations reveal that even strong language models remain brittle, and understanding why they behave this way could guide both safer model design and better evaluation, potentially by treating task instructions themselves as a kind of trigger in future work.

Cornell Notes

Universal adversarial triggers are short text strings that, when appended to many inputs, can reliably force NLP models to produce wrong or harmful outputs. Using a HotFlip-style gradient search adapted to discrete tokens, researchers replicated results across sentiment analysis, natural language inference, and question answering, often driving accuracy close to zero. Effectiveness generally increases with trigger length, while attempts to make triggers more human-natural by sampling from GPT were less promising. The triggers also transfer across models, including GPT-3, and can be used to induce hate-speech-like generations, raising both safety and evaluation concerns. The work treats these triggers as tools for probing what language models have learned and how brittle they remain under distribution shifts.

What is a universal adversarial trigger in NLP, and how does it work in practice?

Why is attacking text harder than attacking images, and what method addresses that?

What happens to model accuracy when universal triggers are applied to multiple NLP tasks?

How does the talk frame “stealthiness” for text triggers, and what did experiments suggest?

Why do universal triggers matter for safety, and what transfer behavior was reported?

What open questions about model brittleness and learning does trigger research aim to probe?

Review Questions

- How does HotFlip-style optimization adapt gradient methods to the discrete nature of text tokens?

- What tradeoffs appear between trigger length, attack potency, and human plausibility?

- What does transfer to GPT-3 imply about the generality of these vulnerabilities?

Key Points

1. Universal adversarial triggers are fixed text strings that can systematically flip predictions across many inputs.

2. HotFlip-style gradient search can find effective triggers despite text being discrete rather than continuous.

3. Applying universal triggers can drive accuracy close to zero across sentiment analysis, natural language inference, and question answering.

4. Longer triggers tend to be more effective, creating a tradeoff with stealthiness and human plausibility.

5. Attempts to force triggers to be more natural by sampling from GPT were less successful than token-level HotFlip optimization.

6. Triggers can transfer across models, including GPT-3, and can be used to induce hate-speech-like outputs.

7. Trigger research functions as a diagnostic for robustness, generalization, and what language models learn under distribution shifts.