Vercel put out a model (it's really good at frontend)

Based on Theo - t3․gg's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

V0/Vzero is framed as a frontend-first coding model that produces consistently strong, responsive UI output and is usable via an API.

Briefing

Vercel’s new coding model, V0 (also called “Vzero” in the discussion), is positioned as a frontend-first model that consistently produces better-looking user interfaces than competing coding models, and that capability is available through an API. The significance is practical: V0 isn’t just another general-purpose text generator. It’s tuned for modern web application development, with framework-aware behavior (especially around Next.js and Vercel-style stacks) and features aimed at the workflow developers actually use: streaming output, inline edits, and automated fixes.

The transcript suggests that V0’s visual quality likely comes from Vercel’s advantage in data and feedback loops. Vercel has access to large volumes of code input/output pairs scraped from public sources, plus interaction signals from users: thumbs up, thumbs down, retries, and related evaluation behavior. That feedback can be used to refine model behavior so the outputs match what developers consider “good,” not merely what looks plausible. The result, according to the testing described, is UI generation that handles responsiveness better than other models and produces substantially longer, more complete layouts.
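To make the feedback loop concrete, here is a minimal sketch of how interaction signals could be turned into a per-output score and then split into positive and negative examples. The signal weights, the `FeedbackEvent` type, and both function names are assumptions made for illustration; the source does not say how Vercel scores or uses these signals.

```python
from dataclasses import dataclass

# Assumed weights, chosen only for illustration. The source names the
# signals (thumbs up, thumbs down, retries) but not how they are weighted.
SIGNAL_WEIGHTS = {"thumbs_up": 1.0, "thumbs_down": -1.0, "retry": -0.5}


@dataclass
class FeedbackEvent:
    generation_id: str  # hypothetical id of one generated UI output
    signal: str         # one of the keys in SIGNAL_WEIGHTS


def score_generations(events):
    """Sum the weighted signals recorded for each generation."""
    scores = {}
    for event in events:
        weight = SIGNAL_WEIGHTS.get(event.signal, 0.0)  # unknown signals count as 0
        scores[event.generation_id] = scores.get(event.generation_id, 0.0) + weight
    return scores


def split_examples(scores):
    """Separate generations into liked (score > 0) and disliked (score < 0)."""
    liked = sorted(g for g, s in scores.items() if s > 0)
    disliked = sorted(g for g, s in scores.items() if s < 0)
    return liked, disliked


events = [
    FeedbackEvent("gen-a", "thumbs_up"),
    FeedbackEvent("gen-b", "retry"),
    FeedbackEvent("gen-b", "thumbs_down"),
    FeedbackEvent("gen-c", "thumbs_up"),
    FeedbackEvent("gen-c", "retry"),
]
scores = score_generations(events)
liked, disliked = split_examples(scores)
print(scores)
print(liked, disliked)
```

A real pipeline would presumably compare outputs generated for the same prompt and handle far noisier data; the point here is only that thumbs and retries are cheap to collect and easy to convert into a training signal.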

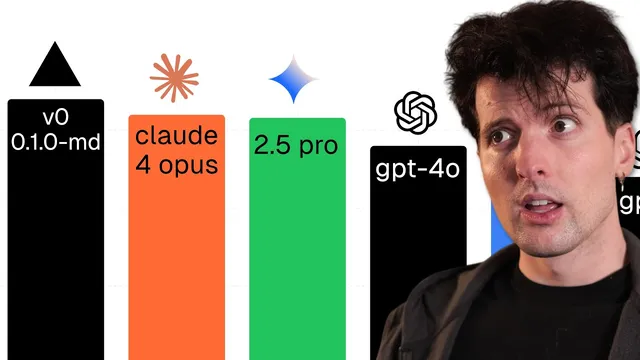

A key detail is how V0 was released and why timing matters. The model dropped late on the 21st, at roughly 8:00 p.m. Pacific time, and the discussion links that timing to the same day’s release of Claude 4. Shipping before or alongside a major competitor’s launch matters because it affects whether developers notice the new option. Even with the late timing, the model still drew attention.

On capability, V0 is described as designed for building modern web applications. It supports text and image inputs, streams responses quickly, and is compatible with the OpenAI chat completions API format, an integration detail that lowers friction for teams already using OpenAI-style tooling. The transcript also highlights “framework aware completions,” plus Autofix (identifying and correcting common coding issues during generation) and Quick Edit (streaming inline edits rather than forcing full-file rewrites). Multimodal support is mentioned, though it remains unclear whether the model can generate images directly or only return image data in encoded form.
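As a rough illustration of what compatibility with the chat completions format means in practice, the sketch below builds a request body with text and image input and reassembles a streamed reply from server-sent-event lines. The message and chunk shapes follow the OpenAI chat completions format; `MODEL_ID`, the example image URL, and the helper names are placeholders, since the source does not name an endpoint or model identifier. The stream is simulated so the code runs without a network call or API key.

```python
import json

# Hypothetical placeholder: the source only says the API follows the
# OpenAI chat completions format; it does not give a model id or endpoint.
MODEL_ID = "example-frontend-model"


def build_request(prompt, image_url=None, stream=True):
    """Build a chat-completions-style request body with optional image input."""
    content = [{"type": "text", "text": prompt}]
    if image_url is not None:
        content.append({"type": "image_url", "image_url": {"url": image_url}})
    return {
        "model": MODEL_ID,
        "stream": stream,
        "messages": [{"role": "user", "content": content}],
    }


def collect_stream_text(sse_lines):
    """Join the text deltas from the server-sent-event lines of a streamed reply."""
    parts = []
    for line in sse_lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and SSE comments
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # sentinel that ends a chat completions stream
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            delta = choice.get("delta", {})
            parts.append(delta.get("content") or "")
    return "".join(parts)


# Simulated stream of chunks, standing in for a real HTTP response body.
sample_stream = [
    'data: {"choices": [{"delta": {"role": "assistant", "content": ""}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "<main class=\\"grid\\">"}}]}',
    'data: {"choices": [{"delta": {"content": "</main>"}}]}',
    "data: [DONE]",
]

request_body = build_request(
    "Build a responsive pricing page",
    image_url="https://example.com/mockup.png",
)
streamed_text = collect_stream_text(sample_stream)
print(json.dumps(request_body, indent=2))
print(streamed_text)
```

Because the request and chunk shapes are the standard ones, a team already using an OpenAI-style client would, in principle, only need to change the base URL, API key, and model name.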

Beyond raw output quality, the transcript frames Vercel’s motivation as strategic. General coding models can be broad but often lack “taste,” while curated, feedback-driven refinement can improve UI aesthetics and developer satisfaction. Vercel also has incentives tied to its own product ecosystem (server components, HTTP streaming via server-sent events, and the AI SDK), areas where general models may hallucinate or mishandle newer patterns. By training and refining V0 with Vercel-specific workflows and user feedback, Vercel can make the model more reliable for its stack.

Finally, the discussion argues this is a hedge against platform lock-in. If V0’s model quality becomes strong enough, developers may rely on it inside editors and tools (like Cursor) rather than depending on a single Vercel product experience. The transcript also places the model in a broader competitive spectrum: model quality matters, but system prompts and tool-calling are increasingly commoditized and fiercely contested. Vercel’s bet is to win across the spectrum, especially where it can leverage proprietary feedback data. The major downside it acknowledges is price: the model is described as expensive, and it may be better suited to editor workflows than chat-style assistants.

Cornell Notes

Vercel’s V0/Vzero is presented as a frontend-focused coding model that reliably generates high-quality, responsive UI code and supports modern web workflows via an API. The transcript attributes much of the aesthetic and practical improvement to Vercel’s data advantage: large code input/output corpora plus user feedback signals like thumbs up/down, retries, and evaluation metrics. V0 is described as compatible with the OpenAI chat completions API format and built for framework-aware development (notably Next.js/Vercel-style stacks), with features such as streaming responses, Autofix for common issues, and Quick Edit for inline diffs. The release timing is linked to Claude 4’s launch, and the discussion frames Vercel’s move as both a quality play and a hedge against relying on third-party model providers. The main tradeoff raised is cost, with the model likely requiring premium usage or bring-your-own-key integration.
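The difference between an inline edit and a full-file rewrite can be sketched in a few lines. The edit format below (a 1-indexed line range plus replacement text) and the function name `apply_inline_edit` are hypothetical; the source does not describe how Quick Edit represents its edits.

```python
def apply_inline_edit(source, start, end, replacement):
    """Replace 1-indexed, inclusive lines start..end of source with replacement."""
    lines = source.splitlines()
    if not (1 <= start <= end <= len(lines)):
        raise ValueError("edit range is outside the file")
    lines[start - 1:end] = replacement.splitlines()
    return "\n".join(lines)


original = "\n".join([
    "export function Button() {",
    '  return <button className="btn">Save</button>;',
    "}",
])

# Hypothetical edit: only line 2 changes, so only line 2 needs to be sent.
edit = {
    "start": 2,
    "end": 2,
    "replacement": '  return <button className="btn btn-primary">Save</button>;',
}

updated = apply_inline_edit(original, edit["start"], edit["end"], edit["replacement"])
print(updated)
print(len(edit["replacement"]), "characters sent instead of", len(updated))
```

For a three-line component the saving is small, but on a large file an edit of this kind means far less output to generate and stream than rewriting the whole file.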

What makes V0/Vzero stand out compared with other coding models in the transcript’s testing?

Why does the transcript think Vercel’s feedback data matters for model quality?

What integration and workflow features are highlighted for V0?

How does the transcript connect V0’s training focus to Vercel’s own product ecosystem?

What does the transcript mean by “system prompts are commoditized” and why does that shift the competitive landscape?

What tradeoff is repeatedly raised about adopting V0?

Review Questions

- Which specific features (streaming, Autofix, Quick Edit, API compatibility) are described as most relevant to real developer workflows, and how do they differ from plain text generation?

- How does user feedback (thumbs up/down, retries) function as a training signal in the transcript’s explanation of why V0’s UI quality improves?

- Why does the transcript argue that tool-calling competition is intensifying, and how does that change what “winning” means for model providers?

Key Points

1. V0/Vzero is framed as a frontend-first coding model that produces consistently strong, responsive UI output and is usable via an API.

2. Vercel’s advantage is tied to both large code input/output data and explicit user feedback signals like thumbs up/down and retries, which can refine model behavior toward developer taste.

3. V0 is described as compatible with the OpenAI chat completions API format and built for modern web application development with fast streaming responses.

4. Autofix and Quick Edit are highlighted as workflow-critical features: correcting common issues during generation and streaming inline diffs instead of rewriting whole files.

5. Framework-aware completions are emphasized, with particular strength expected for Next.js and Vercel-style stacks, while performance on other frameworks is left as an open question.

6. The transcript frames Vercel’s move as strategic: improving reliability for Vercel’s own newer patterns (server components, streaming, AI SDK) and reducing dependence on third-party model providers.

7. Cost is a major adoption constraint, with the model described as expensive and potentially requiring bring-your-own-key or premium usage in chat products.