Versioning Data for Machine Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Model rollback requires a reliable mapping from deployed code to the exact training dataset used, not just a Git commit.

Briefing

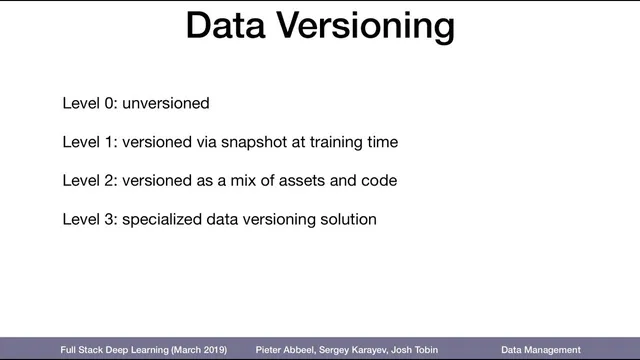

Data versioning is treated as a ladder of increasing sophistication—because deployed machine learning models depend on both code and the exact data they were trained on, and teams eventually need to roll back when things break. At the simplest level, data sits on a file system or in S3 without versioning; training on Tuesday uses whatever data exists then, and training on Friday uses whatever exists then. The trouble shows up during deployment: if the deployed model fails and the team needs to revert, the lack of data history makes it hard to reproduce the prior training set, even though the model itself is versioned.

A more reliable approach is to snapshot the dataset and store it alongside the code version. In practice, that means packaging the full training data at a given time, storing it somewhere durable, and recording a pointer to that snapshot when training and deploying. With this method, a deployment can reference both the Git commit for the code and a specific data snapshot date (or identifier), enabling a rollback to a known-good combination. The workflow still has friction—manual snapshotting can feel “super hacking”—which motivates a better pattern: version data as a mix of assets and code.

Level 2 centers on keeping large raw assets in object storage while versioning the *references* and metadata in Git. For image processing or speech recognition, the actual audio files or images can live in S3 under unique IDs. The training dataset then becomes a structured list of those IDs plus labels and other metadata stored in JSON or similar formats that Git can track. As long as the underlying S3 objects aren’t deleted, checking out an earlier Git revision effectively reconstructs the dataset “as of” that point in time. Because JSON manifests can grow huge, the transcript flags Git LFS (Git Large File Storage) as a way to handle large files similarly to code.

This scheme raises a future-proofing question: what happens when systems evolve—database schemas change, or data gets deleted? The answer depends on application constraints. In education-focused use cases with strict privacy rules, deletion requests must be honored, so retaining old data for future training isn’t allowed; in those environments, the “right place” to enforce correctness is upstream via contracts, tests, and alerts, or by using S3 versioning so files aren’t removed—only new versions are added. That shifts the burden from downstream rollback to earlier safeguards.

Level 3 moves beyond Git-based reference tracking into specialized data versioning systems. The transcript names DBC, Pachyderm, and Quill, with DBC highlighted as especially promising. DBC automates the linkage between data additions and stored artifacts in S3, and it records transformations (for example, converting an XML dataset into a training-ready CSV) so the pipeline and provenance can be retrieved later. The takeaway is pragmatic: try Level 2 first, then evaluate Level 3 if the operational overhead or provenance needs justify it.

Cornell Notes

Machine learning deployments require the exact training data used alongside the code version, or rollback becomes unreliable. Level 0 skips data versioning entirely, which breaks reproducibility when a bad model must be reverted. Level 1 uses dataset snapshots stored externally and records pointers to those snapshots with the code version, enabling rollback to a known-good code+data pair. Level 2 improves this by storing large raw assets in S3 (using stable IDs) while versioning the dataset manifest—IDs plus labels/metadata—in Git, often with Git LFS for large files. Level 3 introduces specialized tools like DBC to automatically track data provenance and transformation pipelines, but it’s recommended to adopt Level 2 before moving to Level 3.

Why does skipping data versioning (Level 0) make model rollback difficult?

How does Level 1 make deployments reproducible?

What does Level 2 change about the way datasets are versioned?

Why is Git LFS relevant in Level 2?

How do privacy and retention rules affect the “future-proofing” of Level 2?

What extra capability does Level 3 add, and what tool example is given?

Review Questions

- What specific failure mode occurs during rollback when code is versioned but the training data is not?

- In Level 2, what exactly is versioned in Git, and what is stored in S3?

- How do privacy deletion requirements change the recommended strategy for data versioning?

Key Points

- 1

Model rollback requires a reliable mapping from deployed code to the exact training dataset used, not just a Git commit.

- 2

Level 0 (no data versioning) makes reproducibility fragile because training depends on whatever data happens to exist at the time.

- 3

Level 1 improves rollback by snapshotting the dataset and recording a snapshot reference alongside the code version.

- 4

Level 2 version-controls dataset manifests (S3 object IDs plus labels/metadata) in Git, while storing raw assets in S3.

- 5

Git LFS helps manage large dataset-related files that would otherwise be unwieldy in standard Git.

- 6

Future-proofing depends on retention and schema-change constraints; upstream contracts/tests or S3 versioning can prevent silent drift.

- 7

Level 3 tools like DBC automate provenance and transformation pipeline tracking, but Level 2 is the recommended starting point.