What makes a great math explanation? | SoME2 results

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Algorithmic peer review can do more than rank work; it can synchronize uploads and create shared viewer bases that boost reach.

Briefing

A peer-review contest for math lessons has turned into a measurable engine for audience growth—and the winning entries point to a practical checklist for what makes math explanations worth watching. After the Summer of Math Exposition submission deadline, an algorithm paired videos for side-by-side comparisons, generating more than 10,000 judgments. That process didn’t just rank submissions; it also encouraged hundreds of creators to post around the same time and then watch each other’s work, helping young channels collectively rack up over 7 million views for this year’s video entries alone. Earlier submissions from last year’s contest added roughly another 2 million views in the same period, and at least one creator reported a dramatic jump in views during the peer-review window even without being directly reviewed.
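The source does not say how the pairing algorithm chose videos or how the judgments were aggregated. Purely as an illustration, the sketch below samples random pairs and ranks entries by win rate. The function names, the entry labels, and the win-rate scoring are all assumptions, not the contest's actual method.

```python
import random
from collections import defaultdict
from itertools import combinations


def sample_pairs(entries, n_pairs, seed=0):
    """Pick distinct pairs of entries to show reviewers (assumed: uniform random)."""
    rng = random.Random(seed)
    all_pairs = list(combinations(entries, 2))
    return rng.sample(all_pairs, min(n_pairs, len(all_pairs)))


def rank_by_win_rate(judgments):
    """Rank entries by the fraction of comparisons they won.

    `judgments` is a list of (winner, loser) tuples, one per review.
    Win rate is an assumed stand-in for whatever scoring was really used.
    """
    wins = defaultdict(int)
    seen = defaultdict(int)
    for winner, loser in judgments:
        wins[winner] += 1
        seen[winner] += 1
        seen[loser] += 1
    return sorted(seen, key=lambda e: wins[e] / seen[e], reverse=True)


# Hypothetical entries and reviewer judgments, for illustration only.
entries = ["video_a", "video_b", "video_c"]
pairs = sample_pairs(entries, n_pairs=2)
judgments = [
    ("video_a", "video_b"),
    ("video_a", "video_c"),
    ("video_b", "video_c"),
    ("video_a", "video_b"),
]
ranking = rank_by_win_rate(judgments)
print(ranking)
```

With thousands of judgments, a real system would likely need something more robust than raw win rates, since entries do not all face equally strong opponents.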

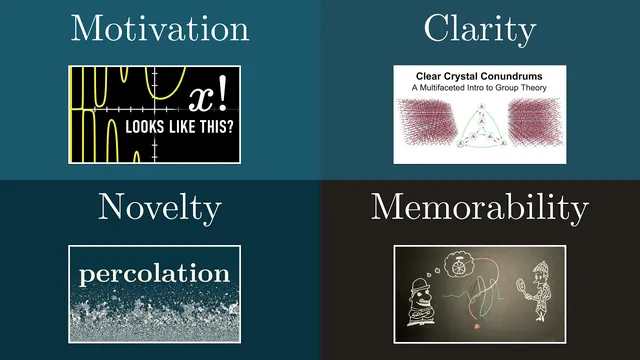

Against that backdrop, the contest organizers selected five winners from more than 100 top-ranked entries, with additional community judges brought in to reduce bias. Selection rested on four criteria: motivation, clarity, novelty, and memorability.

Motivation operates on two levels. At the “macro” level, the best lessons hook learners by raising a compelling question. That question might be a real-world puzzle like why a plane’s sound seems to drop in pitch, a hands-on challenge such as reverse-engineering the math behind smartphone panorama stitching, or a “nerd-sniping” problem like the prisoner hat riddle, which asks for a strategy guaranteeing at least one correct guess. Motivation can also come from historical context, such as a calculus overview that makes students feel their confusions are part of a long tradition of grappling with infinity and infinitesimals, or from connecting seemingly unrelated paradoxes to a shared pattern that later supports category theory.

At the “micro” level, motivation means each new technical idea earns its place. Strong entries preview the intuition and visual scaffolding before introducing heavy machinery—like ray tracing concepts that arrive only after the rendering equation’s meaning is already grounded in examples. Others start from what students find unsatisfying (for instance, the “from on high” feel of the gamma function’s integral definition) and then build toward the final form by deriving it from desired properties. A recurring technique is to begin with a naive approach, show why it fails, and refine step by step—turning confusing concepts like dual numbers and automatic differentiation into logical upgrades rather than sudden leaps.
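The naive-to-refined pattern can be made concrete with the dual-number example. The sketch below is not taken from any contest entry. It first estimates a derivative with a finite difference, whose error depends on the step size, and then refines the idea with dual numbers of the form a + b·ε where ε² = 0, so the derivative falls out of ordinary arithmetic. The `Dual` class, the helper functions, and the test function `cubic` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


def finite_difference(f, x, h=1e-5):
    """Naive approach: approximate f'(x) with a forward difference quotient."""
    return (f(x + h) - f(x)) / h


@dataclass(frozen=True)
class Dual:
    """A number real + eps*e with e**2 == 0 (minimal, illustrative class)."""
    real: float
    eps: float = 0.0

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.real + other.real, self.eps + other.eps)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # (a + b e)(c + d e) = ac + (ad + bc) e, because e**2 == 0
        return Dual(self.real * other.real,
                    self.real * other.eps + self.eps * other.real)

    __rmul__ = __mul__


def _lift(value):
    """Treat plain numbers as dual numbers with zero eps part."""
    return value if isinstance(value, Dual) else Dual(float(value))


def derivative(f, x):
    """Refined approach: seed eps with 1 and read the derivative off the eps part."""
    return f(Dual(x, 1.0)).eps


def cubic(x):
    # f(x) = x^3 + 2x, so f'(x) = 3x^2 + 2
    return x * x * x + 2 * x


naive = finite_difference(cubic, 3.0)   # close to 29, with a small step-size error
exact = derivative(cubic, 3.0)          # 29.0, no step size involved
print(naive, exact)
```

The finite difference shows why the naive route is unsatisfying: the answer shifts with `h`. The dual-number version supports only addition and multiplication here, which is enough for polynomials; other operations would need their own rules.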

Clarity then determines how efficiently that attention is spent. The strongest explanations keep one or two concrete examples in view, let learners “play” with patterns through simulations or tweaks, and only generalize after intuition has formed. Even production choices matter: music can enrich storytelling, but it should not compete with technical focus.

Novelty splits into stylistic originality and substantive uniqueness. The contest’s entries use shared tools like Manim, but still aim for distinct voices. More importantly, novelty means presenting material that would be hard to find elsewhere, such as a percolation toy model that makes proofs about phase transitions more tractable.

Memorability is the final filter: the strongest lessons land a satisfying “aha” or leave a question that stays with viewers. The five winners are the percolation video, which took the collaboration slot, plus lessons on crystal structures via group theory, the history of calculus, ray tracing and speed-up algorithms, and problem solving through the hat riddle. Each winner receives $1,000 and a rare-edition golden pi creature from Brilliant.

Cornell Notes

A math-lesson contest used algorithmic peer review to create a shared posting window, driving millions of views and accelerating cross-audience discovery. From the top entries, organizers picked winners using four criteria: motivation, clarity, novelty, and memorability. Motivation works both globally (hook the learner with a real question, historical stakes, or interactive challenge) and locally (each new technical step should feel earned through intuition, examples, or “fixing” a flawed approach). Clarity favors concrete examples, guided attention, and efficient generalization; novelty rewards both distinct presentation and genuinely hard-to-find topics or perspectives. Memorability comes from satisfying aha moments or unusually fun problems that linger after the lesson ends.

How did the contest’s peer-review process change creator behavior and audience growth?

What counts as “macro motivation” in a math explanation?

What does “micro motivation” look like when introducing new technical ideas?

How do clarity-focused lessons typically manage examples and attention?

What distinguishes the two meanings of novelty in math teaching?

What makes a lesson memorable, according to the organizers’ criteria?

Review Questions

- Pick one example of macro motivation from the criteria list and explain why it would keep a learner engaged before any technical content appears.

- Describe a micro-motivation technique (intuition-first, properties-first, or naive-to-refined). Which one best supports learning for difficult definitions, and why?

- How would you redesign a confusing math explanation to improve clarity without adding more content? Mention concrete-example strategy and attention management.

Key Points

1. Algorithmic peer review can do more than rank work; it can synchronize uploads and create shared viewer bases that boost reach.

2. Motivation should be engineered at two scales: a strong overall hook and earned local transitions into each new technical idea.

3. Clarity improves when concrete examples stay visible while general rules are delayed until intuition forms.

4. Novelty matters both in presentation (distinct voice) and in substance (rare topics, unique perspectives, or proof-friendly models).

5. Memorability often comes from a question that feels intrinsically fun or a moment of insight that resolves a tension.

6. Community judging and careful selection reduce the risk of one person’s taste dominating winner choices.

7. Even production elements like background music can affect clarity during dense technical sections.