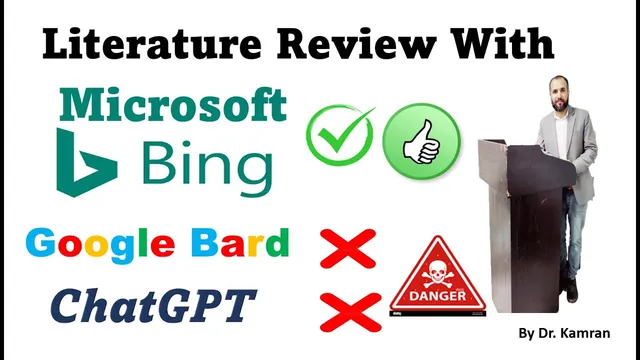

Which one is best for a literature review: ChatGPT, Bard, or Bing?

Based on Research and Analysis's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Verify every AI-generated citation in Google Scholar before using it in a literature review.

Briefing

In this comparison, choosing an AI tool for a literature review came down to one practical test: whether the citations it generates actually exist in academic databases. The side-by-side experiment used the topic “the relationship between green HRM and employee innovative behavior.” Microsoft Bing’s references could all be located in Google Scholar, while ChatGPT and Google Bard each produced citations that failed verification.

The process began with ChatGPT. After ChatGPT generated a literature review and a bibliography, each reference was checked in Google Scholar. Several citations could not be located at all, and some were described as entries that “do not even exist anywhere” in Scholar. This suggests the tool sometimes fabricates or otherwise mis-specifies bibliographic details. Only some of the references were found, so the bibliography was unreliable enough that a researcher would have to treat every entry with caution.

Google Bard was tested next with the same prompt and the same request for a bibliography. Bard returned only two references, fewer than expected. When they were checked in Google Scholar, one could be found and the other could not. This mismatch between Bard’s bibliography and what Scholar returned reinforced the same concern: its citation list did not consistently correspond to verifiable sources.

Microsoft Bing served as the final comparison. Bing generated a literature review with a bibliography, and both of the references it provided were successfully located in Google Scholar. Because every Bing citation passed verification, it was the most dependable of the three tools in this specific test.

The comparison also touched on recency. Bing’s citations were described as the “latest,” whereas ChatGPT was characterized as more likely to rely on information available before 2022. The distinction matters because literature reviews depend on both accuracy and recency. A tool that returns verifiable, up-to-date references reduces the manual work of hunting for sources.

The bottom line: for AI-assisted literature reviews, Microsoft Bing outperformed ChatGPT and Google Bard in citation reliability during this check. Even so, the recommendation is not to accept AI-generated bibliographies blindly. The safest workflow is to double-check every citation in Google Scholar (or another database) before using it in a final literature review, regardless of which tool is used.

Cornell Notes

An accuracy check of AI-generated citations found that Microsoft Bing produced a bibliography that matched entries in Google Scholar for a literature review on “green HRM” and “employee innovative behavior.” ChatGPT generated multiple references that could not be found in Google Scholar, indicating unreliable citation details. Google Bard also showed partial failure, with fewer references returned and at least one citation not appearing in Scholar. The practical takeaway is that citation verification is essential, but Bing performed best in this specific test. Recency may also favor Bing, while ChatGPT was described as more likely to draw from older data.

What criterion determined which AI tool was “best” for a literature review?

Why did ChatGPT perform poorly in the citation verification step?

How did Google Bard’s citation output compare to ChatGPT’s?

What made Microsoft Bing the most reliable option in this test?

What role did “latest references” play in the conclusion?

Review Questions

- When evaluating AI tools for literature reviews, what specific verification step should be performed before accepting citations?

- Which tool produced citations that failed to appear in Google Scholar, and what does that imply about using AI-generated bibliographies?

- How did the number of references returned by Google Bard affect the reliability assessment in this experiment?

Key Points

1. Verify every AI-generated citation in Google Scholar before using it in a literature review.
2. ChatGPT produced multiple references that could not be found in Google Scholar, indicating poor citation accuracy.
3. Google Bard returned fewer references and still produced at least one citation that did not appear in Google Scholar.
4. Microsoft Bing produced citations that matched entries in Google Scholar for the tested topic, making it the most reliable in this comparison.
5. Recency may differ by tool; Bing was described as providing more recent references than ChatGPT.
6. Even when a tool performs well on citation checks, researchers should still double-check each source to avoid errors.