Why “probability of 0” does not mean “impossible” | Probabilities of probabilities, part 2

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

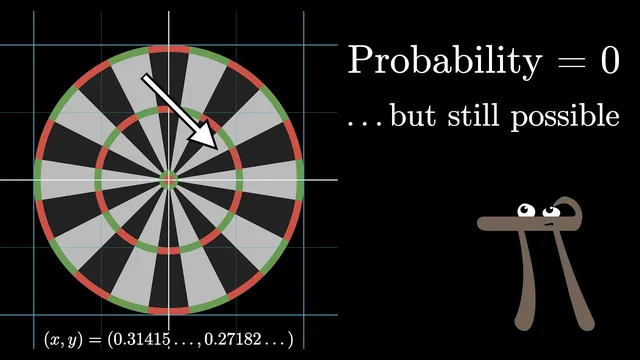

In continuous probability, exact point probabilities like P(h = 0.7) are 0, even though the parameter still has total probability 1 across all values.

Briefing

Assigning probabilities to exact real values of an unknown parameter leads to an apparent paradox: there are uncountably many candidate values, so the total probability “blows up” if each point gets some positive weight, yet it seems to collapse to zero if each point gets probability 0. The fix is to stop treating individual real numbers as the basic units of probability and instead treat intervals (ranges) as the fundamental objects.

The setup begins with a weighted coin whose true probability of heads is an unknown number h between 0 and 1. After observing 10 tosses with 7 heads, the natural question becomes: what is the probability that h equals 0.7? Phrased that way, the question asks for a “probability of a probability,” and it also demands an answer about a single point in a continuum. In a continuous setting, any specific exact value like h = 0.7 has probability 0, even though the overall parameter h still has total probability 1 across all possibilities. If one tries to assign nonzero probability to each exact value, the sum over uncountably many points cannot remain finite.
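A short worked version of this argument, as a sketch under the simplifying assumption (not stated in the source) that every exact value receives the same weight $\varepsilon > 0$. Pick any $N$ distinct values $x_1, \dots, x_N$ in $[0, 1]$:

$$
P\bigl(h \in \{x_1, \dots, x_N\}\bigr) = \sum_{i=1}^{N} P(h = x_i) = N\varepsilon > 1 \quad \text{whenever } N > 1/\varepsilon .
$$

No probability can exceed 1, and $[0, 1]$ contains more than $N$ distinct values for every $N$, so under this assumption the only consistent choice is $\varepsilon = 0$.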

The resolution comes from coarse-graining: group possible values of h into buckets, such as 0.80–0.85, and ask for the probability that h falls inside each bucket. Crucially, the probability is represented by the area of each bar, not its height. As buckets get narrower, the probability of landing in any single tiny interval shrinks toward 0, but the bar’s height, which is that probability divided by the bucket width, approaches a stable “density” level because the two shrink together. In the limit, the collection of bars becomes a smooth curve: the probability density function (PDF).
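The narrowing-bucket behavior can be checked numerically. The sketch below is only an illustration: it assumes a hypothetical density f(h) = 6h(1 − h) on [0, 1], chosen because its cumulative area has a simple closed form, and the function names are made up for this example. It tracks the bucket that contains h = 0.7 as the number of buckets grows.

```python
# Hypothetical density on [0, 1], chosen only for illustration:
# f(h) = 6 h (1 - h), whose cumulative area up to h is 3h^2 - 2h^3.

def density(h):
    """Height of the assumed density curve at h."""
    return 6 * h * (1 - h)

def cdf(h):
    """Area under the assumed density from 0 to h."""
    return 3 * h**2 - 2 * h**3

def bucket_stats(x, n_buckets):
    """Return (probability, bar height) for the equal-width bucket containing x."""
    width = 1.0 / n_buckets
    index = min(int(x * n_buckets), n_buckets - 1)
    left = index * width
    right = left + width
    prob = cdf(right) - cdf(left)   # bar area = probability of the bucket
    height = prob / width           # bar height = probability per unit length
    return prob, height

for n in (10, 100, 1000, 10000):
    prob, height = bucket_stats(0.7, n)
    print(f"{n:>6} buckets: probability = {prob:.6f}, height = {height:.4f}")

print(f"density at 0.7 = {density(0.7):.4f}")
```

Under this assumed density, the printed probabilities keep shrinking while the heights settle near the density value at 0.7, which is the pattern the paragraph above describes.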

This reframes what the vertical axis means. The height is not a probability; it is probability per unit length along the h-axis. The total probability remains 1 because the total area under the curve across [0, 1] stays fixed. Under this rule, the probability that h lies between two values a and b is the area under the PDF between a and b. A single point corresponds to an interval of width 0, so its probability is 0, while the probabilities of any non-overlapping intervals that together cover [0, 1] still sum to 1.
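Written as formulas, with $f$ denoting the PDF of h (the symbol $f$ is introduced here for illustration and does not appear in the source), the area rule reads:

$$
P(a \le h \le b) = \int_a^b f(x)\,dx, \qquad \int_0^1 f(x)\,dx = 1, \qquad P(h = c) = \int_c^c f(x)\,dx = 0 .
$$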

The shift is not just a visualization trick; it reflects a change in the underlying “rules” for continuous probability. In discrete cases, probabilities of individual outcomes can be added. In continuous cases, probabilities of ranges are the primitive quantities, and individual points are treated as degenerate ranges. Measure theory provides the rigorous framework that unifies these discrete and continuous viewpoints by defining how probability assignments behave across sets.

With that foundation in place, the original coin question becomes well-posed: after observing tosses, the goal is to find the PDF for h. Once that density is known, it becomes straightforward to answer questions like the probability that the true heads probability lies between 0.6 and 0.8. The next step is to compute that posterior density from the observed data.
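Once a density is in hand, a range question reduces to a numerical area. The sketch below assumes, purely for illustration, a density proportional to h^7 (1 − h)^3; the source does not derive the posterior in this part, so treat that shape and the helper names as assumptions. The area is approximated with a simple midpoint rule.

```python
# Assumed, illustrative density shape: proportional to h^7 * (1 - h)^3.
# The source leaves the actual posterior to the next part.

def unnormalized(h):
    """Unnormalized curve; only its shape matters before rescaling."""
    return h**7 * (1 - h)**3

def integrate(f, a, b, steps=100_000):
    """Midpoint-rule approximation of the area under f between a and b."""
    width = (b - a) / steps
    return sum(f(a + (i + 0.5) * width) for i in range(steps)) * width

# Rescale so the total area over [0, 1] is 1, as a PDF requires.
total_area = integrate(unnormalized, 0.0, 1.0)

def pdf(h):
    """Normalized density: probability per unit length at h."""
    return unnormalized(h) / total_area

def prob_between(a, b):
    """P(a <= h <= b) as the area under the PDF between a and b."""
    return integrate(pdf, a, b)

print("P(0.6 <= h <= 0.8) =", prob_between(0.6, 0.8))
print("P(h = 0.7)         =", prob_between(0.7, 0.7))  # zero-width interval
print("total probability  =", prob_between(0.0, 1.0))
```

The zero-width call returns 0 because the interval has no width, while the full range integrates to approximately 1, matching the area rule for continuous probability.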

Cornell Notes

The paradox of “probability of 0” comes from trying to assign probability to exact real values of an unknown parameter h. In a continuum, each exact value (like h = 0.7) has probability 0, yet the parameter still has total probability 1 across all possibilities. The cure is to treat intervals as the basic units: build buckets for h, and represent probability by bar area (width × height). As buckets get finer, the bars’ heights converge to a probability density function (PDF), where probabilities for ranges equal the area under the curve. This makes statements about h precise—e.g., P(0.6 ≤ h ≤ 0.8) is an area—while point probabilities remain 0.

Why does asking for P(h = 0.7) create a paradox in continuous settings?

How does “probability as area” resolve the issue?

What does the height of a PDF mean if it’s not a probability?

How do continuous probability rules differ from discrete ones?

What role does measure theory play in making this rigorous?

After observing coin tosses, what is the correct target object to compute?

Review Questions

- In a continuum, why must probabilities of exact values be treated differently from probabilities of intervals?

- Explain why representing probability by bar height fails as buckets get infinitely thin, and why representing probability by bar area succeeds.

- Given a PDF for h on [0, 1], how would you compute P(0.6 ≤ h ≤ 0.8) and why is P(h = 0.7) equal to 0?

Key Points

1. In continuous probability, exact point probabilities like P(h = 0.7) are 0, even though the parameter still has total probability 1 across all values.

2. The basic units of probability in a continuum are intervals (ranges), not individual real numbers.

3. Represent probability for a range by the area under a curve; the PDF’s height is density, not probability.

4. As bins get narrower, bucket probabilities shrink but density heights stabilize, producing a smooth PDF in the limit.

5. Probability for a range [a, b] equals the area under the PDF between a and b; a single point has zero-width area.

6. Discrete “sum of probabilities” logic changes to continuous “integral of densities” logic because the underlying rule system is different.

7. Measure theory provides the rigorous framework that unifies discrete and continuous probability assignments.