Words to Bytes: Exploring Language Tokenizations | Sam Gbafa | OpenAI Scholars Demo Day 2021

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Tokenization choices can materially change how well a language model learns, but the “best” granularity depends on data size and model capacity. Sam Gbafa’s project tested word-, subword-, character-, and byte-level tokenizations using the same decoder-only transformer setup and found that no single granularity wins automatically, especially when the training corpus is relatively small.

Gbafa built a baseline around standard autoregressive sequence modeling: the model predicts the next token from prior context, learning statistical relationships from a corpus such as Wikipedia. The project then zoomed in on tokenization as a key lever for sequence models. Prior work suggested that finer-grained tokenizations can outperform coarser ones, and that learning segmentations can improve generalization. To test those claims directly, Gbafa trained tokenizers on the same dataset (Wall Street Journal and Wikipedia articles) and compared how different token granularities affected training perplexity and validation behavior.
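Perplexity, the metric compared across tokenizations here, can be illustrated with a minimal sketch. The probabilities below are invented for illustration and are not values from the talk; the function simply exponentiates the mean negative log-probability the model assigned to each correct next token.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp(mean negative log-probability per token)."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)

# Hypothetical probabilities assigned to the correct next token at each step.
uniform_guess = [0.25, 0.25, 0.25, 0.25]  # model is unsure among 4 options
confident = [0.9, 0.8, 0.95, 0.85]        # model usually predicts correctly

ppl_uniform = perplexity(uniform_guess)   # close to 4.0
ppl_confident = perplexity(confident)     # lower is better

print(ppl_uniform, ppl_confident)
```

Because perplexity is averaged per token, scores from tokenizers with different token sizes are not directly like-for-like, which is one reason such comparisons need care.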

In concrete terms, word tokenization splits on whitespace; subword tokenization breaks words into smaller pieces (for example, “swimming” into “swim” and “-ming”); character tokenization splits text into individual characters; and byte tokenization represents characters via their underlying byte encodings (in UTF-8, a Unicode character takes one to four bytes, and most English characters map to one byte). The experiments used a 12-layer decoder-only transformer with about 80 million parameters, holding compute and context length constant across tokenization schemes. Vocabulary sizes differed by design: the word tokenizer used a vocabulary of roughly 10,000 words, the subword tokenizer used 40,000 units, and the character tokenizer used a much smaller set of unique characters.
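The four granularities can be sketched in a few lines of Python. This is an illustration only, not the tokenizers used in the project: the subword splitter is a toy greedy longest-match routine, and its two-entry vocabulary is a hypothetical stand-in for a learned subword vocabulary.

```python
def word_tokens(text):
    """Word level: split on whitespace."""
    return text.split()

def subword_tokens(word, vocab):
    """Toy greedy longest-match split; falls back to single characters."""
    pieces, i = [], 0
    while i < len(word):
        for j in range(len(word), i, -1):
            # Take the longest piece in the vocabulary, or one character.
            if word[i:j] in vocab or j == i + 1:
                pieces.append(word[i:j])
                i = j
                break
    return pieces

def char_tokens(text):
    """Character level: one token per Unicode code point."""
    return list(text)

def byte_tokens(text):
    """Byte level: one token per UTF-8 byte (integers 0..255)."""
    return list(text.encode("utf-8"))

sample = "na\u00efve swimming"   # "naïve swimming"
toy_vocab = {"swim", "ming"}     # hypothetical subword vocabulary

words = word_tokens(sample)                        # 2 tokens
subwords = subword_tokens("swimming", toy_vocab)   # ['swim', 'ming']
chars = char_tokens(sample)                        # 14 tokens
raw_bytes = byte_tokens(sample)                    # 15 tokens: 'ï' is 2 bytes

print(len(words), subwords, len(chars), len(raw_bytes))
```

The same string grows from 2 tokens at the word level to 15 at the byte level, while the byte vocabulary never exceeds 256 entries.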

The results complicated the expectation that subwords would always beat characters. Subword perplexity was not consistently better, and character tokenization performed better in this particular setup. Gbafa attributed the mismatch partly to dataset scale: with a relatively small corpus, a 40,000-unit subword vocabulary can be too large, leaving many subword units undertrained. Validation perplexity was also high in at least one run, suggesting overfitting and weak generalization rather than a clean separation of tokenization quality.
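One rough way to check whether a vocabulary is too large for a corpus is to count how many vocabulary entries appear only a handful of times in the tokenized training data. The sketch below is an assumption about how such a check might look, not a procedure described in the talk; the `min_count` threshold and the toy data are arbitrary.

```python
from collections import Counter

def rare_fraction(token_stream, vocab, min_count=5):
    """Fraction of vocabulary entries seen fewer than min_count times."""
    counts = Counter(token_stream)
    rare = sum(1 for tok in vocab if counts[tok] < min_count)
    return rare / len(vocab)

# Toy tokenized corpus and vocabulary (hypothetical values).
toy_vocab = ["a", "b", "c", "d"]
toy_tokens = ["a"] * 10 + ["b"] * 6 + ["c"] * 2   # "d" never appears

frac = rare_fraction(toy_tokens, toy_vocab, min_count=5)
print(frac)  # "c" and "d" fall below the threshold
```

A high rare fraction would suggest that many embeddings receive too few updates, which is consistent with the undertraining explanation given above.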

The project’s takeaways were practical. Smaller segmentations can encode more nuanced information, but they may require a larger model to build useful representations across layers: transformers often construct word-level meaning gradually, so character-level modeling may need more capacity and training signal. Context length also matters, because representing the same amount of information with smaller tokens effectively demands longer contexts. Gbafa further emphasized that the number of subword units is a critical hyperparameter, so tokenization sweeps should include multiple subword vocabulary sizes, and that byte-level approaches likely need larger and more diverse multilingual data to pay off.
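The context-length point can be made concrete with a small sketch: for a fixed window measured in tokens, finer tokenizations cover less of the same text. The window size and sentence below are arbitrary choices for illustration, not settings from the experiments.

```python
def coverage(text, tokenizer, context_len):
    """Fraction of the text's tokens that fit in a window of context_len tokens."""
    n_tokens = len(tokenizer(text))
    return min(1.0, context_len / n_tokens)

text = "the model predicts the next token from prior context"
context_len = 9  # hypothetical window size, in tokens

word_cov = coverage(text, str.split, context_len)   # whole sentence fits
char_cov = coverage(text, list, context_len)        # only the first few characters fit
byte_cov = coverage(text, lambda s: list(s.encode("utf-8")), context_len)

print(word_cov, char_cov, byte_cov)
```

With the window held constant, as in the experiments, a character- or byte-level model sees far less text per example than a word-level model.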

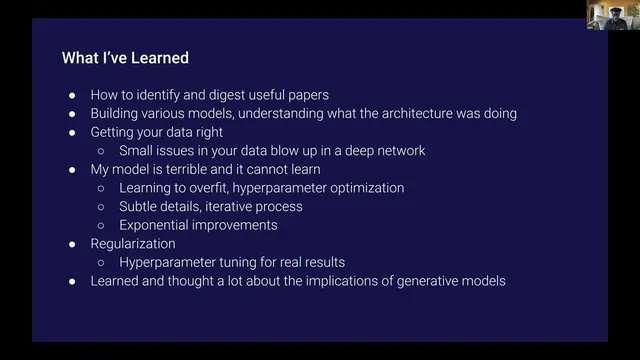

Beyond the experiments, Gbafa described learning outcomes from the OpenAI Scholars program: how to extract value from research papers, how data issues can derail training, and how overfitting, regularization, and hyperparameter optimization drive iterative improvements. The work also prompted reflection on the societal implications of generative models, including their effects on democracy and public trust.

Cornell Notes

Sam Gbafa’s project tested how tokenization granularity affects an autoregressive language model’s learning. Using a 12-layer, ~80M-parameter decoder-only transformer with constant compute and context length, he compared word, subword, character, and byte tokenizations trained on Wall Street Journal and Wikipedia articles. Contrary to the expectation that subwords always outperform characters, character tokenization performed better in this setup, likely because the training corpus was small relative to the 40,000-unit subword vocabulary. The findings suggest that finer segmentations require enough data, appropriate subword vocabulary sizing, and often more model capacity and/or longer context to generalize well. The work also highlights that tokenization hyperparameters and dataset scale jointly determine performance, not granularity alone.

Why does tokenization granularity matter for an autoregressive language model?

What experimental setup did Gbafa keep constant, and why is that important?

What did the project find about subword vs. character tokenization?

How do vocabulary size and dataset size interact in these results?

Why might smaller tokens require longer context or more capacity?

How does this work connect to multimodal modeling and segmentation learning?

Review Questions

- How would you expect training perplexity and validation perplexity to change if you increase subword vocabulary size without increasing dataset size?

- What trade-offs arise when switching from word tokenization to character or byte tokenization in terms of sequence length, context requirements, and model capacity?

- Which experimental controls in Gbafa’s setup help isolate the effect of tokenization, and which variables might still confound the comparison?

Key Points

1. Tokenization granularity (words, subwords, characters, bytes) can significantly affect language model learning outcomes even when the model architecture and training budget are held constant.

2. Granularity alone does not determine performance; in Gbafa’s experiments, character tokenization outperformed subword tokenization under a small-corpus regime, contrary to the expectation that subwords would win.

3. A large subword vocabulary (e.g., 40,000) can underperform when training data is limited, because many subword units remain poorly learned.

4. Smaller tokens often require longer context length to represent the same amount of information and may need more model capacity to build higher-level representations.

5. Subword vocabulary size is a critical hyperparameter; meaningful comparisons should sweep multiple subword vocabulary sizes rather than rely on a single segmentation scheme.

6. Byte-level tokenization is closely tied to Unicode encoding and may be more promising with larger, more diverse multilingual data than with English-only corpora.

7. Training stability and generalization are strongly influenced by overfitting and regularization, so validation behavior is as important as training perplexity.