Write the Docs Portland 2017: Even Naming This Talk Is Hard by Ruthie BenDor

Based on the Write the Docs video on YouTube.

Briefing

Naming in software is hard because names are guesswork that must fit human mental models—and when they miss, the damage shows up as confusion, frustration, and avoidable technical debt. Ruthie BenDor frames naming as one of the two “hard problems” in computer science (alongside cache invalidation), then argues that the real issue isn’t just cleverness or taste. Software is built largely by extending and amending other people’s work, so every new abstraction arrives with a name that becomes the “sticky note” on the filing system in someone’s head. If that label doesn’t map cleanly to what the abstraction does—or clashes with a reader’s context—people don’t just misunderstand; they start doubting their own competence and stop trusting the codebase.

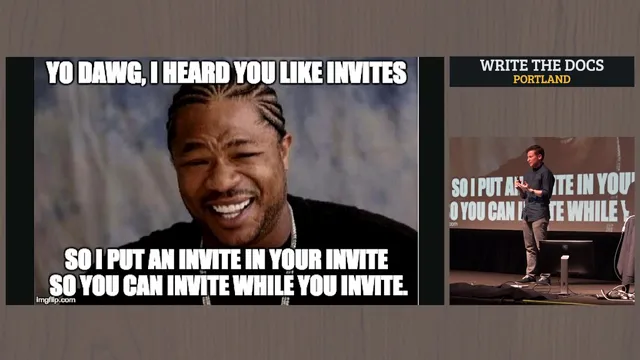

Several concrete examples illustrate how naming fails when teams reuse words without respecting the meaning they carry. In one story, an events product avoids the heavy feel of “event” by calling its events “invites,” only to end up with an email method that sends “invites” to people who have been “invited to an invite.” In another, Redux’s “containers” collide with the vocabulary of a waste-hauling business, where the word already points at dumpsters; the term feels obvious in one domain and jarring in the other. BenDor also points to the broader problem of descriptive naming: reducing a complex concept to a single word inevitably drops nuance, and the name then evokes assumptions the author cannot predict in readers who may not share the same context.
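The “invited to an invite” problem is easy to see in code. The sketch below is a hypothetical reconstruction, not code from the talk: the `Invite` and `Event` classes, the function names, and the message format are all assumptions made for illustration. The second half shows one possible rename, not one the talk prescribes.

```python
from dataclasses import dataclass, field


@dataclass
class Invite:
    """Hypothetical model: the product's word for what is really an event."""
    title: str
    guest_emails: list[str] = field(default_factory=list)


def send_invites(invite: Invite) -> list[str]:
    """Ambiguous name: does this send Invite objects, or invitations to one?"""
    return [
        f"{email}: you are invited to the invite '{invite.title}'"
        for email in invite.guest_emails
    ]


@dataclass
class Event:
    """The same data, named for what it is (an assumed alternative)."""
    title: str
    guest_emails: list[str] = field(default_factory=list)


def send_invitations(event: Event) -> list[str]:
    """With this naming, 'invitation' has exactly one meaning in the codebase."""
    return [
        f"{email}: you are invited to the event '{event.title}'"
        for email in event.guest_emails
    ]
```

Both functions do the same work; only the second can be read aloud without the listener asking which “invite” is meant.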

She adds that naming mistakes persist because teams often don’t experience the pain directly. A bad name can reflect an empathy failure (“it doesn’t affect me”), a beginner’s mind failure (the author forgets what it was like not to understand), or a localization failure (people don’t recognize that the term carries emotional or cultural baggage). She cites “blacklist” and “whitelist” as an example where supposedly neutral technical terminology can still trigger strong connotations, and notes that standards bodies have moved toward “allow lists” and “block lists.”

Beyond emotional and contextual issues, BenDor argues that the first name is often wrong because teams don’t yet know the abstraction’s essence. She uses a Hitchhiker’s Guide to the Galaxy analogy: like a whale materializing in an impossible place, early projects make do with provisional names while the real shape of the work emerges. That guesswork is also why technical debt and refactoring are unavoidable: only after building do teams learn what the software is meant to do and whether the naming—and the design behind it—fits.

So what makes a name “good”? BenDor treats it as a balancing act and a judgment call: names shouldn’t be too long or too short, should follow style guides, avoid unnecessary ambiguity, and should be easy to pronounce and memorable without being misleading. Good names create “aha moments” by connecting an abstract concept to something tangible and setting correct expectations. Tools can help with mechanics—searching for collisions, enforcing style, catching inconsistencies—but they can’t replace the human judgment required for the hard part. Her closing message is practical: developers should treat naming as part of the craft, documentarians should flag missed marks, and everyone should remember that a bad name isn’t personal—it’s an opportunity to improve shared understanding.
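The mechanical side of that checklist is the part a script can handle. The following is a minimal sketch, assuming snake_case as the house style; the function `check_names`, the length bounds, and the `domain_terms` glossary are hypothetical and not taken from the talk. It flags style violations, extreme lengths, duplicates, and collisions with words that already mean something in the business domain. It cannot tell whether a name sets the right expectations.

```python
import re

# Assumed house style: lowercase words separated by single underscores.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")


def check_names(names, domain_terms, min_len=3, max_len=30):
    """Return (name, problem) pairs for purely mechanical naming issues."""
    problems = []
    seen = set()
    for name in names:
        if not SNAKE_CASE.match(name):
            problems.append((name, "not snake_case"))
        if not (min_len <= len(name) <= max_len):
            problems.append((name, "length outside bounds"))
        if name in seen:
            problems.append((name, "duplicate name"))
        seen.add(name)
        # Collision: a word in the name is already taken by the business domain.
        for word in name.lower().split("_"):
            if word in domain_terms:
                problems.append((name, f"collides with domain term '{word}'"))
    return problems


if __name__ == "__main__":
    # Hypothetical glossary for a waste-hauling business.
    glossary = {"container"}
    for name, problem in check_names(["container", "sendInvites", "x"], glossary):
        print(f"{name}: {problem}")
```

A report like this only narrows the search. Deciding whether `container` should be renamed, and to what, remains the judgment call the talk describes.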

Cornell Notes

Ruthie BenDor argues that software naming is difficult because names are the first “mental sticky notes” people use to file new abstractions, and those labels must match human context. Naming is guesswork early in a project, when teams don’t yet know the abstraction’s true essence, so the first label often becomes wrong and later work accumulates around it. Bad names persist due to empathy failures, beginner’s mind fading, cultural or emotional blind spots, and reluctance to pay the cost of renaming. A good name is a balancing act—clear enough to set correct expectations, not overly long, consistent with style, and memorable without being misleading. Since tools can only enforce mechanics, improving names depends on human judgment and feedback loops from developers and documentarians.

Why does BenDor treat naming as a core engineering problem rather than a cosmetic one?

How do the “invites” and Redux “containers” examples show naming pitfalls in practice?

What does BenDor mean by “descriptive naming is hard,” and why can’t one word capture everything?

Why do bad names persist even when teams care about correctness?

How does BenDor connect naming guesswork to technical debt and refactoring?

What criteria does BenDor propose for judging whether a name is good?

Review Questions

- Give two reasons BenDor says naming is guesswork early in a project, and explain how that leads to technical debt.

- Describe at least three failure modes that cause bad names to persist even when teams want quality.

- What makes a name “good” in BenDor’s framework, and how does that differ from what automated tools can do?

Key Points

1. Names function as the first organizing label for new abstractions, so mismatches between a name and a reader’s mental model create real confusion.

2. Software naming failures often come from context gaps: domain language, emotional connotations, and cultural assumptions can make “technically correct” terms feel wrong.

3. A single word can’t capture the full meaning of an abstraction, so descriptive naming inevitably drops nuance and invites incorrect inference.

4. Early naming is provisional because teams don’t yet know an abstraction’s essence; later learning exposes mismatches that trigger refactoring needs.

5. Bad names persist through empathy failures, beginner’s mind fading, reluctance to pay renaming costs, and underestimating the ROI of better naming.

6. Automated tools can improve naming mechanics (style enforcement, collision checks, consistency), but they can’t replace the human judgment required for clarity and expectation-setting.

7. Good names create “aha moments” by connecting abstract behavior to tangible expectations and by illuminating what the concept is responsible for.