A brief history of programming...

Based on Fireship's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

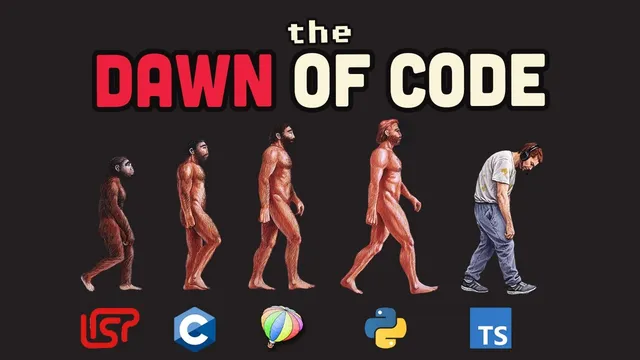

Programming’s origin story starts with binary—electricity behaving like on/off—then accelerates through a chain of inventions that make machines easier to command. Once people realized computers don’t “understand” language but instead react to voltage patterns, the focus shifted to how humans could reliably translate ideas into sequences of ones and zeros. That translation problem—how to express instructions in a form humans can write and machines can execute—drives nearly every major programming milestone, from assembly language to compilers and beyond.

In the early era, computing relied on hardware-level representations: vacuum tubes and punch cards encoded bits, and every number was stored as a binary value. The next leap came when programmers standardized how groups of bits represent larger numbers: eight bits form a byte, which can count from 0 to 255, making binary practical for everyday computation. Assembly language then reduced the pain of writing raw bit patterns, even if it still forced developers to think close to the machine. The real turning point arrived with the idea that computers could process readable instructions: a compiler acts like a translator, taking human-friendly code, performing heavy analysis, and outputting machine code that the computer can run without further human involvement.
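As a small illustration of the eight-bit arithmetic mentioned above, the sketch below combines a list of bits into a number. The function name `bits_to_int` and the sample bit patterns are illustrative assumptions, not something taken from the video.

```python
def bits_to_int(bits):
    """Combine a sequence of 0/1 values (most significant bit first) into an integer."""
    value = 0
    for bit in bits:
        # Shifting left by one place doubles the value; then add the new bit.
        value = value * 2 + bit
    return value


# Eight bits that are all "on" give the largest value a byte can hold.
largest_byte = bits_to_int([1] * 8)

# An arbitrary example pattern: 64 + 1.
example = bits_to_int([0, 1, 0, 0, 0, 0, 0, 1])

print(largest_byte)            # 255
print(example)                 # 65
print(format(example, "08b"))  # 01000001
```

Each extra bit doubles the range, which is why eight bits cover the 256 values from 0 to 255.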

From there, programming languages diversified fast. Early high-level languages targeted different audiences (scientific work, business, and government), while Lisp introduced a radically different model: code as data, everything as lists, and execution via an interpreter that evaluates expressions one at a time. Lisp also pioneered “garbage collection,” reducing the programmer’s burden around memory management. As the field matured, debates hardened into design philosophies. Dijkstra’s push against go-to statements helped steer the culture toward readability and maintainability, while Dennis Ritchie’s C delivered speed and direct memory access: powerful enough to “shoot yourself in the foot,” but foundational for systems software.
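To make the "code as data" idea a little more concrete, here is a minimal sketch in Python rather than Lisp. It assumes a toy expression format (nested lists with the operator first) and a hypothetical `evaluate` function; it is not how any real Lisp is implemented, only an illustration of a program being an ordinary list that an interpreter walks.

```python
import operator

# Hypothetical operator table for this toy language.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def evaluate(expr):
    """Evaluate a nested-list expression such as ["+", 1, ["*", 2, 3]]."""
    if isinstance(expr, (int, float)):
        return expr  # Numbers evaluate to themselves.
    op, *args = expr
    values = [evaluate(arg) for arg in args]  # Evaluate sub-expressions first.
    result = values[0]
    for value in values[1:]:
        result = OPS[op](result, value)
    return result


# The "program" is just a list, so it can be inspected or rewritten like any data.
program = ["+", 1, ["*", 2, 3]]
first_result = evaluate(program)   # 1 + (2 * 3)

program[0] = "*"                   # Rewrite the program by editing the list.
second_result = evaluate(program)  # 1 * (2 * 3)

print(first_result, second_result)  # 7 6
```

Because the program and the data share one structure, code that generates or transforms other code needs no special machinery.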

C’s influence spread through Unix, where small programs do one job well and connect via pipes, turning the command line into a kind of programming religion. Then C++ layered abstraction on top of C, adding objects, classes, and inheritance—features that fueled major industries like games, browsers, and databases, while also sparking endless arguments. The 1980s and 1990s brought a crowded ecosystem: Turbo Pascal with an integrated development environment, Ada for the military, Erlang for telecom systems, and Smalltalk as an early pure object-oriented language that others copied even if it faded.
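The Unix idea of small programs connected via pipes can be imitated inside a single Python process with generators. This is only an analogy under stated assumptions: the stage names `grep`, `field`, and `count` and the sample log lines are hypothetical, and no operating-system pipes are involved.

```python
from collections import Counter


def grep(pattern, lines):
    """Pass through only the lines that contain the pattern."""
    return (line for line in lines if pattern in line)


def field(index, lines):
    """Extract one whitespace-separated field from each line."""
    return (line.split()[index] for line in lines)


def count(items):
    """Tally how often each item occurs."""
    return Counter(items)


# Made-up sample input standing in for a log file.
log_lines = [
    "error disk full",
    "info started",
    "error disk full",
    "warn slow",
    "error timeout",
]

# Each stage does one job and hands its output to the next one.
tally = count(field(1, grep("error", log_lines)))

print(tally["disk"], tally["timeout"])  # 2 1
```

Each stage knows nothing about the others, so stages can be reordered or swapped without rewriting the rest of the chain.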

The 1990s emphasized portability and developer productivity. Python prioritized readability by making indentation part of the syntax; Java pursued “write once, run anywhere” using a virtual machine; JavaScript began as a quick way to animate browser buttons but later metastasized into servers, phones, databases, and even spacecraft. The web’s rise made PHP common, while JavaScript frameworks triggered wars over tooling. Later language generations aimed to fix earlier pain points: Swift as a successor to Objective-C, Kotlin to Java, TypeScript to JavaScript, and newer systems languages trying to tame the risks of C.

The modern twist is AI-assisted coding. Autocomplete evolved into linters, refactors, and full function generation, leading some to declare programming “dead.” The counterpoint is that the real work has never been typing code—it’s thinking. Programming keeps changing the interface to that thinking, and it will keep evolving as tools and keyboards shift. The current wave, including an AI coding agent integrated into JetBrains IDEs, is framed as another step in that ongoing transformation rather than an end to the craft.

Cornell Notes

Programming’s evolution tracks a single problem: turning human intent into machine-executable instructions. Binary made computation possible, but writing raw bit patterns was impractical, so assembly language and then compilers emerged to translate readable code into machine code. Language design then split into competing priorities—low-level control (C), readability and maintainability (Python, anti–go-to culture), portability (Java’s virtual machine), and rapid web interactivity (JavaScript). Lisp’s “code as data” and interpreter model enabled features like garbage collection, while Unix popularized the idea of small programs connected by pipes. Today’s AI coding tools automate more typing and structure, but the core job remains thinking, not keystrokes.

Why did binary become the foundation of programming, and what changed once electricity arrived?

What is the practical leap from assembly language to compilers?

How did Lisp’s design differ from mainstream approaches, and what did it enable?

Why did C become so influential despite its risks?

How did JavaScript’s origin as a “temporary” browser language lead to a full-stack ecosystem?

What’s the argument against “programming is dead” in the AI era?

Review Questions

- Which programming milestone most directly reduced the need to manually translate instructions into machine code, and why?

- Compare two language design philosophies mentioned here (e.g., readability vs portability). What concrete mechanisms support each?

- How does the “programming is dead” claim get challenged, and what does the alternative definition of the job emphasize?

Key Points

1. Binary representation made computation practical by mapping electricity’s on/off behavior to 1s and 0s.

2. Assembly language reduced the pain of writing raw bit patterns, but compilers removed the need for humans to manually translate into machine code.

3. Lisp’s “code as data” and interpreter model enabled features like garbage collection and a different way of structuring programs.

4. C’s combination of speed and direct memory access made it foundational, and Unix amplified its influence through small tools connected by pipes.

5. Language ecosystems repeatedly formed around competing priorities: readability (Python), portability (Java), and rapid web interactivity (JavaScript).

6. The web era turned JavaScript into a dominant platform, while framework competition became a major cultural and technical battleground.

7. AI-assisted coding automates more keystrokes and scaffolding, but the core work remains thinking, so programming continues to change rather than end.