A Surprising Way Your Brain Is Wired

Based on Artem Kirsanov's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

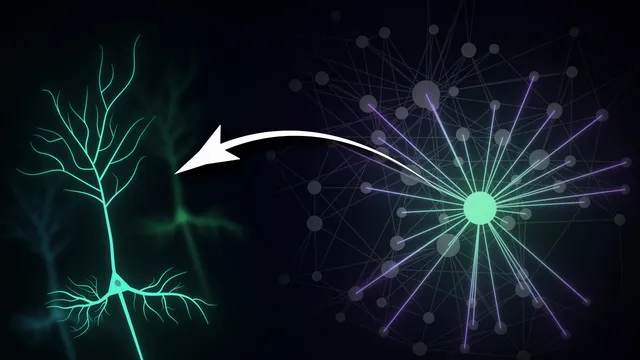

Briefing

Small-world network architecture—high local clustering paired with short global path lengths—offers a practical blueprint for how brains (and many other complex systems) balance specialization with fast integration. The core insight is that you don’t need to connect everything to everything to get efficient communication. Instead, networks can preserve tightly knit local neighborhoods while adding a small number of long-range “shortcuts” that drastically reduce the number of steps needed to travel between distant parts.

In graph terms, nodes represent elements (from neurons to brain regions), and edges represent connections (synapses, white-matter tracts, or functional coordination). Two measurements capture the tradeoff. Average path length is the mean number of edges on the shortest route between pairs of nodes, so it quantifies how quickly information can move across the network: fewer hops means better global communication. Clustering coefficient quantifies local “groupiness” as the fraction of a node’s neighbor pairs that are also connected to each other; when that fraction is high, the network forms dense local modules. Regular lattices keep clustering high but suffer from long routes across the network, while purely random graphs slash path lengths but largely erase local structure.
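To make the two measurements concrete, here is a minimal sketch in plain Python that computes both on a small regular ring lattice. The function names (`ring_lattice`, `average_path_length`, `clustering_coefficient`) and the choice of 20 nodes with 4 neighbors each are illustrative assumptions, not something taken from the video.

```python
from collections import deque
from itertools import combinations

def ring_lattice(n, k):
    """Hypothetical helper: ring where each node links to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj

def average_path_length(adj):
    """Mean shortest-path hop count over all reachable ordered pairs (BFS from every node)."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1  # exclude the source itself
    return total / pairs

def clustering_coefficient(adj):
    """Average, over nodes, of the fraction of neighbour pairs that are themselves linked."""
    values = []
    for nbrs in adj.values():
        if len(nbrs) < 2:
            values.append(0.0)  # convention: no neighbour pairs means zero clustering
            continue
        linked = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        possible = len(nbrs) * (len(nbrs) - 1) / 2
        values.append(linked / possible)
    return sum(values) / len(values)

lattice = ring_lattice(20, 4)
print("average path length:", average_path_length(lattice))
print("clustering coefficient:", clustering_coefficient(lattice))
```

On this lattice, half of every node's neighbor pairs are linked, while reaching the far side of the ring takes several hops. This is the "high clustering, long paths" regime described above.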

The breakthrough model that bridges this gap is the Watts–Strogatz approach. Starting from a regular lattice, it rewires only a small fraction of connections, creating long-range shortcuts. That small change can preserve most of the original clustering while collapsing average path length toward random-graph levels. Networks that show this combined signature—simultaneously high clustering and short paths—are called small-world networks. The transcript also stresses a common confusion: “small-world” describes the property, while “Watts–Strogatz” is one specific method for generating it. Real brain networks often deviate from the simple rewiring story.
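The rewiring idea can be sketched in a few lines of plain Python. This is a simplified illustration of Watts–Strogatz-style rewiring under stated assumptions: the helper names, the graph size (100 nodes, 6 neighbors each), the rewiring probabilities, and the random seed are all chosen for demonstration, and path lengths are averaged only over pairs that remain reachable.

```python
import random
from collections import deque
from itertools import combinations

def ring_lattice(n, k):
    """Regular ring: every node links to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj

def watts_strogatz(n, k, p, seed=None):
    """Rewire each lattice edge with probability p to a uniformly chosen new endpoint."""
    rng = random.Random(seed)
    adj = ring_lattice(n, k)
    for step in range(1, k // 2 + 1):
        for i in range(n):
            if rng.random() >= p:
                continue  # keep this lattice edge as it is
            j = (i + step) % n
            # The new endpoint must not create a self-loop or a duplicate edge.
            candidates = [w for w in range(n) if w != i and w not in adj[i]]
            if not candidates:
                continue
            w = rng.choice(candidates)
            adj[i].remove(j)
            adj[j].remove(i)
            adj[i].add(w)
            adj[w].add(i)
    return adj

def average_path_length(adj):
    """Mean BFS hop count over all reachable ordered pairs."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

def clustering_coefficient(adj):
    """Average fraction of each node's neighbour pairs that are linked."""
    values = []
    for nbrs in adj.values():
        if len(nbrs) < 2:
            values.append(0.0)
            continue
        linked = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        values.append(linked / (len(nbrs) * (len(nbrs) - 1) / 2))
    return sum(values) / len(values)

# Compare the lattice (p=0), light rewiring, and full rewiring.
for p in (0.0, 0.05, 1.0):
    g = watts_strogatz(100, 6, p, seed=42)
    print(f"p={p}: path length={average_path_length(g):.2f}, "
          f"clustering={clustering_coefficient(g):.2f}")
```

Running the loop with a small `p` is a quick way to check the claim that a handful of shortcuts tends to shorten paths much more than it reduces clustering. The exact numbers depend on the seed and graph size.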

A major reason is the presence of hubs. In real neural connectivity, the distribution of node degrees is heavy-tailed rather than bell-shaped: a minority of nodes (neurons or brain regions) have far more connections than the rest. These hubs act as bridges between processing modules, enabling efficient global communication without sacrificing local specialization. Evidence is cited across scales, including the mapped connectome of the worm C. elegans and human brain regions such as the locus coeruleus, described as a major noradrenaline source that modulates arousal and attention across widespread circuitry.
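As a rough illustration of what a hub looks like in the degree distribution, the sketch below builds a toy graph of four fully connected modules plus one extra node wired to everything, then flags nodes whose degree is far above the median. The graph, the helper names, and the "more than twice the median" cutoff are all assumptions made for this example; they are not a standard definition of a hub and are not drawn from real connectome data.

```python
from collections import Counter
from statistics import median

def modular_graph_with_hub(modules=4, size=5):
    """Toy graph: fully connected modules plus one hub node linked to every other node."""
    adj = {}
    for m in range(modules):
        members = [m * size + i for i in range(size)]
        for u in members:
            adj[u] = {v for v in members if v != u}
    hub = modules * size  # the hub gets the next unused node id
    adj[hub] = set()
    for u in range(hub):
        adj[u].add(hub)
        adj[hub].add(u)
    return adj, hub

def find_hubs(adj, factor=2.0):
    """Flag nodes whose degree exceeds factor * median degree (arbitrary illustrative cutoff)."""
    degrees = {u: len(nbrs) for u, nbrs in adj.items()}
    cutoff = factor * median(degrees.values())
    return [u for u, d in degrees.items() if d > cutoff]

graph, hub_id = modular_graph_with_hub()
degree_histogram = Counter(len(nbrs) for nbrs in graph.values())
print("degree -> number of nodes:", dict(degree_histogram))
print("flagged hubs:", find_hubs(graph))
```

Most nodes share the same modest degree, while a single node sits far out in the tail. A real heavy-tailed distribution is more graded than this two-level toy, but the asymmetry is the same.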

Evolutionary pressure helps explain why this architecture keeps recurring. Brains need parallel local processing (specialized circuits for different features in vision, touch maps in somatosensory cortex, and distinct motor circuits), so dense local clustering supports modules that can operate side by side with minimal interference. At the same time, coherent perception and action require rapid cross-talk between distant modules, which short paths and hub-mediated shortcuts provide. This comes with a cost: long-range wiring consumes space and energy, so the brain must balance computational power against total wiring length.

Finally, small-world organization improves robustness. Dense local connectivity creates redundancy, so failure of individual neurons often has limited impact because alternative routes exist. Yet hubs are vulnerability points: damage to highly connected nodes can propagate widely, helping account for why certain disorders can produce diverse symptoms when key hub regions are affected. Overall, small-world networks are presented as a universal compromise—local specialization plus global integration, tuned to biological constraints and resilience.
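The contrast between random failures and hub damage can be sketched with a toy experiment: remove one node and measure the size of the largest group of nodes that can still reach each other. The graph here (four fully connected modules joined only through a single hub) and the helper names are hypothetical and deliberately extreme; real brain networks have many more redundant routes between modules.

```python
def modular_graph_with_hub(modules=4, size=5):
    """Toy graph: fully connected modules whose only inter-module links run through one hub."""
    adj = {}
    for m in range(modules):
        members = [m * size + i for i in range(size)]
        for u in members:
            adj[u] = {v for v in members if v != u}
    hub = modules * size
    adj[hub] = set()
    for u in range(hub):
        adj[u].add(hub)
        adj[hub].add(u)
    return adj, hub

def largest_component(adj, removed=frozenset()):
    """Size of the largest connected group after deleting the nodes in `removed`."""
    seen = set(removed)  # treating removed nodes as already visited skips them
    best = 0
    for start in adj:
        if start in seen:
            continue
        stack, size = [start], 0
        seen.add(start)
        while stack:
            u = stack.pop()
            size += 1
            for v in adj[u]:
                if v not in seen:
                    seen.add(v)
                    stack.append(v)
        best = max(best, size)
    return best

graph, hub_id = modular_graph_with_hub()
print("intact network:", largest_component(graph))
print("one ordinary node removed:", largest_component(graph, {0}))
print("hub removed:", largest_component(graph, {hub_id}))
```

Losing an ordinary node barely changes the connected core because its module neighbors remain linked, whereas losing the hub splits this toy network into isolated modules.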

Cornell Notes

Small-world networks combine two features that are hard to achieve at once: high local clustering (neighbors of a node are often connected to each other) and short global path lengths (few steps are needed to reach distant nodes). The Watts–Strogatz model shows how this balance can emerge by rewiring only a small fraction of edges in an otherwise regular lattice, adding long-range shortcuts without destroying local structure. Real brain networks often go beyond this simple model because they include hubs—nodes with unusually high degree—creating a heavy-tailed connectivity pattern rather than a uniform one. This architecture supports specialized local processing, rapid integration across distant regions, and robustness to random failures, while concentrating risk around hub damage.

What do “average path length” and “clustering coefficient” measure, and why do they matter for brain-like systems?

How does the Watts–Strogatz model create small-world behavior without fully randomizing the network?

Why is “small-world” not the same thing as “Watts–Strogatz”?

What are hubs, and how do they change the connectivity picture compared with a simple small-world model?

How does small-world architecture support both specialized processing and fast integration in the brain?

Why does small-world connectivity improve robustness, and why can hub damage be especially harmful?

Review Questions

- How would average path length and clustering coefficient change when moving from a regular lattice to a Watts–Strogatz rewired network?

- What evidence in the transcript supports the claim that real brain networks include hubs rather than matching a uniform degree pattern?

- Why does wiring cost limit the brain from connecting every node to every other node, even if that would maximize communication efficiency?

Key Points

1. Small-world networks achieve efficient global communication without sacrificing local specialization by combining short average path lengths with high clustering.

2. The Watts–Strogatz model produces small-world behavior by rewiring only a small fraction of edges in a regular lattice, creating long-range shortcuts.

3. “Small-worldness” describes the network property, while “Watts–Strogatz” is one specific method for generating it; real brain networks often require additional features.

4. Real neural connectivity tends to show heavy-tailed degree distributions, meaning a minority of hubs provide major bridging roles between modules.

5. Small-world organization supports parallel local processing (dense modules) and rapid cross-module integration (short paths and hub shortcuts).

6. Long-range wiring has real biological costs (space and energy), so evolution favors architectures that balance performance with wiring constraints.

7. Dense local connectivity can make networks robust to random failures, but hub damage can cause widespread disruption.