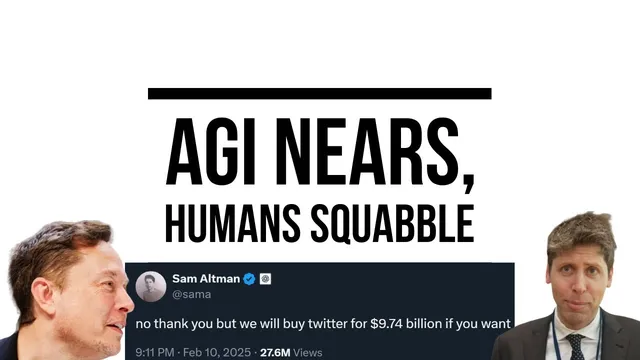

AGI: (gets close), Humans: ‘Who Gets to Own it?’

Based on AI Explained's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Control of AGI—and the wealth it generates—is framed as the dominant near-term conflict, with legal and corporate battles already underway around OpenAI’s nonprofit structure.

Briefing

The central fight emerging alongside rapid progress toward AGI isn’t technical—it’s control of the systems and the wealth they generate. As AI capabilities rise, competing visions clash over who gets to own the models, who benefits from automation, and how governments (and rival labs) respond when labor’s bargaining power erodes. That power struggle is already playing out in public: Elon Musk and allies have challenged Sam Altman and Microsoft for control of OpenAI’s nonprofit structure, while OpenAI’s leadership frames such moves as weakening tactics. The stakes are framed as enormous—Sam Altman has floated scenarios where AGI could capture and redistribute wealth on the scale of trillions—yet the practical mechanism for “benefiting all humanity” remains unclear, especially if most human labor becomes redundant.

On the technical side, the gap to “human-level” problem solving is narrowing and becoming more measurable. The discussion leans on Sam Altman’s shifting definition of AGI: a system that can tackle increasingly complex problems across many fields at human level. Under that lens, coding and research-like tasks look closer to reach than imitation alone would suggest. Coding benchmarks are cited to show that models do more than rank among top human competitors: they improve through reinforcement-learning-driven exploration, trying approaches and learning from outcomes rather than merely copying top solutions. Medical-style reasoning is also used as an example: OpenAI’s Deep Research is described as producing plausible diagnoses that a clinician might not have anticipated, although the discussion acknowledges that it hallucinates frequently.

A key claim is that the “data wall” may not be the limiting factor. Instead, tasks can be expanded indefinitely through post-training and reinforcement learning, including web search, operating a computer, and completing real-world workflows. If tasks are verifiable—like code outputs or spreadsheet actions—reinforcement learning can turn them into training signals. That shifts attention to another bottleneck: evaluation. Even if models can be trained on vast task sets, measuring progress reliably and safely becomes the gating item.
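The idea that a verifiable task can supply its own training signal can be illustrated with a minimal sketch. The function `verifiable_reward`, the toy task, and the two candidate attempts below are hypothetical names invented for illustration; they are not taken from the video or from any lab's training code. The sketch only shows how checking an output against known answers yields a numeric reward that a reinforcement-learning loop could, in principle, optimize.

```python
from typing import Callable, List, Tuple

# Hypothetical test cases for a toy task: (input, expected output) pairs.
TestCase = Tuple[int, int]


def verifiable_reward(candidate: Callable[[int], int], tests: List[TestCase]) -> float:
    """Return the fraction of test cases the candidate passes (0.0 to 1.0)."""
    if not tests:
        raise ValueError("at least one test case is required")
    passed = 0
    for arg, expected in tests:
        try:
            if candidate(arg) == expected:
                passed += 1
        except Exception:
            # A crashing attempt earns no credit for that case.
            pass
    return passed / len(tests)


# Toy task (an assumption for illustration): "square the input".
TESTS: List[TestCase] = [(0, 0), (2, 4), (-3, 9)]


def attempt_doubling(x: int) -> int:
    return x * 2  # incorrect attempt that happens to pass some cases


def attempt_squaring(x: int) -> int:
    return x * x  # correct attempt


reward_doubling = verifiable_reward(attempt_doubling, TESTS)
reward_squaring = verifiable_reward(attempt_squaring, TESTS)
print(f"doubling: {reward_doubling:.2f}, squaring: {reward_squaring:.2f}")
```

The incorrect attempt still earns partial reward, which hints at why evaluation matters: a weak or small test set can reward behavior that only looks right.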

Investment dynamics also enter the picture. Scaling laws are summarized as intelligence improving roughly with the logarithm of the compute resources used, while the socioeconomic value of each incremental gain in intelligence is argued to be super-exponential. If returns keep compounding, the incentive to keep spending on compute and training doesn’t naturally stop. That logic underpins why labs and governments may race rather than pause.
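A small numeric sketch can make the shape of this argument concrete. The functional forms below are assumptions chosen purely for illustration: intelligence is modeled as the base-10 logarithm of compute, and value as `exp(I**2)`, which is one simple function that grows faster than any plain exponential. Neither formula comes from the video; the point is only that logarithmic gains in capability can coexist with rapidly growing payoffs per step.

```python
import math


def intelligence(compute: float) -> float:
    """Assumed form: capability grows with the logarithm of compute."""
    return math.log10(compute)


def value(intel: float) -> float:
    """Assumed super-exponential form: exp(I^2) outgrows exp(k * I) for any k."""
    return math.exp(intel ** 2)


# Each step multiplies compute by 10, adding one unit of "intelligence".
computes = [10.0 ** k for k in range(1, 6)]
levels = [intelligence(c) for c in computes]
values = [value(level) for level in levels]

# Ratio of value between consecutive steps: how much each extra unit pays off.
gains = [values[k + 1] / values[k] for k in range(len(values) - 1)]

for c, level, v in zip(computes, levels, values):
    print(f"compute={c:>8.0f}  intelligence={level:.1f}  value={v:.3e}")
print("step-over-step value multipliers:", [f"{g:.1f}" for g in gains])
```

Under these assumed forms, every tenfold increase in compute buys the same one-unit capability gain, yet the value multiplier grows at each step, which is the pattern the investment argument relies on.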

The transcript then widens to geopolitical and institutional risk. RAND’s concerns are highlighted around authoritarian surveillance, loss of autonomy, and “wonder weapons,” alongside the uncomfortable question of what a decisive AI advantage would actually be used for: economic disruption or outright dominance. There’s also a warning that control may not stay with states or companies, because cheaper “frontier-like” reasoning could emerge from small models using clever inference-time scaling. A Stanford-linked approach is described as achieving strong performance on hard math and science question sets using a test-time scaling method: whenever the model tries to stop, a “Wait” token is appended so that it keeps reasoning. This is combined with careful filtering and diversity selection of a small training set.
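The mechanism of forcing a model to keep reasoning can be sketched in a few lines. Everything here is a hypothetical stand-in: `toy_model` is not a real language model, the stop marker `</think>` is an assumed placeholder, and the continuation word is assumed to be the literal string "Wait". The sketch shows only the control loop: an early stop attempt is replaced by the continuation word until a minimum reasoning budget has been spent.

```python
from typing import Callable, List

END_OF_THINKING = "</think>"  # assumed stop marker, for illustration only
WAIT = "Wait"                 # assumed continuation word


def budget_forced_reasoning(
    step: Callable[[List[str]], str], min_tokens: int, max_tokens: int
) -> List[str]:
    """Collect reasoning tokens, replacing early stop attempts with WAIT."""
    trace: List[str] = []
    while len(trace) < max_tokens:
        token = step(trace)
        if token == END_OF_THINKING:
            if len(trace) >= min_tokens:
                break  # budget spent, so the stop is allowed
            trace.append(WAIT)  # suppress the stop and nudge the model onward
        else:
            trace.append(token)
    return trace


def toy_model(trace: List[str]) -> str:
    """Stand-in model: tries to stop after three tokens since the last WAIT."""
    since_wait = 0
    for tok in reversed(trace):
        if tok == WAIT:
            break
        since_wait += 1
    return END_OF_THINKING if since_wait >= 3 else f"step{len(trace)}"


unforced = budget_forced_reasoning(toy_model, min_tokens=0, max_tokens=20)
forced = budget_forced_reasoning(toy_model, min_tokens=8, max_tokens=20)
print("unforced:", unforced)
print("forced:  ", forced)
```

With a real model, each extra segment after the continuation word costs inference compute, which is the trade the test-time scaling argument describes: more computation at answer time in exchange for better reasoning from a small model.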

Taken together, the message is that AGI progress is accelerating on multiple fronts, but the more urgent question is governance: how to prevent a world where automation concentrates power, destabilizes societies, and turns AI advantage into economic and political leverage—possibly faster than institutions can respond.

Cornell Notes

AGI progress is advancing through reinforcement learning on increasingly complex, verifiable tasks, while the bigger crisis is control: who owns the systems and who benefits from automation. The discussion ties “human-level” capability to measurable domains like coding and research-style reasoning, arguing that the limiting factor may be evaluation rather than data. Scaling laws are presented as implying that investment incentives won’t naturally slow down, since each incremental intelligence gain can yield disproportionately large value. Governance concerns then shift to labor displacement, wealth redistribution, and geopolitical competition, including fears that AI advantage could be used to weaken rivals’ economies. Finally, a Stanford-linked method suggests that strong reasoning can be achieved with relatively small models by spending more compute at inference time (“test-time scaling”).

Why does the transcript treat “evaluation” as a likely bottleneck even if training tasks can expand indefinitely?

What does “test-time scaling” mean in the Stanford-linked approach, and why does it matter?

How does the transcript connect scaling laws to investment incentives?

What governance conflict is highlighted around OpenAI’s nonprofit structure?

Why does the transcript argue that “benefits all humanity” is hard to guarantee?

How does the transcript use benchmark examples to support the claim that systems are moving beyond imitation?

Review Questions

- Which bottleneck does the transcript claim becomes more important once models saturate many benchmarks, and what evidence is used to support that claim?

- Explain how “test-time scaling” differs from traditional training-time scaling, and what mechanism forces the model to keep reasoning.

- What redistribution and governance problems arise when most labor becomes redundant, and how does the transcript connect those problems to geopolitical competition?

Key Points

1. Control of AGI, and the wealth it generates, is framed as the dominant near-term conflict, with legal and corporate battles already underway around OpenAI’s nonprofit structure.

2. Reinforcement learning on verifiable tasks is presented as a major driver of capability gains, potentially reducing the importance of a “data wall.”

3. As benchmark performance saturates, evaluation quality becomes a central bottleneck for determining whether models are truly improving general reasoning.

4. Scaling laws imply strong incentives to keep investing in compute and training because each incremental intelligence gain may deliver disproportionately large value.

5. Governance risks extend beyond labor displacement to geopolitical competition, including fears that AI advantage could be used to automate and weaken rivals’ economies.

6. Inference-time compute (“test-time scaling”) is highlighted as a way to boost reasoning performance, even for smaller models, by forcing longer generation when the model attempts to stop.

7. The transcript repeatedly returns to a gap between mission statements (“benefit all humanity”) and the practical challenge of redistribution across nations and under rapid labor redundancy.