Bell's Theorem: The Quantum Venn Diagram Paradox

Based on minutephysics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Bell’s theorem turns a “quantum Venn diagram” counting puzzle into a hard constraint on any theory that treats measurement outcomes as pre-written and forbids faster-than-light influence. Start with polarized light and ideal polarizing filters: photons pass through with probabilities determined only by the angle between filters. Classical intuition suggests those probabilities should reflect hidden properties the photon carries—so that even before a filter is inserted, each photon already “knows” whether it will pass.

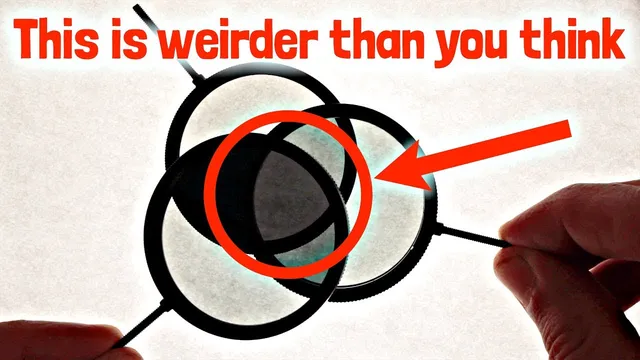

The sunglasses-style setup makes the paradox concrete. With two filters, experiments show near-certain transmission at 0° (same orientation), essentially zero transmission at 90° (perpendicular), and a 50/50 split at 45°. The strange part appears at intermediate angles: at 22.5°, a photon that passes the first filter has about an 85% chance of passing the second. Now arrange three filters in sequence: A at 0°, B at 22.5°, and C at 45°. If B is absent, C blocks about half of the photons that made it through A, so the “blocked at C” fraction is 50%. With B present, only about 15% of the photons from A are blocked at B, and only about 15% of those that pass B are blocked at C. Fewer than 30% of the photons from A are blocked in total, so inserting B lets more light through C, not less. Under the assumption that each photon has definite answers to whether it would pass each filter, the same photon population cannot satisfy both the “B absent” and “B present” statistics.
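The percentages quoted above can be reproduced with a short calculation. This is a minimal sketch, assuming ideal filters and the standard cos² rule for polarized light (Malus's law), which the text does not name explicitly. The function names `pass_probability` and `chain_transmission` are hypothetical helpers introduced for illustration.

```python
import math

def pass_probability(angle_deg: float) -> float:
    """Chance that a photon which passed one ideal filter also passes a
    second filter rotated by angle_deg (assumed cos^2 rule)."""
    return math.cos(math.radians(angle_deg)) ** 2

def chain_transmission(angles_deg) -> float:
    """Fraction of photons that passed the first filter in the list and
    also pass every later filter, applying the cos^2 rule step by step."""
    fraction = 1.0
    for previous, current in zip(angles_deg, angles_deg[1:]):
        fraction *= pass_probability(current - previous)
    return fraction

# Two-filter probabilities at the angles discussed in the text.
for angle in (0, 22.5, 45, 90):
    print(f"{angle:>5} deg: {pass_probability(angle):.3f}")

# A -> C directly, versus A -> B -> C with B inserted in between.
without_b = chain_transmission([0, 45])
with_b = chain_transmission([0, 22.5, 45])
print(f"through C without B: {without_b:.3f}")
print(f"through C with B:    {with_b:.3f}")
```

Under these assumptions, the fraction reaching the far side of C rises from about one half to roughly 73% once B is inserted, which is the counterintuitive effect the three-filter demonstration relies on.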

To formalize the contradiction, the argument assumes each photon carries hidden variables that predetermine outcomes for three yes/no questions: pass A, pass B, pass C. Those predetermined answers can be represented with overlapping regions in a Venn diagram. The measured 85% and 15% conditional probabilities force tight limits on the sizes of the regions corresponding to “passes A but not B,” “passes B but not C,” and “passes A and blocked at C.” Yet the experiments demand that when B is not measured, half the photons passing A are blocked at C—an outcome that cannot fit inside the region sizes implied by the “B measured” data. This is the counting contradiction: the geometry of definite outcomes can’t reproduce the observed probabilities.
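The counting argument can be checked by brute force. The sketch below assumes each photon carries one of eight possible answer sheets (pass or fail for A, B, and C) and verifies that every sheet obeys the rule "A but not C" ≤ "A but not B" + "B but not C". Because the rule holds for each sheet, it holds for any mixture of them. The encoding and the names `PHOTON_TYPES`, `region_membership`, and `QUOTED` are hypothetical, and the quoted figures are the text's rounded values treated as fractions of one photon population, which is a simplifying assumption.

```python
from itertools import product

# Hypothetical encoding: a photon "type" is a triple (a, b, c) of booleans,
# where True means the photon would pass that filter if it were measured.
PHOTON_TYPES = list(product([True, False], repeat=3))

def region_membership(a, b, c):
    """Return 0/1 flags for the three Venn regions used in the argument."""
    return {
        "A_not_C": int(a and not c),
        "A_not_B": int(a and not b),
        "B_not_C": int(b and not c),
    }

def inequality_holds_for_every_type() -> bool:
    """Check N(A, not C) <= N(A, not B) + N(B, not C) type by type."""
    for photon_type in PHOTON_TYPES:
        flags = region_membership(*photon_type)
        if flags["A_not_C"] > flags["A_not_B"] + flags["B_not_C"]:
            return False
    return True

# Rounded figures quoted in the text (assumption: all three are read as
# fractions of the same population of photons).
QUOTED = {"A_not_C": 0.50, "A_not_B": 0.15, "B_not_C": 0.15}

def quoted_figures_fit_hidden_variables() -> bool:
    """True only if the quoted data could come from some mixture of types."""
    return QUOTED["A_not_C"] <= QUOTED["A_not_B"] + QUOTED["B_not_C"]

print(inequality_holds_for_every_type())      # True
print(quoted_figures_fit_hidden_variables())  # False
```

The first result says no assignment of definite answers can break the rule; the second says the measured figures break it anyway, which is the contradiction the Venn diagram makes visible.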

A loophole remains if the photon’s interaction with one filter could change how it interacts with later filters. The entanglement version closes that escape hatch by arranging the measurements so that no ordinary signal can coordinate them. Two entangled photons are sent to distant locations. At each location, a polarizing filter is set randomly to one of the same three orientations (A, B, C). Entanglement ensures strong correlations: when both sites use the same axis, the photons behave in lockstep (both pass or both get blocked). Crucially, the same numerical relationships reappear in the joint data: the outcomes disagree about 15% of the time when the two settings differ by 22.5° (A with B, or B with C), and about 50% of the time when one site uses A and the other uses C.
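The joint statistics of the entangled experiment can be sketched as a small simulation. It assumes the standard quantum prediction for a polarization-entangled pair that always agrees on a shared axis: the two outcomes differ with probability sin² of the angle between the settings. That rule is not spelled out in the text, and the names `ANGLES_DEG`, `mismatch_probability`, and `simulate` are hypothetical. The code samples outcomes directly from the predicted joint distribution; it does not model any local mechanism inside the photons.

```python
import math
import random
from collections import defaultdict

# Filter orientations from the text, in degrees.
ANGLES_DEG = {"A": 0.0, "B": 22.5, "C": 45.0}

def mismatch_probability(setting_1: str, setting_2: str) -> float:
    """Assumed quantum prediction: chance that the two sites disagree."""
    delta = math.radians(ANGLES_DEG[setting_1] - ANGLES_DEG[setting_2])
    return math.sin(delta) ** 2

def simulate(trials: int, seed: int = 0) -> dict:
    """Estimate the mismatch rate for every pair of randomly chosen settings."""
    rng = random.Random(seed)
    totals = defaultdict(int)
    mismatches = defaultdict(int)
    for _ in range(trials):
        s1 = rng.choice("ABC")            # random setting at site 1
        s2 = rng.choice("ABC")            # random setting at site 2
        first_passes = rng.random() < 0.5  # each site alone looks 50/50
        differs = rng.random() < mismatch_probability(s1, s2)
        second_passes = first_passes != differs
        totals[(s1, s2)] += 1
        mismatches[(s1, s2)] += first_passes != second_passes
    return {pair: mismatches[pair] / totals[pair] for pair in totals}

rates = simulate(200_000)
for pair in sorted(rates):
    print(pair, f"{rates[pair]:.3f}")
```

With these assumptions, matching settings never disagree, settings 22.5° apart disagree roughly 15% of the time, and the A and C pairing disagrees about half the time, matching the figures quoted above.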

The entangled counting contradiction implies that no theory can keep both “realism” (definite outcomes exist even when unmeasured) and “locality” (no faster-than-light influence) at the same time. Bell’s theorem packages this into Bell inequalities—simple counting rules that any locally realistic hidden-variable model must satisfy. Quantum mechanics predicts violations, and experiments have increasingly confirmed them. The first loophole-free test came in 2015, after years of technical improvements. The upshot is stark: the universe cannot be locally real in the Bell sense, even though the core logic is just probability bookkeeping made impossible by entanglement.

Cornell Notes

Polarizing filters turn photon transmission into angle-dependent probabilities: 0° gives near-certain passage, 90° gives essentially zero, 45° gives 50/50, and 22.5° yields about an 85% conditional chance. Using three filters (A at 0°, B at 22.5°, C at 45°), the observed “blocked at C” fraction is about 50% when B is absent but far smaller when B is inserted. That contradicts any hidden-variable model in which each photon already has definite pass/fail answers for A, B, and C. The contradiction can be avoided only if interacting with one filter affects a photon's outcomes at the others. Entanglement-based experiments remove that loophole by making the relevant measurements spacelike separated, and they reproduce the same numerical contradiction. Therefore, either realism or locality (or both) must fail, as captured by Bell inequalities.

Why does the three-filter setup create a contradiction for hidden-variable theories?

What role does the Venn diagram play in the Bell-style argument?

How does the “interaction loophole” undermine the first (single-photon) contradiction?

How do entangled photons close the interaction loophole?

What do “realism” and “locality” mean in this context?

What are Bell inequalities, and why do they matter?

Review Questions

- In the three-filter arrangement (A at 0°, B at 22.5°, C at 45°), which measured probabilities force the Venn-diagram counting contradiction, and why can’t a single fixed assignment of pass/fail outcomes satisfy them?

- What specific assumption about how filter interactions work does the entanglement experiment target, and how does spacelike separation make that loophole implausible?

- How do the definitions of realism and locality map onto the conclusion of Bell’s theorem, and what does the entangled data imply about at least one of them?

Key Points

1. Polarizing filters convert photon polarization into probabilistic transmission outcomes that depend on the angle between filter axes.

2. At 22.5°, a photon that passes one filter has about an 85% chance of passing a second filter at that relative angle, which drives the later counting contradiction.

3. With three filters (A, B, C at 0°, 22.5°, 45°), the observed “blocked at C” fraction is about 50% when B is absent but much smaller when B is inserted.

4. A hidden-variable model that assigns each photon definite pass/fail answers for A, B, and C cannot reproduce all the required conditional probabilities simultaneously.

5. The interaction loophole, in which passing one filter could change later behavior, can be challenged by using entangled photons measured at distant locations at the same time.

6. Entanglement-based tests imply that either realism or locality (or both) must fail, as formalized by Bell inequalities.

7. Loophole-free experimental confirmation arrived in 2015, after years of tightening source and detector conditions to rule out alternative explanations.