Brain Criticality - Optimizing Neural Computations

Based on Artem Kirsanov's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

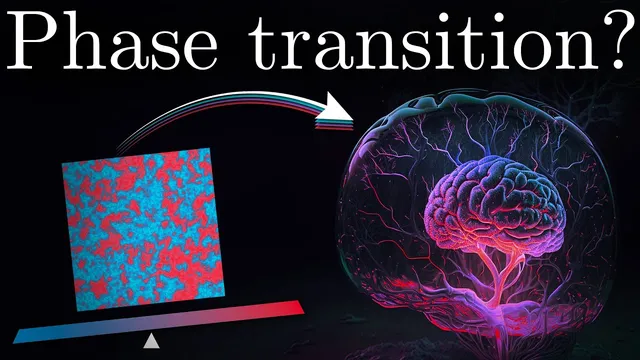

The core claim behind “brain criticality” is that neural networks operate near a second-order phase transition—an edge-of-instability regime where activity becomes scale-free and correlations spread unusually far. That matters because the same critical tuning is predicted to maximize how effectively the brain transmits information, balancing responsiveness to inputs against runaway excitation.

The explanation starts with phase transitions in physics. In everyday first-order transitions such as boiling, a system jumps between phases: the order parameter (for water, the density) changes discontinuously, and latent heat is absorbed or released. In second-order transitions, the order parameter changes continuously, and the system develops a special "critical point" where behavior intermediate between the two phases emerges. For water, this is the end of the liquid–gas coexistence line: at the critical point latent heat vanishes, and beyond it liquid and gas can no longer be told apart, leaving a single supercritical fluid. At the critical point itself, the system shows properties that don't depend on a single characteristic scale.

To make the idea concrete, the transcript uses the Ising model, a lattice of spins that can be +1 or −1. Neighboring spins prefer to align (captured by an interaction energy proportional to the negative product of spins), while temperature adds stochastic fluctuations via the Boltzmann distribution. At low temperature, alignment dominates and magnetization is high; at high temperature, randomness dominates and magnetization collapses. Near the critical temperature, neither tendency wins outright. The result is a peak in dynamic correlation: spins fluctuate in coordinated ways, and the correlation length grows sharply, meaning distant parts of the system move together more than they do away from criticality.
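The temperature sweep described above can be sketched numerically. This is a minimal sketch under assumptions not taken from the transcript: a 2D square lattice with periodic boundaries, coupling J = 1, Boltzmann constant k_B = 1, energy E = -J Σ s_i s_j over neighboring pairs, and single-spin Metropolis updates (one standard way to sample the Boltzmann distribution). The function names, lattice size, and sweep counts are illustrative choices. It reports the mean absolute magnetization and the susceptibility, which sums spin–spin correlations and therefore peaks where the correlation length is largest. For the infinite 2D lattice the exact critical temperature in these units is T_c = 2/ln(1 + √2) ≈ 2.269.

```python
import numpy as np

def metropolis_sweep(spins, beta, rng):
    """One Metropolis sweep: attempt N single-spin flips (J = 1, k_B = 1)."""
    L = spins.shape[0]
    for _ in range(spins.size):
        i, j = rng.integers(0, L, size=2)
        # Sum of the four nearest neighbours, with periodic boundaries.
        neighbours = (spins[(i + 1) % L, j] + spins[(i - 1) % L, j]
                      + spins[i, (j + 1) % L] + spins[i, (j - 1) % L])
        # Energy change if spin (i, j) flips: dE = 2 * s_ij * sum(neighbours).
        dE = 2 * spins[i, j] * neighbours
        # Accept with the Boltzmann probability min(1, exp(-beta * dE)).
        if dE <= 0 or rng.random() < np.exp(-beta * dE):
            spins[i, j] = -spins[i, j]

def ising_observables(T, L=16, sweeps=400, burn_in=200, seed=0):
    """Return (mean |m|, susceptibility) at temperature T (illustrative settings)."""
    rng = np.random.default_rng(seed)
    spins = np.ones((L, L), dtype=int)  # start fully aligned
    mags = []
    for sweep in range(sweeps):
        metropolis_sweep(spins, 1.0 / T, rng)
        if sweep >= burn_in:  # discard equilibration sweeps
            mags.append(abs(spins.mean()))
    mags = np.array(mags)
    # Fluctuations of |m| scaled by N / T: large when many spins move together.
    chi = spins.size * (np.mean(mags**2) - np.mean(mags) ** 2) / T
    return float(mags.mean()), float(chi)

for T in (1.5, 2.3, 5.0):
    m, chi = ising_observables(T)
    print(f"T={T}: |m|={m:.2f}, chi={chi:.2f}")
```

With these settings, |m| should stay near 1 at T = 1.5 and fall to small values at T = 5.0, while the susceptibility should be largest at T = 2.3, close to T_c. On a 16×16 lattice the peak is rounded and shifted by finite-size effects, and Metropolis updates slow down near T_c, so longer runs give smoother curves.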

Criticality also produces scale-free structure. Snapshots of the Ising lattice look statistically similar across zoom levels, and cluster-size statistics follow a power law rather than an exponential. In the transcript’s example, the probability of observing a cluster of size x versus 2x changes by a constant factor independent of x, which is the hallmark of scale invariance. In log-log coordinates, power laws appear as straight lines, and many critical observables share this kind of scaling.
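The ratio argument above can be checked with a few lines of arithmetic. This sketch assumes an unnormalized power law P(x) ∝ x^(-α) with an illustrative exponent α = 1.5 and, for contrast, an exponential P(x) ∝ exp(-x/s) with a hypothetical scale s = 10. For the power law, P(2x)/P(x) = 2^(-α) for every x; for the exponential, the same ratio equals exp(-x/s) and therefore depends on x. The least-squares slope of log P against log x recovers -α, which is the straight line seen in log-log plots.

```python
import math

ALPHA = 1.5   # illustrative power-law exponent (assumption)
SCALE = 10.0  # illustrative exponential scale (assumption)

def power_law(x, alpha=ALPHA):
    """Unnormalized power law: P(x) proportional to x**(-alpha)."""
    return x ** (-alpha)

def exponential(x, scale=SCALE):
    """Unnormalized exponential: P(x) proportional to exp(-x / scale)."""
    return math.exp(-x / scale)

def loglog_slope(xs, ys):
    """Least-squares slope of log(y) against log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    num = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    den = sum((a - mx) ** 2 for a in lx)
    return num / den

for x in (1, 10, 100):
    # Power-law ratio is constant; exponential ratio shrinks as x grows.
    print(x, power_law(2 * x) / power_law(x), exponential(2 * x) / exponential(x))

xs = [1, 2, 4, 8, 16, 32, 64]
print(loglog_slope(xs, [power_law(x) for x in xs]))  # approximately -1.5
```

The power-law column stays at 2^(-1.5) ≈ 0.354 for every x, while the exponential column falls from about 0.90 at x = 1 to about 4.5e-5 at x = 100.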

The neuroscience bridge comes from experiments on “neuronal avalanches.” In 2003, John Beggs and Dietmar Plenz recorded spontaneous activity in cultured slices of rat somatosensory cortex with an 8×8 electrode grid and found that it appears in cascades separated by quiescent periods. Each avalanche is characterized by its duration and its size (the number of electrodes whose signal crosses a threshold, or in some analyses the summed signal amplitude). Both distributions follow power laws, implying no characteristic scale and suggesting proximity to a second-order phase transition. Similar power-law avalanche behavior has since been reported across species and recording modalities, from single neurons to EEG.
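The avalanche definition above can be sketched as a short scan over binned data. This minimal sketch assumes the recording has already been thresholded and binned into a binary raster (one row per time bin, one column per electrode; 1 means the electrode crossed threshold in that bin). An avalanche is taken to be a run of consecutive non-empty bins bounded by empty bins; its duration is the number of bins and its size the number of threshold crossings. The function name `find_avalanches` and the choice to keep an avalanche still in progress when the recording ends are assumptions. Real analyses also depend on the bin width, which this sketch leaves to the caller.

```python
from typing import List, Tuple

def find_avalanches(raster: List[List[int]]) -> List[Tuple[int, int]]:
    """Return (size, duration) for each avalanche in a binary raster.

    raster[t][e] == 1 if electrode e crossed threshold in time bin t.
    """
    avalanches = []
    size = duration = 0
    for frame in raster:
        active = sum(frame)  # threshold crossings in this bin
        if active:
            size += active
            duration += 1
        elif duration:
            # An empty bin closes the current avalanche.
            avalanches.append((size, duration))
            size = duration = 0
    if duration:
        # Keep an avalanche that is still running when the recording ends.
        avalanches.append((size, duration))
    return avalanches

raster = [
    [0, 0, 0],
    [1, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
    [0, 1, 1],
    [0, 0, 0],
]
print(find_avalanches(raster))  # [(3, 2), (2, 1)]
```

Collecting the sizes and durations over a long recording and plotting their histograms on log-log axes is what reveals (or rules out) the power-law shape.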

To map physics concepts onto neural dynamics, the transcript reframes the system as a branching process: each active neuron probabilistically activates downstream neurons, and every neuron also has a small chance of activating spontaneously. A single control parameter, the branching ratio σ, determines the regime. σ is the sum of a neuron's outgoing transmission probabilities, which equals the average number of descendants per active ancestor. For σ < 1, activity dies out; for σ > 1, activity amplifies and can resemble epileptiform runaway; at σ = 1, activity neither decays nor explodes on average, and avalanche sizes and durations follow power-law distributions. In real brains, σ is shaped by the balance of excitation and inhibition: pharmacologically blocking inhibition pushes the dynamics away from the normal power-law pattern toward supercritical behavior, while blocking excitation pushes them toward subcritical behavior.
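The three regimes can be sketched with a Galton–Watson style branching process. Assumptions not taken from the transcript: every neuron has k = 4 downstream targets (a hypothetical choice), each activated independently with probability σ/k so that the mean number of descendants is σ, and spontaneous activation is left out. Each avalanche starts from one active neuron and ends when no neuron is active; runs that reach a size cap are counted as runaway. For σ < 1, standard branching-process theory gives a mean avalanche size of 1/(1 - σ); at σ = 1 every avalanche still dies out eventually, but sizes are heavy-tailed; for σ > 1 a finite fraction never dies out.

```python
import numpy as np

def simulate_avalanches(sigma, n_trials=10_000, k=4, max_size=1_000, seed=0):
    """Return avalanche sizes from a branching process with ratio sigma.

    Each active neuron has k targets, each activated with probability sigma / k,
    so the mean number of descendants per active neuron is sigma.
    Sizes are capped at max_size (treated as runaway activity).
    """
    if not 0 <= sigma <= k:
        raise ValueError("sigma must lie in [0, k]")
    rng = np.random.default_rng(seed)
    p = sigma / k
    sizes = np.empty(n_trials, dtype=int)
    for trial in range(n_trials):
        active, size = 1, 1  # one seed neuron starts the avalanche
        while active and size < max_size:
            # All active neurons together make active * k independent attempts.
            active = rng.binomial(active * k, p)
            size += active
        sizes[trial] = min(size, max_size)
    return sizes

for sigma in (0.5, 1.0, 1.5):
    s = simulate_avalanches(sigma)
    print(f"sigma={sigma}: mean size={s.mean():.1f}, runaway={np.mean(s >= 1_000):.2f}")
```

In the printed rows, σ = 0.5 should give a mean size near 1/(1 - 0.5) = 2, σ = 1 a small runaway fraction (the heavy tail reaching the cap), and σ = 1.5 a majority of runs hitting the cap.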

Finally, the transcript argues that critical tuning improves information transmission. In a simplified “guessing game,” where the task is to infer the input activity from the output activity, the output is uninformative at both extremes: in the subcritical regime activity dies out before it reaches the output, and in the supercritical regime the output saturates regardless of the input. At σ = 1, output activity most reliably reflects input activity, producing a peak in an information-transmission measure analogous to the correlation peak at the Ising critical temperature. The overall picture is that hovering near criticality may be an evolved strategy to maximize computational capability while avoiding both silence and runaway excitation.
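A toy version of the guessing game can make the two failure modes concrete. The setup is an assumption, not the transcript's model: an input layer of n = 10 neurons, of which m (drawn uniformly from 0 to n) are active, projects to an output layer of n neurons; every input–output connection transmits independently with probability σ/n, so an active input acting alone would activate σ output neurons on average. An output neuron fires if at least one connection onto it transmits. The hypothetical function `mutual_information` computes exactly the mutual information, in bits, between the number of active inputs and the number of active outputs. Because it is a single small layer, it only illustrates that information collapses at both extremes; it is too shallow to reproduce a peak located exactly at σ = 1.

```python
import math

def mutual_information(sigma, n=10):
    """Mutual information (bits) between active-input count M and active-output count K.

    M is uniform on {0, ..., n}; each input-output connection transmits
    independently with probability p = min(sigma / n, 1).
    """
    p = min(sigma / n, 1.0)
    p_m = 1.0 / (n + 1)
    # P(K = k | M = m): an output fires unless all m active inputs fail to reach it.
    cond = []
    for m in range(n + 1):
        q = 1.0 - (1.0 - p) ** m
        cond.append([math.comb(n, k) * q**k * (1.0 - q) ** (n - k) for k in range(n + 1)])
    # Marginal distribution of the output count.
    p_k = [sum(p_m * cond[m][k] for m in range(n + 1)) for k in range(n + 1)]
    mi = 0.0
    for m in range(n + 1):
        for k in range(n + 1):
            if cond[m][k] > 0:
                mi += p_m * cond[m][k] * math.log2(cond[m][k] / p_k[k])
    return mi

for sigma in (0.1, 0.5, 1.0, 2.0, 10.0):
    print(f"sigma={sigma}: I(M;K)={mutual_information(sigma):.3f} bits")
```

At σ = 0.1 the output is almost always silent, and at σ = 10 every nonzero input saturates the whole output layer, so only "some input versus none" survives (about 0.44 bits here); intermediate values of σ transmit noticeably more.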

Cornell Notes

Neural criticality proposes that brain networks operate near a second-order phase transition, where activity becomes scale-free and correlations spread over long distances. Physics intuition comes from the Ising model: temperature drives a continuous transition from ordered alignment to disordered randomness, with a critical point where dynamic correlation peaks and correlation length grows. In neuroscience, “neuronal avalanches” show power-law distributions in size and duration, consistent with scale invariance and proximity to a critical regime. A branching model captures the same idea using a control parameter—the branching ratio σ—where σ < 1 leads to dying activity, σ > 1 leads to runaway amplification, and σ = 1 yields power-law avalanches and maximal information transmission. The balance of excitation and inhibition is presented as the mechanism that tunes σ toward this critical point.

What distinguishes a second-order (continuous) phase transition from a first-order (discontinuous) one, and why does the “critical point” matter?

How does the Ising model generate long-distance communication from only local interactions?

Why are power laws treated as evidence of scale invariance rather than just another statistical pattern?

What experimental signature links neural activity to criticality?

How does the branching model translate criticality into a single tunable parameter?

Why would operating near σ = 1 improve information processing?

Review Questions

- How do correlation length and dynamic correlation behave as temperature (or σ) approaches the critical point, and what mechanism produces that peak?

- What specific features of neuronal avalanches (as defined in the transcript) support the claim of scale invariance?

- In the branching model, what changes when σ moves from below 1 to above 1, and how does that relate to excitation–inhibition balance?

Key Points

1. Second-order phase transitions produce a continuous change in an order parameter while creating a critical point with distinctive long-range correlations and scale-free behavior.

2. In the Ising model, temperature drives a shift from ordered alignment to disordered randomness, with dynamic correlation peaking at the critical temperature and correlation length growing sharply.

3. Power-law cluster-size distributions signal scale invariance: probability ratios like P(2x)/P(x) remain constant across scales, unlike exponential distributions.

4. Neuronal avalanches (cascades of threshold-crossing activity separated by quiescence) show power-law distributions in size and duration, consistent with brain networks operating near a second-order transition.

5. A branching model captures neural criticality using the branching ratio σ (average descendants per active neuron): σ < 1 leads to decay, σ > 1 leads to runaway amplification, and σ = 1 yields critical avalanches.

6. Excitation–inhibition balance is presented as the biological mechanism that tunes σ; blocking inhibition shifts dynamics toward supercritical behavior, while blocking excitation shifts toward subcritical behavior.

7. At criticality, information transmission is predicted to be optimized because outputs neither vanish (subcritical) nor saturate (supercritical), making output activity more informative about inputs.