Can We Build an Artificial Hippocampus?

Based on Artem Kirsanov's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

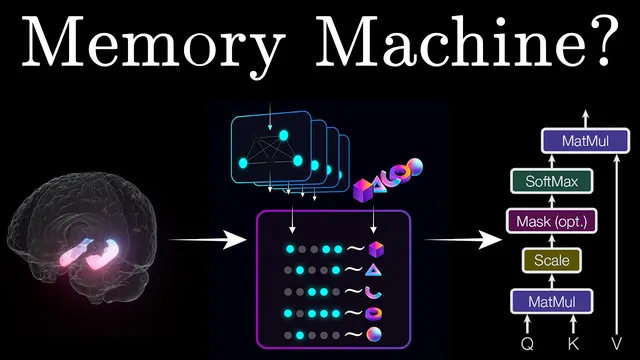

TEM frames navigation as next-observation prediction from action–observation sequences, with learning driven by prediction-error minimization.

Briefing

Artificial hippocampus–style intelligence hinges on a simple but powerful idea: learn to predict the next sensory event by factoring experience into reusable spatial structure plus a memory that binds “where” to “what.” The Tolman–Eichenbaum machine (TEM) framework described here treats navigation as a sequence-prediction problem—observations arrive alongside actions, and the system trains to minimize prediction error. Because the training data come from structured environments, the most efficient way to reduce error is to infer the underlying relational rules of the world, not just memorize transitions. That pressure to discover structure is what enables flexible generalization.

The model is built from two core components inspired by the entorhinal cortex and hippocampus. A position module, analogous to medial entorhinal inputs, performs path integration: it updates an internal “location belief” using actions alone, without directly receiving sensory observations. A memory module then binds this positional state to incoming sensory inputs, storing associations like “at X, I saw Y.” Crucially, the memory is associative: partial cues can retrieve full content. Provide position and it reconstructs the likely sensory observation; provide sensory input and it can infer the corresponding location. During prediction, the system rolls the position module forward through an action sequence, then queries memory using the resulting position to retrieve the next expected observation.

To demonstrate generalization, the transcript uses a family-tree navigation task. After training on many trees generated from shared transition rules, the position module learns to return to the same internal state after loops, effectively embedding the world’s transition logic into its update dynamics. When the model reaches the end of a sequence, it uses the path-integrated position to retrieve the correct missing sensory element from memory—without having explicitly memorized every possible edge.

Performance is contrasted with a lookup-table baseline that stores every “previous observation + action → next observation” pair. That approach requires visiting all possible combinations to reach perfect accuracy, scaling with the number of edges in the relational graph. TEM reaches high accuracy by visiting nodes rather than all edges, reflecting a learned structural representation. Internally, the model’s emergent representations mirror hippocampal and entorhinal cell types: position-module units develop periodic, grid-like firing patterns; memory-module units behave like place cells, firing for specific conjunctions of location and sensory context. Representations remap across environments, and the transcript emphasizes that grid and place-like structure was not hard-coded—random parameters optimized under prediction pressure produced it.

The framework also adapts when sensory statistics change to resemble real animal behavior. Training with boundary-seeking and object-approach biases yields boundary cells, object-vector-like responses, and even landmark-like selectivity. In more complex alternation tasks, the model learns latent rules about reward timing and develops splitter-cell-like activity modulated by both position and future turn direction.

Finally, the model helps reinterpret hippocampal remapping. Place-cell remapping is not purely random in this account: because place-like units are conjunctions of sensory and structural inputs, grid-cell structure constrains where place fields shift. The transcript notes that a predicted correlation between grid–place alignment across environments matches experimental findings in real brains.

In short, TEM offers a unified computational story for how factorized spatial structure and associative memory can produce rapid abstraction, biologically plausible representations, and testable links between entorhinal grid dynamics and hippocampal remapping—plus a claimed connection to transformer architectures via a modified TEM variant.

Cornell Notes

The Tolman–Eichenbaum machine (TEM) frames navigation as next-step prediction from sequences of actions and observations. It learns world structure by minimizing prediction error, using a factorized architecture: a position module performs path integration from actions to estimate location, while a memory module binds that location to sensory inputs and supports associative recall from partial cues. After training on many structured environments, the system can predict unseen transitions by retrieving the correct “what” for a path-integrated “where,” without memorizing every edge in the state graph. Internally, it develops grid-like periodic activity in the position module and place-like conjunction activity in the memory module, including hippocampal remapping across contexts. Changing training statistics to match animal behavior yields boundary, object-vector, landmark, and splitter-like representations, and it constrains remapping via grid–place alignment correlations seen in real data.

Why does TEM treat navigation as a prediction problem instead of a direct mapping from state to action or reward?

How do the position module and memory module work together to produce correct next-observation predictions?

What is the key difference between TEM and a lookup-table approach in terms of what must be experienced to reach high accuracy?

What emergent neural-like representations arise inside TEM, and how are they linked to hippocampal and entorhinal cell types?

How does changing the statistics of sensory experience affect the representations TEM learns?

What does TEM suggest about why hippocampal place-cell remapping is not entirely random?

Review Questions

- How does path integration in the position module enable prediction when the final sensory observation is missing from the sequence?

- Why does TEM’s accuracy scale more with visited nodes than with visited edges, and what does that imply about learned structure?

- What evidence in the transcript links grid-like and place-like representations to constrained remapping rather than purely random drift?

Key Points

- 1

TEM frames navigation as next-observation prediction from action–observation sequences, with learning driven by prediction-error minimization.

- 2

A factorized architecture separates “where” (position module using actions and path integration) from “what” (memory module binding position to sensory inputs).

- 3

Associative memory lets the model retrieve sensory content from partial cues, enabling prediction via a path-integrated location query.

- 4

Compared with lookup tables, TEM reaches high accuracy without memorizing every possible transition edge, because it learns relational structure.

- 5

Emergent internal representations resemble entorhinal grid-like activity and hippocampal place-cell conjunctions, including remapping across environments.

- 6

When training data reflect animal-like behavior, TEM develops boundary, object-vector, landmark-like, and splitter-cell-like selectivity and learns latent task rules.

- 7

TEM links remapping to grid–place alignment: place-field shifts across environments are constrained by underlying grid structure rather than drifting randomly.