Deploying My First AI AGENT in Production!

Based on All About AI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Claude 3 Haiku is used to automate YouTube comment replies by combining a 200k context window with prompt-based “in-context training,” avoiding fine-tuning.

Briefing

A low-cost AI model with a large context window powers an automated YouTube “reply agent” that answers comments in a specific creator’s voice, without fine-tuning. The setup relies on Claude 3 Haiku paired with “in-context training”: a prompt filled with dozens of real comment/response examples plus the video’s transcript, so the model can pick up the style and content boundaries from the context alone.

The practical pitch is cost and speed. Claude 3 Haiku is described as having a 200k context window and being “super fast” and “super cheap,” priced at about a quarter of a dollar per million input tokens. In the creator’s tests, each response uses roughly 8,247 input tokens and produces about 50–100 output tokens, landing at an estimated ~$0.00215 per comment (about $2.15 per 1,000 comments). That’s positioned as dramatically cheaper than GPT-4-class options or Claude 3 Opus, while still delivering good-quality answers that draw on a long context.
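The cost figure can be checked with a few lines of arithmetic. The sketch below is illustrative only: the input price comes from the text, while the output price of $1.25 per million tokens is an assumption based on Claude 3 Haiku's published launch pricing, and the function name is hypothetical.

```python
# Prices are USD per million tokens.
INPUT_PRICE_PER_MTOK = 0.25   # "about a quarter of a dollar" per the summary
OUTPUT_PRICE_PER_MTOK = 1.25  # assumption: not stated in the summary


def cost_per_comment(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of generating one reply."""
    input_cost = input_tokens * INPUT_PRICE_PER_MTOK / 1_000_000
    output_cost = output_tokens * OUTPUT_PRICE_PER_MTOK / 1_000_000
    return input_cost + output_cost


if __name__ == "__main__":
    # Token counts reported in the creator's tests.
    for output_tokens in (50, 100):
        per_comment = cost_per_comment(8_247, output_tokens)
        print(
            f"{output_tokens} output tokens: ${per_comment:.5f} per comment, "
            f"${per_comment * 1000:.2f} per 1,000 comments"
        )
```

Under these assumptions the estimate lands between roughly $2.12 and $2.19 per 1,000 comments, in line with the ~$2.15 figure. Input tokens dominate the cost, so trimming the context file is the main lever for lowering it.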

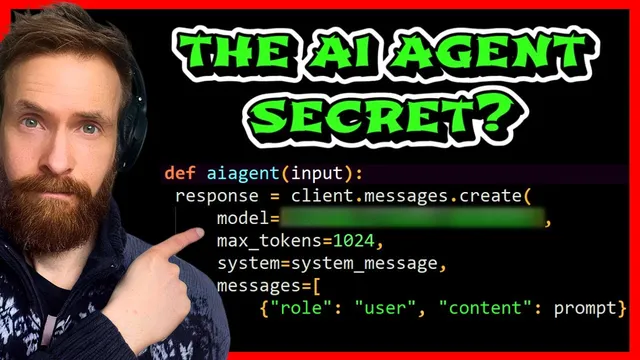

The workflow is straightforward and production-oriented. New YouTube comments are pulled via the YouTube API into a Python service, which then sends each comment, along with the preloaded in-context material, to Claude 3 Haiku. The model’s reply is posted back to YouTube through the API. To avoid quota issues, the system checks for new comments every three minutes, so commenters should receive a response within roughly that window.
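The fetch, generate, post cycle could be sketched roughly as follows. This is not the creator's code: the three callables are hypothetical stand-ins for the YouTube API calls and the Claude request, passed in as parameters so the loop itself stays testable without network access.

```python
import time
from typing import Callable, Iterable, Set, Tuple

Comment = Tuple[str, str]  # (comment_id, comment_text)


def run_reply_cycle(
    fetch_new_comments: Callable[[], Iterable[Comment]],
    generate_reply: Callable[[str], str],
    post_reply: Callable[[str, str], None],
    already_answered: Set[str],
) -> int:
    """Process one batch of comments and return how many replies were posted."""
    posted = 0
    for comment_id, text in fetch_new_comments():
        if comment_id in already_answered:
            continue  # never reply twice to the same comment
        reply = generate_reply(text)
        post_reply(comment_id, reply)
        already_answered.add(comment_id)
        posted += 1
    return posted


def poll_forever(
    fetch_new_comments: Callable[[], Iterable[Comment]],
    generate_reply: Callable[[str], str],
    post_reply: Callable[[str, str], None],
    interval_seconds: int = 180,  # three minutes, as described above
) -> None:
    """Run one reply cycle, sleep, and repeat indefinitely."""
    answered: Set[str] = set()
    while True:
        run_reply_cycle(fetch_new_comments, generate_reply, post_reply, answered)
        time.sleep(interval_seconds)
```

Tracking answered comment IDs in memory is the simplest option; a real deployment would likely persist them so a restart does not trigger duplicate replies.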

The “in-context training” itself is the core mechanism. Instead of fine-tuning or retrieval-augmented generation in the classic sense, the agent builds a large prompt from a context file containing: (1) a system instruction to mimic the creator’s commenting style, (2) 40+ real example pairs of user comments and the creator’s answers, and (3) the full video transcript generated from the creator’s own content. When a new comment arrives, the prompt instructs the model to give a short but strong answer in lower case and to avoid restating the user’s question too much—both to save tokens and to keep the voice consistent.
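A minimal sketch of how such a prompt might be assembled is shown below. The function names, the tag-style section markers, and the exact wording of the style rules are illustrative assumptions, not the creator's actual context file. The returned dictionary uses the parameter names of Anthropic's Messages API (`model`, `max_tokens`, `system`, `messages`) and could be passed to `anthropic.Anthropic().messages.create(**request)`, which is not called here.

```python
from typing import Dict, List, Tuple

# Hypothetical wording of the style constraints described above.
STYLE_RULES = (
    "give a short but strong answer in lower case. "
    "do not restate the user's question."
)


def build_system_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    transcript: str,
) -> str:
    """Combine the instruction, example pairs, and transcript into one string."""
    pairs = "\n\n".join(
        f"comment: {comment}\nanswer: {answer}" for comment, answer in examples
    )
    return (
        f"{instruction}\n\n"
        f"<examples>\n{pairs}\n</examples>\n\n"
        f"<transcript>\n{transcript}\n</transcript>"
    )


def build_request(system_prompt: str, new_comment: str, max_tokens: int = 150) -> Dict:
    """Build keyword arguments for a Messages API call (the call itself is omitted)."""
    return {
        "model": "claude-3-haiku-20240307",
        "max_tokens": max_tokens,  # assumed cap; replies are said to run 50-100 tokens
        "system": system_prompt,
        "messages": [
            {
                "role": "user",
                "content": f"{STYLE_RULES}\n\nnew comment: {new_comment}",
            }
        ],
    }
```

Because the system prompt is identical for every comment on a given video, it only needs to be built once per video; only the short user message changes per request.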

A live example shows the agent responding to a comment about Nvidia’s latest GTC keynote, producing a coherent answer even though, according to the creator, the agent had no direct information about Nvidia Blackwell shipments. In another test, a user asked about the biggest challenge in building the system and how to become a member to access the code; the agent named compute and storage for saving “inner monologue thoughts” as the challenge and pointed to the membership link in the description.

The transcript-to-context step is also emphasized. The creator uses Whisper to transcribe an MP3 version of the video, then feeds that transcript into the context file so the agent can answer questions grounded in the specific video content. Overall, the takeaway is that a carefully constructed prompt—real examples plus transcript plus style constraints—can deliver a production-ready, creator-specific commenting assistant at a price point that makes high-volume automation feasible.
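The transcription step might look like the sketch below. It assumes the open-source `openai-whisper` package (which also needs `ffmpeg` installed); the summary does not say whether the creator used that package or a hosted API. The file paths, the `base` model size, and both function names are hypothetical.

```python
from pathlib import Path


def transcribe_mp3(mp3_path: str, model_name: str = "base") -> str:
    """Transcribe an audio file with the open-source Whisper package."""
    # Imported lazily so the rest of this module works without Whisper installed.
    import whisper

    model = whisper.load_model(model_name)
    result = model.transcribe(mp3_path)
    return result["text"]


def save_transcript(transcript: str, out_path: str) -> Path:
    """Collapse whitespace and write the transcript to a text file."""
    cleaned = " ".join(transcript.split())
    path = Path(out_path)
    path.write_text(cleaned + "\n", encoding="utf-8")
    return path


if __name__ == "__main__":
    # Hypothetical file names for illustration.
    text = transcribe_mp3("video.mp3")
    save_transcript(text, "transcript.txt")
```

Collapsing whitespace before saving keeps the transcript compact, which matters because the transcript is sent as input tokens with every reply.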

Cornell Notes

Claude 3 Haiku is used to run a production-style YouTube comment responder by combining a large 200k context window with “in-context training.” Instead of fine-tuning, the system builds a prompt containing a system instruction to mimic the creator’s voice, 40+ real comment/answer example pairs, and the full video transcript (generated with Whisper). Incoming comments are fetched via the YouTube API, sent to Claude 3 Haiku with the preloaded context, and the generated reply is posted back through the API. Cost is estimated at about $0.00215 per comment (roughly $2.15 per 1,000 comments) using ~8,247 input tokens and 50–100 output tokens per response, making the approach practical for frequent automation.

How does “in-context training” replace fine-tuning in this setup?

What role does the video transcript play in answer quality?

How is the system integrated with YouTube in production?

Why is Claude 3 Haiku positioned as cost-effective for high-volume commenting?

What constraints are used to keep replies in the creator’s style and within token limits?

What challenge appears when scaling this kind of agent?

Review Questions

- What specific elements are included in the prompt context file, and how does each one influence the final reply?

- How does the polling interval and API integration affect user experience and system reliability?

- Based on the token estimates given, how would you approximate the cost impact of increasing average input tokens per comment?

Key Points

1. Claude 3 Haiku is used to automate YouTube comment replies by combining a 200k context window with prompt-based “in-context training,” avoiding fine-tuning.

2. The prompt context includes a system instruction to mimic the creator’s voice, 40+ real comment/answer example pairs, and the full video transcript.

3. Whisper-generated transcripts are injected into the context so answers can reference the specific content of the video being discussed.

4. A Python service integrates with the YouTube API to fetch new comments and post generated replies, polling roughly every three minutes to manage quotas.

5. Cost estimates rely on token usage: about 8,247 input tokens and 50–100 output tokens per comment, totaling roughly $0.00215 per comment (~$2.15 per 1,000).

6. The prompt explicitly asks for short, high-quality replies in lower case and discourages restating the user’s question to save tokens and preserve style.