Digest What You Read: Walkthrough of my process for understanding Rationality by Eliezer Yudkowsky

Based on a YouTube video from Zsolt's Visual Personal Knowledge Management channel. If you like this content, support the original creator by watching, liking, and subscribing.

Understanding is framed as digestion: reading must be followed by time and repeated reprocessing, not speed.

Briefing

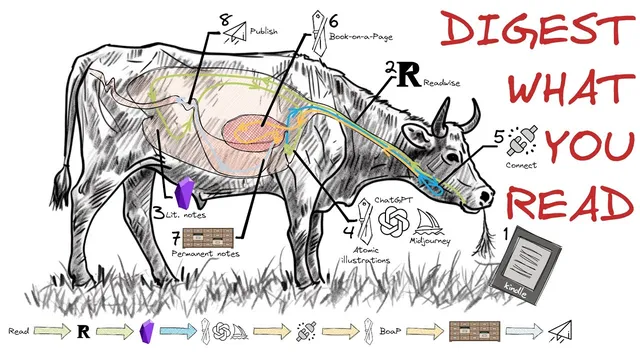

A slow, deliberate “rumination” process—turning raw reading into visual models, then into permanent notes—is presented as the antidote to information overload and today’s speed-and-productivity obsession. The core claim is that real understanding requires time for ideas to settle, be reprocessed from new angles, and be stored in a way that can be retrieved and reused later. The cow’s digestive system becomes the guiding metaphor: grass is pre-processed, regurgitated, chewed again, and only then fully digested. Likewise, reading should be followed by structured re-chewing—concept maps, chapter-level visuals, summaries, and linked notes—so knowledge becomes part of one’s durable mental system.

That workflow is framed as both a cognitive necessity and a practical response to modern “eating disorders” of information. The argument targets the productivity fetish: the belief that speed-reading, efficiency, and doing more tasks quickly automatically create value. Instead, the process is described as intentionally long and complicated because digestion—biological or informational—cannot be rushed. The workshop’s six-week structure is positioned as a guided method for this: read a difficult book, process it into a “book on a page,” create permanent notes, and present the material. The emphasis lands on rumination as the heart of the method: time plus repeated transformation of the same material.

The walkthrough then uses Eliezer Yudkowsky’s Rationality as the test case. Early confusion during chapter one is met with a concept map that distinguishes epistemic rationality (building accurate maps of reality by improving beliefs) from instrumental rationality (systematically achieving values by choosing actions that lead to desired outcomes). After importing highlights into Obsidian, the workflow shifts into progressive summarization (Tiago Forte’s approach) and chapter-by-chapter “atomic visuals”: simple diagrams meant to reframe each chapter’s ideas. Because the book is hard, external assistance is added carefully: text is copied from LessWrong, then ChatGPT is asked to produce two-sentence summaries per chapter, recommend simple SVG icons, and generate image prompts for Midjourney. The resulting images are standardized with consistent style settings and later embedded into an organized “map of the book” that lays out the logical flow across roughly 45 chapters.
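As a rough illustration of the last step, the “map of the book” can be thought of as an index note that lists every chapter in order, with its visual and short summary. The sketch below is only an assumption about how such a note could be assembled, not the author's actual tooling: the `Chapter` class, the `build_book_map` function, and the placeholder titles and file names are all hypothetical. The only Obsidian-specific parts are the `[[...]]` wikilink and `![[...]]` embed syntax.

```python
from dataclasses import dataclass


@dataclass
class Chapter:
    """Hypothetical record for one processed chapter."""
    number: int
    title: str        # also used as the name of the chapter's note
    summary: str      # e.g. a two-sentence summary
    image_file: str   # e.g. the generated chapter visual


def build_book_map(book_title: str, chapters: list[Chapter]) -> str:
    """Return Markdown for an index note that lists all chapters in order."""
    lines = [f"# Map of the book: {book_title}", ""]
    for ch in sorted(chapters, key=lambda c: c.number):
        # [[...]] is an Obsidian wikilink; ![[...]] embeds a file in the note.
        lines.append(f"## {ch.number}. [[{ch.title}]]")
        lines.append(f"![[{ch.image_file}]]")
        lines.append(ch.summary)
        lines.append("")
    return "\n".join(lines)


# Placeholder data for illustration only (not taken from the book).
chapters = [
    Chapter(2, "Placeholder chapter B", "Placeholder summary B.", "chapter-02.png"),
    Chapter(1, "Placeholder chapter A", "Placeholder summary A.", "chapter-01.png"),
]
note = build_book_map("Rationality", chapters)
print(note)
```

Sorting by chapter number keeps the note aligned with the book's logical flow even if chapters were processed out of order.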

The “book on a page” and permanent notes focus on the book’s major themes: biases (like availability bias, conjunction fallacy, planning fallacy, and illusion of transparency), fake beliefs (including anticipation/proof problems, applause-seeking language, and “belief as attire”), and the mechanics of evidence. Evidence is treated as cause-and-effect-linked to what’s being inferred, with categories such as rational, historical, legal, and scientific evidence. The method also stresses experimental discipline—writing predictions before testing to reduce confirmation bias—and warns against misuse of Occam’s razor and “scientific-sounding” language without understanding. Blockers to rationality include curiosity stoppers (mysterious words, emergence-like jargon), semantic stop signs (terms that end questioning), and “guessing the teacher’s password” rather than understanding.
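The conjunction fallacy mentioned above is the mistake of judging a detailed story (A and B) as more likely than one of its parts (A alone). A minimal sketch of why that cannot be right, using the product rule P(A and B) = P(A) × P(B | A): since P(B | A) is at most 1, the conjunction can never be more probable than A by itself. The function name and the numbers are made up for illustration.

```python
def conjunction_probability(p_a: float, p_b_given_a: float) -> float:
    """Product rule: P(A and B) = P(A) * P(B | A)."""
    if not (0.0 <= p_a <= 1.0 and 0.0 <= p_b_given_a <= 1.0):
        raise ValueError("probabilities must lie in [0, 1]")
    return p_a * p_b_given_a


# Made-up numbers, for illustration only.
p_a = 0.10          # probability of claim A
p_b_given_a = 0.60  # probability of extra detail B, given that A holds

p_both = conjunction_probability(p_a, p_b_given_a)

# Adding detail can make a story feel more plausible,
# but it can only keep or lower its probability.
assert p_both <= p_a
print(f"P(A) = {p_a:.2f}, P(A and B) = {p_both:.2f}")
```

The same inequality holds for any inputs in the valid range, which is what makes rating the conjunction above its parts a bias rather than a matter of opinion.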

The closing takeaway ties the whole system back to the workshop’s purpose: build a digestible, retrievable knowledge structure through time, visuals, and reprocessing—so understanding survives beyond the initial reading and can be regenerated when needed.

Cornell Notes

The workflow described treats learning like digestion: raw input must be pre-processed, re-chewed, and only then fully absorbed. The process is built around “rumination”: time plus repeated transformation of reading into concept maps, chapter-level atomic visuals, and permanent notes in Obsidian. Eliezer Yudkowsky’s Rationality is used as the example, with early confusion handled by a concept map distinguishing epistemic rationality (accurate beliefs) from instrumental rationality (effective actions). After importing highlights, the method uses progressive summarization and chapter-by-chapter visuals, with ChatGPT helping generate concise summaries and image prompts, and Midjourney producing the images in a consistent style. The resulting “book on a page” and note system organizes biases, fake beliefs, evidence types, and rationality blockers into a structure meant to be retrievable and usable.

Why does the cow metaphor matter for how knowledge is processed?

What’s the difference between epistemic rationality and instrumental rationality in this framework?

How does the workflow turn a hard book into something “digestible”?

What are the major categories of rationality problems highlighted from Rationality?

How does the evidence section change what “proof” should mean?

Review Questions

- How does the workflow operationalize “rumination” after reading, and what artifacts (maps, visuals, notes) are created at each stage?

- Pick one bias (e.g., availability bias or conjunction fallacy) and describe how the workflow would store and connect it to evidence and testing practices.

- What does it mean to treat evidence as a cause-and-effect chain, and how does that affect how someone should interpret “confirmation” versus “verification”?

Key Points

1. Understanding is framed as digestion: reading must be followed by time and repeated reprocessing, not speed.

2. The workflow uses “rumination” artifacts (concept maps, chapter-level atomic visuals, and linked permanent notes) to turn reading into durable knowledge.

3. Rationality is organized into epistemic rationality (accurate beliefs) and instrumental rationality (effective action toward values).

4. Biases and fake beliefs are treated as distinct obstacles, with examples like availability bias, conjunction fallacy, illusion of transparency, anticipation/proof failures, and applause-seeking language.

5. Evidence is defined through cause-and-effect links and categorized into rational, historical, legal, and scientific evidence, with scientific evidence requiring reproducibility.

6. Confirmation bias is countered by writing experimental predictions before testing, and by checking when models fail to fit data.

7. Curiosity stoppers and semantic stop signs (mysterious jargon, “scientific-sounding” language without understanding, and terms that end questioning) block rational inquiry.