How to use #Consensus for Research, Search Literature, Gaps, Questionnaires, and More

Based on Research With Fawad's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Consensus turns research questions into synthesized answers grounded in multiple papers, then provides references for follow-up reading and citation.

Briefing

Consensus is positioned as an AI research assistant that turns a question into a literature-backed synthesis, then helps researchers drill down with citations, filters, and follow-up prompts. The core workflow is straightforward: ask a research question, let Consensus analyze multiple papers, and use the resulting synthesis to guide what to write, what to test, and where to look next. That matters because it compresses the early stages of academic work—finding direction in the literature, identifying gaps, and assembling evidence—into a repeatable process.

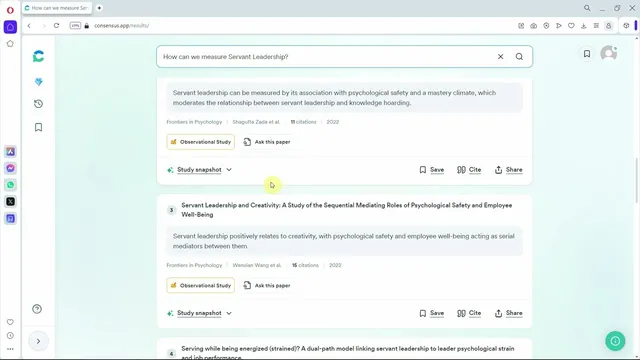

A concrete example centers on servant leadership and project success. After entering a question like “How can servant leadership impact a project success,” Consensus returns a synthesized conclusion based on ten analyzed papers, indicating a positive relationship. Crucially, it doesn’t stop at a one-line answer: it provides “key insights” tied to the relationship and supplies references that can be used directly in a literature review. The tool also supports exporting results (including paper metadata such as abstract, study type, journal, authors, and a Consensus link), which helps researchers move from synthesis to drafting with traceable sources.

Beyond summarizing established findings, Consensus is used to narrow and improve the evidence base through filtering. Researchers can restrict results by recency (e.g., selecting 2024 or switching to 2020–present when newer years yield no results), access type (open access), citation count, study type (such as systematic reviews), journal quality tiers (Q1/Q2/Q3), and subject area. This makes it easier to assemble a more defensible set of studies for systematic literature reviews and to tailor the evidence to the standards of a given research design.
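The same filtering logic can be applied offline to an exported result set. The sketch below is only an illustration: the `Paper` fields, the helper names, and the placeholder records are assumptions made for this example, not Consensus's actual export schema or API. It also mirrors the fallback described above, where the year floor is relaxed from 2024 to 2020 when the stricter setting returns nothing.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set, Tuple


@dataclass(frozen=True)
class Paper:
    # Hypothetical record layout; real export columns may differ.
    title: str
    year: int
    open_access: bool
    citations: int
    study_type: str
    quartile: str  # journal tier, e.g. "Q1"


def filter_papers(
    papers: Iterable[Paper],
    *,
    min_year: int = 0,
    open_access_only: bool = False,
    min_citations: int = 0,
    study_types: Optional[Set[str]] = None,
    quartiles: Optional[Set[str]] = None,
) -> List[Paper]:
    """Keep only the papers that satisfy every active filter."""
    return [
        p
        for p in papers
        if p.year >= min_year
        and (p.open_access or not open_access_only)
        and p.citations >= min_citations
        and (study_types is None or p.study_type in study_types)
        and (quartiles is None or p.quartile in quartiles)
    ]


def filter_with_year_fallback(
    papers: List[Paper], year_floors: List[int], **criteria
) -> Tuple[Optional[int], List[Paper]]:
    """Try each year floor in order and return the first one that yields results."""
    for floor in year_floors:
        hits = filter_papers(papers, min_year=floor, **criteria)
        if hits:
            return floor, hits
    return None, []


# Placeholder records, invented for the example.
papers = [
    Paper("Placeholder study A", 2021, True, 40, "systematic review", "Q1"),
    Paper("Placeholder study B", 2019, True, 120, "systematic review", "Q1"),
    Paper("Placeholder study C", 2022, False, 15, "rct", "Q2"),
]

floor, hits = filter_with_year_fallback(
    papers,
    [2024, 2020],  # strict first, then the wider range
    open_access_only=True,
    study_types={"systematic review"},
    quartiles={"Q1", "Q2"},
)
print(floor, [p.title for p in hits])
```

Here the 2024 floor matches nothing, so the search widens to 2020 and keeps only the open-access systematic review published in a Q1 or Q2 journal.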

Consensus also supports deeper interrogation of individual papers. For a selected study, it can summarize findings, explore methods, and identify theories used—such as Conservation of Resources Theory—so researchers can understand how relationships were developed and how constructs were operationalized. It further offers study snapshots and options to cite, save, or share results.

Where the tool becomes especially useful for original research is in gap-spotting and measurement planning. By asking for “new outcomes” or whether certain variables have been assessed with servant leadership, Consensus can surface potential constructs that appear underexplored (e.g., outcomes not previously linked to servant leadership). It can also suggest future research directions via literature review outputs and even propose other leadership styles to examine—such as participative, sustainable, or resource dilemma handling leadership—when evidence is limited.

Finally, Consensus is used for questionnaire and analysis support. Researchers can ask how to measure a construct (e.g., servant leadership or life satisfaction), then open the relevant papers to see which instruments and measurement approaches were used. It can also answer technical analysis questions, such as the HTMT threshold for discriminant validity, returning a suggested cutoff (0.85, with HTMT values below it taken as evidence of discriminant validity) along with supporting papers. Throughout, the emphasis remains on using AI-generated summaries as a starting point while still reading the underlying studies to ensure completeness and accuracy for academic writing and results.
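To make the HTMT check concrete, here is a minimal sketch of how the ratio is commonly computed from an item correlation matrix: the average correlation between items of two different constructs, divided by the geometric mean of the average within-construct item correlations. The function name, the matrix values, and the item groupings are made up for illustration, and the sketch uses raw correlations and assumes each construct has at least two items. An HTMT value below the 0.85 cutoff is read as supporting discriminant validity.

```python
from itertools import combinations, product
from math import sqrt
from statistics import mean

HTMT_CUTOFF = 0.85  # cutoff value cited in the text


def htmt(corr, items_a, items_b):
    """HTMT ratio for two constructs, given item indices into a correlation matrix."""
    # Average correlation between items of different constructs.
    hetero = mean(corr[i][j] for i, j in product(items_a, items_b))
    # Average correlation among items of the same construct.
    mono_a = mean(corr[i][j] for i, j in combinations(items_a, 2))
    mono_b = mean(corr[i][j] for i, j in combinations(items_b, 2))
    return hetero / sqrt(mono_a * mono_b)


# Invented correlations: items 0-1 belong to construct A, items 2-3 to construct B.
corr = [
    [1.00, 0.80, 0.45, 0.45],
    [0.80, 1.00, 0.45, 0.45],
    [0.45, 0.45, 1.00, 0.70],
    [0.45, 0.45, 0.70, 1.00],
]

ratio = htmt(corr, items_a=[0, 1], items_b=[2, 3])
supports_discriminant_validity = ratio < HTMT_CUTOFF
print(round(ratio, 3), supports_discriminant_validity)
```

In practice this value would come from SEM software rather than hand-rolled code; the sketch only shows what the threshold is being compared against.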

Cornell Notes

Consensus is an AI research assistant that helps turn research questions into literature-based syntheses, then supports follow-up work with citations, filters, and paper-level details. In an example on servant leadership and project success, it synthesizes findings from ten papers and provides references and key insights to support a literature review. The workflow extends beyond “what is known” by using filters (year, open access, citations, study type, journal tier) and by prompting for research gaps, such as outcomes not yet assessed with servant leadership. It also helps with measurement and analysis planning by pointing to questionnaires used in prior studies and answering technical thresholds like HTMT for discriminant validity, while encouraging researchers to read the original papers.

How does Consensus help establish what the literature already says about a relationship (e.g., servant leadership → project success)?

What filtering controls make the evidence set more suitable for a systematic or high-quality literature review?

How can Consensus support theory-driven writing and method transparency in a literature review?

How does Consensus help identify research gaps and future research directions?

How can Consensus assist with measurement planning for constructs like servant leadership?

What role does Consensus play in technical analysis decisions such as discriminant validity thresholds?

Review Questions

- When building a literature review, what two things must be explained about variable relationships, and how does Consensus help supply evidence for both?

- Which filters would you use to prioritize recent, high-quality, open-access systematic reviews, and why might you adjust the year range if no results appear?

- How would you use Consensus to move from a research gap (unassessed outcomes) to a measurement plan (questionnaires/instruments) for a new study?

Key Points

1. Consensus turns research questions into synthesized answers grounded in multiple papers, then provides references for follow-up reading and citation.

2. A literature review needs both how one variable influences another and why that influence occurs; Consensus outputs key insights plus paper references to support both.

3. Filtering by year, open access, citation count, study type, and journal tier helps assemble a higher-quality evidence set for systematic reviews.

4. Paper-level prompts can extract methods and theories used (e.g., Conservation of Resources Theory), supporting more rigorous, theory-aware writing.

5. Consensus can identify research gaps by suggesting outcomes not yet assessed with a construct and by highlighting leadership styles with limited evidence.

6. For measurement, Consensus can point to questionnaires and instruments used in prior studies, but researchers should open and read the original papers to confirm details.

7. Consensus can provide methodological thresholds (e.g., HTMT discriminant validity at 0.85) along with supporting sources for results reporting.