How **WE** Use AI In Software Development

Based on The PrimeTime's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

AI coding works best when humans keep control of architecture and review AI output, especially in early codebase stages where patterns aren’t established.

Briefing

AI-assisted coding is most useful when it’s treated like a limited collaborator—good for accelerating well-bounded tasks and prototypes, but risky as a default driver for long-term, pattern-heavy codebases. Across the discussion, the biggest practical theme wasn’t whether LLMs can generate code; it was how teams decide when to delegate, how to manage quality, and what kinds of work actually benefit.

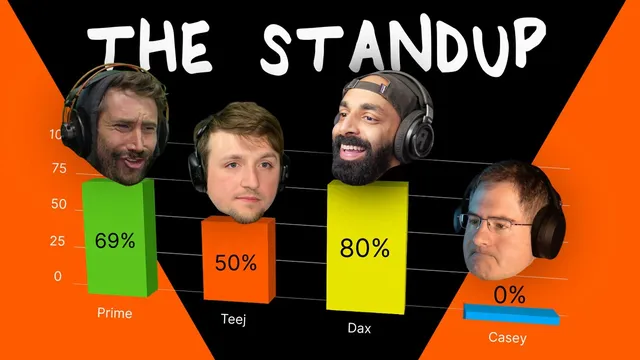

Dax describes using AI agents as a way to push productivity while still keeping humans in control. His workflow often splits a coding task into a “dumber” portion and a more complex portion: the AI handles the routine work, then he checks results before continuing. He avoids AI in early codebase stages, arguing that LLMs struggle when there’s not yet consistency in abstractions and patterns. For Open Code—his model-independent command-line agent—he says the project was largely rewritten manually to establish those patterns first. Even when AI-generated code gets discarded, he values the learning: it can surface ideas and reveal pitfalls faster than slow, manual discovery.
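
The split Dax describes can be sketched as a small review gate. This is an illustrative sketch only, not how Open Code or Dax's own tooling works: `Subtask`, `run_with_review`, `agent`, and `human_review` are hypothetical names invented here, and the agent is modeled as a plain function so the example runs without any model behind it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Subtask:
    description: str
    routine: bool  # True for the well-bounded, "dumber" portion of the work


def run_with_review(
    subtasks: List[Subtask],
    agent: Callable[[str], str],               # hypothetical: returns a proposed change
    human_review: Callable[[str, str], bool],  # hypothetical: True means "approved"
) -> Dict[str, List[str]]:
    """Send routine subtasks to the agent and keep complex ones for a human."""
    result: Dict[str, List[str]] = {"accepted": [], "rejected": [], "manual": []}
    for task in subtasks:
        if not task.routine:
            # Complex or pattern-setting work is never delegated.
            result["manual"].append(task.description)
            continue
        proposal = agent(task.description)
        # Nothing is accepted until a person has checked the proposal.
        if human_review(task.description, proposal):
            result["accepted"].append(task.description)
        else:
            result["rejected"].append(task.description)
    return result
```

A rejected proposal is simply recorded rather than retried, which mirrors the point that discarded output can still be informative.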

Casey’s stance is far more skeptical, rooted less in model capability and more in what programming is supposed to be. He argues that AI-generated web code often becomes “stacking another piece of failure” on top of already-fragile systems—especially when the underlying web platform and abstractions are poorly designed. His critique extends to maintainability, security, and performance, warning that large-scale projects can accumulate messy, hard-to-audit code. He also points to the ephemerality of web development: APIs change, deployment environments shift, and knowledge can become obsolete quickly—making AI-generated scaffolding feel like a short-lived convenience rather than durable engineering.

TJ lands in the middle, treating AI as a tool for specific productivity wins rather than a replacement for engineering judgment. He cites practical areas where LLM help is immediately valuable: clearing repetitive backlog items, adding tests, improving CI-driven workflows, and reducing manual chores like logging boilerplate or error-handling scaffolding. He also supports “vibe coding” for low-stakes, fast-moving goals, such as quick prototypes or short-lived promotional sites, where throwing code away is acceptable. His estimate of how much he relies on AI ranges widely (roughly 25% to 100%), depending on whether the work is exploratory or tied to long-term maintenance.

PrimeTime’s perspective emphasizes a similar split: lean into AI when the goal is to get something working quickly, but recognize an inflection point where deeper complexity demands human understanding. He describes improving his vibe-coding process by forcing the AI to propose a plan first, then iterating through corrections before code generation. He also argues that AI is best at the “weather in 10 minutes” stage—useful for near-term outcomes—while longer-horizon predictions become unreliable.
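
The plan-first loop described above might look roughly like the following. It is a minimal sketch under stated assumptions, not PrimeTime's actual setup: `plan_then_generate`, `model`, and `review_plan` are hypothetical names, the prompts are placeholders, and `model` stands in for whatever LLM call a team actually uses.

```python
from typing import Callable, Optional


def plan_then_generate(
    task: str,
    model: Callable[[str], str],                  # hypothetical stand-in for an LLM call
    review_plan: Callable[[str], Optional[str]],  # returns a correction, or None to approve
    max_rounds: int = 3,
) -> Optional[str]:
    """Ask for a plan, iterate on corrections, and only then ask for code."""
    plan = model(f"Propose a step-by-step plan only, no code yet.\nTask: {task}")
    reviews = 0
    while True:
        correction = review_plan(plan)
        reviews += 1
        if correction is None:
            # The plan was approved, so code generation is allowed to start.
            return model(f"Implement this approved plan:\n{plan}")
        if reviews >= max_rounds:
            # Give up rather than generate code from an unapproved plan.
            return None
        plan = model(f"Revise the plan.\nPlan:\n{plan}\nCorrection: {correction}")
```

Returning `None` when the plan is never approved is a design choice made for this sketch: it keeps code generation strictly behind human sign-off.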

The group ultimately converges on a shared reality: marketing often overfocuses on “zero to one” demos, but most engineering effort is iterative work on existing systems. AI can still change the day-to-day by making prototypes cheaper and by helping teams burn down repetitive tasks—yet it doesn’t eliminate the need for strong fundamentals, careful review, and thoughtful architecture. The conversation ends with a promise to dig into what it actually takes to build a real agent, beyond simple API calls that route questions to a model.

Cornell Notes

The discussion treats AI coding as a delegation problem, not a magic replacement for engineering. Dax uses AI for bounded “dumber” tasks while keeping humans responsible for architecture and early codebase pattern-setting; he avoids AI-driven foundations and values learning even when generated code is thrown away. Casey rejects AI coding for many web contexts, arguing it often produces large, messy outputs on top of already-bad abstractions, raising concerns about maintainability, security, and performance. TJ and PrimeTime describe a practical middle ground: AI helps most with repetitive work, tests, and logging, and vibe coding can be effective for low-stakes prototypes, but long-term projects still require deep human understanding. The key takeaway is that AI’s best ROI comes from iterative, well-scoped use with review—not blind full automation.

- When does AI coding become genuinely useful rather than just “code generation”?

- Why do some participants avoid AI in the early stages of a project?

- What’s the strongest critique of AI coding in web development, and what does it imply?

- How do the panelists justify “vibe coding” or throwing code away?

- What practical workflow improvements help make AI output more reliable?

- Why does the conversation keep returning to “iteration on existing codebases” instead of only “zero to one”?

Review Questions

- Which tasks did Dax say he would delegate to AI, and what did he avoid delegating—especially early in a codebase?

- How do Casey’s concerns about web abstractions and API churn shape his view of AI-generated code?

- What workflow does PrimeTime use to improve AI reliability (planning vs direct generation), and how does that relate to the “inflection point” idea?

Key Points

1. AI coding works best when humans keep control of architecture and review AI output, especially in early codebase stages where patterns aren’t established.

2. A common workflow is splitting tasks into routine and complex parts: AI handles the routine work, then developers verify and integrate the results.

3. AI can reduce the cost of prototyping, making it easier to explore ideas quickly and discard prototypes when they fail validation.

4. Skepticism in web development centers on maintainability and the “stacking failure” problem: large AI outputs built on top of fragile abstractions.

5. AI’s strongest near-term value is in scoped, repetitive engineering tasks such as tests, logging boilerplate, and backlog cleanup with CI support.

6. Marketing tends to overemphasize zero-to-one demos; practical impact is more likely in iterative maintenance and incremental improvements to existing systems.