Hybrid Search RAG With Langchain And Pinecone Vector DB

Based on Krish Naik's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Hybrid search improves RAG retrieval by combining dense semantic similarity with sparse keyword matching instead of relying on one method alone.

Briefing

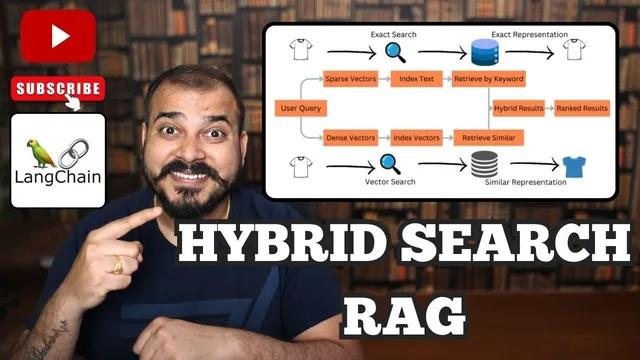

Hybrid search for RAG is built on a simple but powerful idea: retrieve relevant chunks using both semantic similarity (dense vector search) and exact/keyword matching (sparse vector search), then merge the two ranked lists into a single, better set of results. That matters because dense retrieval can miss exact terms, while keyword retrieval can miss meaning; combining both improves recall and relevance before the retrieved text is fed into an LLM for final summarization.

In the usual RAG pipeline, documents get split into chunks, each chunk is converted into an embedding vector, and those vectors are stored in a vector database. When a user asks a question, the query is embedded and a dense similarity search (often cosine similarity) returns the top-K most similar chunks. Those chunks are then combined with a prompt template and passed to an LLM to generate the answer.

Hybrid search keeps the dense-vector path but adds a parallel sparse-vector path. Semantic search corresponds to dense vectors: the text becomes dense embeddings where similarity is computed via vector metrics (the transcript mentions cosine similarity as the conceptual basis). Syntactic search corresponds to sparse representations: text is converted into sparse matrices using methods like one-hot encoding, bag-of-words, or TF-IDF. In that sparse space, only a small subset of terms is non-zero, making it well-suited for exact keyword matching.

Operationally, hybrid search stores both representations for the same documents in a vector store that supports both modes. On query time, the user question is transformed into two forms as well: sparse vectors for keyword search and dense vectors for semantic search. Each mode returns its own top-K results—often with different ordering and overlap. The remaining challenge is how to combine them.

The transcript uses Reciprocal Rank Fusion (RRF) to merge the two ranked lists. Each candidate document receives a score computed as a sum of terms of the form 1 / (C + rank), where C is a constant chosen by the system (the transcript notes values can range roughly from 1 to 60, with examples like 10, 20, or 60). Documents that rank highly in either semantic or keyword retrieval get boosted, and changing weightage between the two retrieval signals can reorder the final top-K output.

After laying out the theory, the walkthrough implements hybrid search with LangChain and Pinecone. Pinecone is used as the vector database, with a hybrid-capable retriever from LangChain that performs both dense and sparse retrieval. The setup includes creating a Pinecone index with vector dimension 384 (matching the Hugging Face sentence-transformer model used), choosing a metric compatible with the sparse/dense setup (the transcript specifies dot product), and deploying on AWS us-east-1.

For dense embeddings, the implementation uses Hugging Face embeddings via the sentence-transformers model all-MiniLM-L6-v2. For sparse retrieval, it uses a BM25 encoder (TF-IDF-based by default in the transcript) to generate sparse representations. A Pinecone hybrid search retriever is then created using both the dense embedding function and the BM25 sparse encoder, tied to the Pinecone index.

Finally, sample sentences about visiting cities across years are inserted into the index. Queries like “with what city did I visit last?” and “city did I visit first” return the correct year-to-city mapping, demonstrating that the retrieval step benefits from both semantic similarity and keyword/sparse matching before the LLM produces the response.

Cornell Notes

Hybrid search for RAG retrieves document chunks using two parallel signals: dense semantic similarity and sparse keyword matching. Text chunks are stored in a vector database in both dense-vector form (embeddings) and sparse-matrix form (e.g., TF-IDF/BM25). At query time, the question is converted into both representations, producing two top-K ranked lists. Reciprocal Rank Fusion (RRF) merges those lists by scoring candidates with terms like 1/(C+rank), boosting documents that rank well in either list. This approach improves relevance compared with using only dense or only keyword retrieval.

The implementation uses LangChain with Pinecone: a Pinecone index is created with dimension 384, dense embeddings come from Hugging Face all-MiniLM-L6-v2, and sparse retrieval uses a BM25 encoder (TF-IDF-based default). A hybrid retriever combines both retrieval modes and successfully answers “first/last visited” queries from inserted city-year sentences.

How does dense (semantic) retrieval differ from sparse (keyword) retrieval in hybrid search?

What does it mean to store both dense vectors and sparse matrices for the same documents?

How does Reciprocal Rank Fusion (RRF) combine two ranked lists?

Why does weightage between semantic and keyword results matter?

What concrete components are used in the LangChain + Pinecone implementation?

How does the example query demonstrate hybrid retrieval?

Review Questions

- When would sparse keyword retrieval outperform dense semantic retrieval in a RAG system, and why?

- Explain how RRF’s scoring formula uses rank positions from two retrieval lists to produce a single final ranking.

- In the Pinecone + LangChain setup, what must match between the embedding model and the Pinecone index configuration (e.g., dimension), and what happens if they don’t?

Key Points

- 1

Hybrid search improves RAG retrieval by combining dense semantic similarity with sparse keyword matching instead of relying on one method alone.

- 2

Sparse representations are typically built with TF-IDF/BM25-style methods, producing mostly zeros with non-zero weights for terms present in text.

- 3

Dense representations come from embedding models and support semantic similarity search (e.g., via cosine similarity as the conceptual basis).

- 4

Hybrid search requires merging two top-K ranked lists; Reciprocal Rank Fusion (RRF) scores candidates using 1/(C+rank) terms from each list.

- 5

Changing the weightage between semantic and keyword signals can reorder the final top-K results and affect which chunks reach the LLM.

- 6

In the LangChain + Pinecone implementation, a Pinecone index is created with dimension 384 to match the Hugging Face all-MiniLM-L6-v2 embedding output.

- 7

A LangChain Pinecone hybrid search retriever combines Hugging Face dense embeddings with a BM25 (TF-IDF-based default) sparse encoder to answer queries from inserted text.