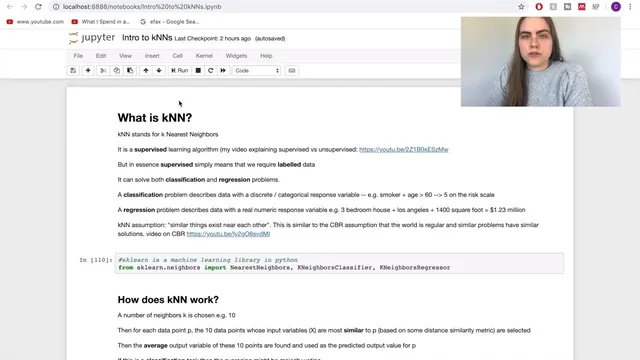

Introduction to kNN: k Nearest Neighbors Classification and Regression in Python Using scikit-learn

Based on Ciara Feely's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

kNN is a supervised, case-based reasoning approach that predicts by finding the k closest training points in feature space.

Briefing

k-Nearest Neighbors (kNN) is presented as a practical, case-based reasoning method: predictions come from the closest training examples in feature space, whose labels are combined by voting (classification) or by aggregation (regression). The core idea is that two data points with similar inputs should have similar outputs. To make a prediction, the algorithm measures the distance between a query point and every training point, selects the k nearest neighbors, and derives the output from those neighbors. In Python, scikit-learn provides ready-made implementations, KNeighborsClassifier and KNeighborsRegressor, so users can focus on applying kNN correctly rather than re-implementing the math.
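The measure, select, and combine steps described above can be sketched in plain Python. This is a minimal illustration rather than the video's code: the function names (`k_nearest`, `knn_classify`, `knn_regress`) and the toy data are hypothetical, and Euclidean distance is assumed.

```python
import math
from collections import Counter

def k_nearest(train_X, query, k):
    """Return indices of the k training points closest to query (Euclidean)."""
    distances = sorted((math.dist(x, query), i) for i, x in enumerate(train_X))
    return [i for _, i in distances[:k]]

def knn_classify(train_X, train_y, query, k):
    """Predict the most common label among the k nearest neighbors."""
    neighbors = k_nearest(train_X, query, k)
    votes = Counter(train_y[i] for i in neighbors)
    return votes.most_common(1)[0][0]

def knn_regress(train_X, train_y, query, k):
    """Predict the mean target of the k nearest neighbors."""
    neighbors = k_nearest(train_X, query, k)
    return sum(train_y[i] for i in neighbors) / k

# Toy data (made up for illustration): two well-separated clusters.
train_X = [(1.0, 1.0), (1.2, 0.8), (0.9, 1.1), (5.0, 5.0), (5.2, 4.9), (4.8, 5.1)]
labels = [0, 0, 0, 1, 1, 1]
targets = [10.0, 12.0, 11.0, 50.0, 52.0, 48.0]

predicted_label = knn_classify(train_X, labels, (1.1, 1.0), k=3)   # 0
predicted_value = knn_regress(train_X, targets, (5.0, 5.0), k=3)   # 50.0
```

Every prediction scans the full training set, which is why kNN does little work at training time but more at query time than most models.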

The walkthrough starts by framing kNN as a supervised learning approach, distinguishing classification from regression. Classification handles discrete outputs (for example, yes/no outcomes like whether a patient develops lung cancer), while regression targets continuous values (like housing prices). For classification, the transcript describes majority voting among the k nearest neighbors: with k=10, if 7 neighbors vote for class “1” and 3 vote for class “0,” the predicted class is “1.” For regression, it describes aggregating neighbor targets using the mean or median, with an option to weight neighbors by distance so closer points influence the prediction more.
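The effect of distance weighting can be seen on a tiny one-dimensional example. The sketch below uses KNeighborsRegressor with its `weights` parameter set to `"uniform"` and `"distance"`. The data points are made up, and only the mean-based aggregation is shown (a median would need a custom implementation).

```python
import numpy as np
from sklearn.neighbors import KNeighborsRegressor

# Made-up 1-D training data: the target is 10 times the feature.
X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = np.array([0.0, 10.0, 20.0, 30.0])
query = np.array([[0.9]])  # nearest neighbors are x=1.0 (dist 0.1) and x=0.0 (dist 0.9)

# Uniform weights: plain mean of the 2 nearest targets.
uniform = KNeighborsRegressor(n_neighbors=2, weights="uniform").fit(X, y)
# Distance weights: each neighbor is weighted by 1 / distance.
weighted = KNeighborsRegressor(n_neighbors=2, weights="distance").fit(X, y)

uniform_pred = uniform.predict(query)[0]    # (0 + 10) / 2 = 5.0
weighted_pred = weighted.predict(query)[0]  # pulled toward the closer neighbor: 9.0
```

With inverse-distance weights, the neighbor at distance 0.1 counts nine times as much as the one at distance 0.9, so the prediction moves from 5.0 to 9.0.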

A first example uses the Iris dataset to demonstrate classification. The features are continuous (sepal length, sepal width, petal length, petal width), and the labels assign each flower to one of three classes. Rather than applying kNN blindly, the transcript emphasizes proper evaluation so that overfitting does not produce misleading results. It explains the basic split into training and testing sets, introduces the idea of a validation set for tuning, and then moves to cross-validation, specifically k-fold cross-validation, as a more robust way to estimate performance. Using cross-validation with 5 folds, it computes metrics including mean squared error (MSE) and R² (with the note that higher R² is better). It then varies k across a range (1 to 50) and plots error versus k to choose a reasonable neighborhood size; in this Iris setup, k=10 emerges as a strong starting point.
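A sweep over k with 5-fold cross-validation on Iris might look like the sketch below. It is not the video's exact code: accuracy is used as the score here (an assumption, chosen because it is a common classification metric), and shuffling is enabled because the Iris rows are ordered by class.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import KFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

X, y = load_iris(return_X_y=True)

# Shuffle before splitting: the dataset is sorted by class, so unshuffled
# folds would each be dominated by a single species.
cv = KFold(n_splits=5, shuffle=True, random_state=0)

mean_accuracy = {}
for k in range(1, 51):
    model = KNeighborsClassifier(n_neighbors=k)
    scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    mean_accuracy[k] = scores.mean()

# Pick the k with the highest mean cross-validated accuracy.
best_k = max(mean_accuracy, key=mean_accuracy.get)
print(best_k, round(mean_accuracy[best_k], 3))
```

Plotting `mean_accuracy` (or 1 minus it, as an error rate) against k gives the error-versus-k curve described above. The best k depends on the folds, so it may differ from the value reported in the video.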

A second, more challenging example switches to regression with the Boston housing dataset. Here, the target is continuous (housing prices), and the transcript reports a much worse baseline: mean squared error is high and R² is low, indicating the model is underperforming. It again searches over k values, finding k=10 best among the tested options, but the performance remains inadequate. The key fix is feature scaling: because input columns differ widely in magnitude, standardizing features with StandardScaler inside a scikit-learn Pipeline improves results substantially. After scaling, the mean squared error drops and R² improves, while the best k remains roughly similar.
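The scaling effect can be reproduced with a small sketch. The Boston loader (`load_boston`) was removed from scikit-learn in version 1.2, so this example substitutes made-up synthetic data: one small-magnitude feature that drives the target and one large-magnitude feature that is irrelevant. The numbers it produces are illustrative only and are not the video's results.

```python
import numpy as np
from sklearn.model_selection import KFold, cross_val_score
from sklearn.neighbors import KNeighborsRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 300
informative = rng.uniform(0, 1, size=n)       # small scale, determines the target
irrelevant = rng.uniform(0, 1000, size=n)     # large scale, unrelated to the target
X = np.column_stack([informative, irrelevant])
y = 10 * informative + rng.normal(0, 0.1, size=n)

cv = KFold(n_splits=5, shuffle=True, random_state=0)

# Without scaling, distances are dominated by the large-magnitude column.
raw = KNeighborsRegressor(n_neighbors=10)
# With a Pipeline, the scaler is fit on each training fold only,
# so no information from the held-out fold leaks into preprocessing.
scaled = make_pipeline(StandardScaler(), KNeighborsRegressor(n_neighbors=10))

raw_r2 = cross_val_score(raw, X, y, cv=cv, scoring="r2").mean()
scaled_r2 = cross_val_score(scaled, X, y, cv=cv, scoring="r2").mean()
print(f"R2 without scaling: {raw_r2:.3f}, with scaling: {scaled_r2:.3f}")
```

For MSE, the same call works with `scoring="neg_mean_squared_error"`; scikit-learn reports the negated value so that higher is always better.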

Finally, the transcript highlights evaluation discipline: when comparing models, cross-validation splits should be consistent, using a fixed KFold configuration (e.g., random_state=0) so improvements aren’t artifacts of different data partitions. It closes by pointing to next steps beyond kNN tuning (feature selection, interaction/polynomial feature ideas, and residual analysis), suggesting that model quality should be judged not only by MSE or R² but also by how errors behave across predictions.
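A quick way to confirm that two experiments see the same partitions is to compare the test indices that KFold produces. The sketch below uses a made-up 10-row array. Note that `random_state` only has an effect when `shuffle=True`.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(20).reshape(10, 2)  # placeholder data: 10 rows, 2 columns

# Two splitters with the same configuration and seed.
cv_a = KFold(n_splits=5, shuffle=True, random_state=0)
cv_b = KFold(n_splits=5, shuffle=True, random_state=0)

splits_a = [test_idx.tolist() for _, test_idx in cv_a.split(X)]
splits_b = [test_idx.tolist() for _, test_idx in cv_b.split(X)]

# True: both experiments would evaluate on identical folds.
same_splits = splits_a == splits_b
```

Passing the same configured splitter as the `cv` argument to every model being compared keeps the folds identical across experiments.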

Cornell Notes

k-Nearest Neighbors (kNN) predicts outputs by looking at the k most similar training points in feature space. Similarity is measured with a distance metric (commonly Euclidean or Manhattan), and scikit-learn provides KNeighborsClassifier for classification (majority vote) and KNeighborsRegressor for regression (mean/median, optionally distance-weighted). Proper evaluation matters: the transcript uses training/testing splits and then k-fold cross-validation (5 folds) to reduce overfitting risk and get more reliable performance estimates. On Iris classification, kNN performs well with k around 10. On Boston housing regression, performance improves dramatically only after adding feature scaling via StandardScaler in a Pipeline, underscoring that kNN is sensitive to feature magnitudes.
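The two distance metrics named above differ in how they combine per-feature differences. A minimal sketch with two made-up points:

```python
import math

a = (1.0, 2.0)
b = (4.0, 6.0)

# Euclidean: straight-line distance, sqrt(3^2 + 4^2).
euclidean = math.dist(a, b)                        # 5.0
# Manhattan: sum of absolute per-feature differences, 3 + 4.
manhattan = sum(abs(p - q) for p, q in zip(a, b))  # 7.0
```

In scikit-learn, the metric is chosen with the `metric` parameter of the kNN estimators, for example `metric="manhattan"`.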

How does kNN turn “similar inputs” into a prediction for classification versus regression?

Why does evaluation strategy (train/test split vs validation vs cross-validation) matter for kNN?

What metrics are used to judge performance in the examples, and what direction is “better”?

Why did kNN struggle on Boston housing regression before scaling, even when k was tuned?

How does adding StandardScaler inside a Pipeline change the regression results?

What does it mean to compare models using the same cross-validation splits?

Review Questions

- In classification with kNN, what rule converts the k nearest neighbors into a single predicted label?

- Why can feature scaling be essential for kNN regression, and where should scaling be applied in scikit-learn workflows?

- When using k-fold cross-validation to compare kNN settings, what risk arises if the folds change between experiments?

Key Points

1. kNN is a supervised, case-based reasoning approach that predicts by finding the k closest training points in feature space.

2. Classification uses majority voting among the k nearest neighbors; regression aggregates neighbor target values (mean/median) and can use distance weighting.

3. Cross-validation (k-fold, such as 5 folds) provides a more reliable performance estimate than a single train/test split and helps detect overfitting.

4. kNN performance can hinge on the choice of k; sweeping k across a range and plotting error versus k helps select a starting value.

5. For regression on datasets with differently scaled features (like Boston housing), StandardScaler plus a Pipeline can dramatically improve MSE and R².

6. When comparing models, keep cross-validation splits consistent (e.g., fixed KFold random_state) so improvements reflect modeling changes, not different data partitions.

7. Beyond MSE and R², residual analysis is important to spot error patterns that metrics alone may hide.
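One way to start a residual analysis is to collect out-of-fold predictions with `cross_val_predict` and inspect the differences from the true targets. The sketch below uses made-up synthetic data. The correlation check at the end is just one hypothetical diagnostic, not a method taken from the video.

```python
import numpy as np
from sklearn.model_selection import KFold, cross_val_predict
from sklearn.neighbors import KNeighborsRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(200, 2))
y = X[:, 0] ** 2 + rng.normal(0, 1.0, size=200)  # made-up nonlinear target

cv = KFold(n_splits=5, shuffle=True, random_state=0)
model = make_pipeline(StandardScaler(), KNeighborsRegressor(n_neighbors=10))

# Each prediction comes from a model that never saw that row during fitting.
predictions = cross_val_predict(model, X, y, cv=cv)
residuals = y - predictions

# A strong correlation between predictions and residuals would suggest
# systematic error, e.g. under-predicting the largest targets.
residual_trend = np.corrcoef(predictions, residuals)[0, 1]
print(f"mean residual: {residuals.mean():.3f}, trend: {residual_trend:.3f}")
```

Plotting `residuals` against `predictions` (or against individual features) makes such patterns easier to see than a single summary number.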