Lecture 04: Data Management (FSDL 2022)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Treat dataset work—exploration, cleaning, augmentation, and labeling—as the primary path to performance gains, not just model architecture changes.

Briefing

Data management is the hidden driver of machine-learning performance: spending far more time on data than on models—especially on dataset quality, labeling, and repeatable preprocessing—often yields the biggest gains. The core message is practical: explore your data aggressively (roughly an order of magnitude more time than you’d spend on model tinkering), then improve outcomes by fixing, adding, or augmenting the training data rather than chasing architecture changes.

The lecture breaks data work into a pipeline of decisions, starting with where data comes from and how it lands near the GPU. Sources range from images and text files to logs and database records, but training typically requires copying data onto a local file system or fast storage close to the compute. The fundamentals are file systems, object storage, and databases. A file system treats data as unversioned files that can be overwritten or deleted, with performance varying dramatically—from slow spinning disks to fast NVMe SSDs. Object storage (e.g., S3) shifts the abstraction from “files” to “objects,” typically adding versioning and redundancy at the service level, trading some speed for durability and scalability. Databases handle structured metadata and relationships; binary payloads (like images or audio) should live in object storage, while databases store URLs or references to those binaries.

The talk emphasizes that storage choices should match access patterns. For analytics, data warehouses use OLAP-style processing: column-oriented layouts that compress well and speed up queries like “average comment length over the last 30 days.” For transactional workloads, OLTP systems are row-oriented. Data lakes sit alongside these systems for unstructured aggregation, often using ELT-style flows—extract and load first, transform later—while the industry trend moves toward unifying structured and unstructured data.

Once data is stored, the “language of data” is mostly SQL, with Python data frames (especially pandas) as the workhorse for code-based manipulation. When performance matters, the lecture points to accelerated alternatives: parallelized data-frame libraries and GPU-focused tools like RAPIDS.

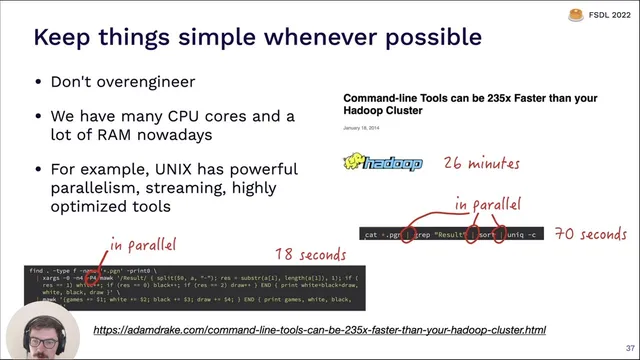

Operationally, data processing becomes a scheduling problem. A motivating example describes a nightly training job for a photo popularity predictor, where outputs depend on database queries, log-derived feature computation, and running classifiers. Workflow managers like Airflow can express these dependencies as DAGs, restart failed tasks, and distribute work across machines; Prefect and Dagster are presented as modern alternatives. The lecture also warns against over-engineering: sometimes a well-written parallel Unix pipeline beats a heavyweight distributed framework.

For training consistency and efficiency, feature stores help ensure that the same feature engineering logic used offline during training matches what’s served online during inference, while avoiding recomputation. The lecture then moves to concrete dataset sources (notably Hugging Face datasets and common formats like Parquet, plus image-text datasets and speech corpora) and to labeling strategies.

Labeling is treated as a spectrum: self-supervised learning can reduce or eliminate manual labels; data augmentation can sometimes substitute for labels; synthetic data can provide “free” ground truth; and user feedback can create a data flywheel. When labels are necessary, quality depends on clear rulebooks, careful quality assurance, and choosing labeling tools or services (Scale, Labelbox, Label Studio, and others). Finally, data versioning is framed as essential for reproducibility: unversioned data makes deployed models effectively unversioned, while snapshotting, git-style versioning with Git LFS, and DVC provide increasing levels of rigor.

The lecture closes by noting that privacy-preserving training—federated learning, differential privacy, and learning on encrypted data—remains an active research area without widely reliable off-the-shelf solutions. The overall takeaway is straightforward: treat data as a first-class engineering asset, and performance improvements will follow.

Cornell Notes

Machine-learning gains often come less from model tweaks and more from data work: deeper exploration, better dataset construction, and consistent preprocessing. Data should be stored and accessed according to workload—binary payloads in object storage, metadata in databases, and analytics in column-oriented warehouses or lakes for unstructured aggregation. After storage comes data manipulation (SQL and pandas, with accelerated options when needed) and reliable orchestration (DAG-based workflow tools) so dependent preprocessing steps run correctly at scale. For training efficiency and consistency, feature stores align offline training features with online inference. Labeling and data versioning complete the loop: use self-supervision and augmentation when possible, apply clear labeling rules with quality control when not, and version datasets so model performance can be reproduced and rolled back.

Why does the lecture treat data exploration as a performance lever, not a preliminary chore?

How should binary data, metadata, and relationships be stored for training?

What distinguishes OLAP/OLTP and why does that matter for choosing storage systems?

What role do workflow managers play in data preprocessing for training?

When is a feature store worth using?

How does the lecture recommend approaching labeling and data versioning together?

Review Questions

- What storage choice would you make for large binary files versus labels and how would you connect them during training?

- Describe how a DAG-based workflow manager helps ensure correctness in a multi-step dataset build for nightly training.

- What problems arise when training data and labels are not versioned, and what tools or approaches can mitigate them?

Key Points

- 1

Treat dataset work—exploration, cleaning, augmentation, and labeling—as the primary path to performance gains, not just model architecture changes.

- 2

Plan storage around access patterns: keep binary payloads in object storage and store references/metadata in a database.

- 3

Use SQL and data-frame workflows for structured data, and switch to accelerated options (parallel or GPU-based) when pandas becomes a bottleneck.

- 4

Make preprocessing repeatable with workflow orchestration (DAGs) so dependent steps run in the right order and failures are recoverable.

- 5

Use feature stores when offline training features must match online inference and when recomputation costs are high.

- 6

Adopt a labeling strategy that starts with self-supervision and augmentation, then adds manual labeling with strict rulebooks and quality assurance when necessary.

- 7

Version datasets (not just code) so model performance can be reproduced and rolled back as data changes.