Lecture 08: ML Teams and Project Management (FSDL 2022)

Based on The Full Stack's video on YouTube.

ML product success depends on team structure, hiring specificity, probabilistic planning, and product design for failure—not on model performance alone.

Briefing

Machine-learning product teams face a structural problem: ML adds uncertainty, scarce talent, and stakeholder misunderstanding on top of the usual challenges of building software products. The core takeaway is that success depends less on any single model technique and more on how roles are organized, how hiring is targeted, how timelines are managed under uncertainty, and how products are designed to match users’ expectations to what models can actually do in production.

The lecture breaks down the interdisciplinary staffing needed to ship ML systems. Common roles include:

- ML product managers: prioritize work with business, users, and stakeholders; produce design docs, wireframes, and work plans using tools like Jira and Notion.

- ML Ops/ML platform teams: build shared infrastructure and tooling to deploy and scale models, often integrating with AWS, Kafka, and vendor tooling.

- ML engineers: own the end-to-end lifecycle of a model in production (training, packaging, deployment, and ongoing maintenance), using tools such as TensorFlow and Docker.

- ML researchers: focus on trained models and prototypes that may not yet be production-critical, often delivering reports or code repos and using tools like Jupyter notebooks.

- Data scientists: a catch-all title that can mean analytics for business questions or overlap with ML research/engineering, depending on the organization.

Hiring is treated as a high-stakes design choice. The “unicorn ML engineer” job description—requiring PhDs, years of TensorFlow, publications, and large-scale distributed systems experience—rarely matches real candidates. Instead, the lecture recommends being specific about what matters for the role: for many ML engineering needs, software engineering strength is the primary filter, with enough ML background to learn and operate effectively. For ML researchers, it argues for prioritizing quality over quantity of publications (judging the creativity and applicability of one or two strong works rather than counting papers), and for looking for candidates with an independent sense of what problems matter—sometimes using adjacent-field backgrounds as a signal. It also emphasizes sourcing beyond standard channels: tracking first authors of promising papers, recruiting from high-quality re-implementations, and leveraging conferences.

Team structure is framed as a maturity curve with five archetypes. At the “nascent/ad hoc” stage, there is often low-hanging fruit for ML, but the work suffers from weak infrastructure, difficulty retaining talent, and limited leadership buy-in. In the “ML R&D” stage, ML sits in a research group that builds prototypes and makes long-term bets, but it can get stuck because of data access problems and weak translation into business value. “Embedded ML in product teams” improves feedback loops and business impact, but it can create resource and hiring friction and conflicts with software delivery norms. A “centralized ML function” boosts talent density, data/compute access, and tooling investment, but introduces handoff friction on the way to production. The end goal is an “ML-first organization,” where centralized expertise supports line-of-business teams that deliver quick wins.

Managing ML projects and expectations is especially difficult because progress is non-linear and timelines are probabilistic. The lecture recommends probabilistic project planning—assigning probabilities to task completion, running alternative approaches in parallel when prerequisites unlock multiple paths, and avoiding critical paths that assume research will succeed. It also stresses cultural alignment between research and engineering, frequent quick wins, and educating leadership on ML’s probabilistic nature and why accuracy metrics alone don’t communicate business outcomes.
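The planning idea above can be made concrete with a small calculation. The following is a minimal sketch, not the lecture's own method: the `Approach` type, the probability and duration estimates, and the assumption that approaches succeed or fail independently are all illustrative.

```python
import random
from dataclasses import dataclass


@dataclass
class Approach:
    name: str
    p_success: float  # team's estimated chance the approach works (an assumption)
    weeks: float      # estimated duration if the approach is pursued (an assumption)


def p_any_success(approaches):
    """Probability that at least one approach succeeds, assuming independence."""
    p_all_fail = 1.0
    for a in approaches:
        p_all_fail *= 1.0 - a.p_success
    return 1.0 - p_all_fail


def simulate_parallel(approaches, trials=10_000, seed=0):
    """Monte Carlo estimate for running every approach in parallel.

    Returns (success_rate, mean_weeks_to_first_success), where the mean is
    taken only over trials in which at least one approach succeeded.
    """
    rng = random.Random(seed)
    finish_times = []
    for _ in range(trials):
        # Durations of the approaches that happened to succeed in this trial.
        wins = [a.weeks for a in approaches if rng.random() < a.p_success]
        if wins:
            finish_times.append(min(wins))
    success_rate = len(finish_times) / trials
    mean_weeks = (
        sum(finish_times) / len(finish_times) if finish_times else float("inf")
    )
    return success_rate, mean_weeks


if __name__ == "__main__":
    plan = [Approach("fine-tune model", 0.5, 4), Approach("rules baseline", 0.5, 2)]
    print(p_any_success(plan))
    print(simulate_parallel(plan))
```

Under these assumptions, two independent approaches that are each estimated at 50% give a 75% chance that at least one works. That is the argument for exploring alternatives in parallel instead of placing a single research bet on the critical path.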

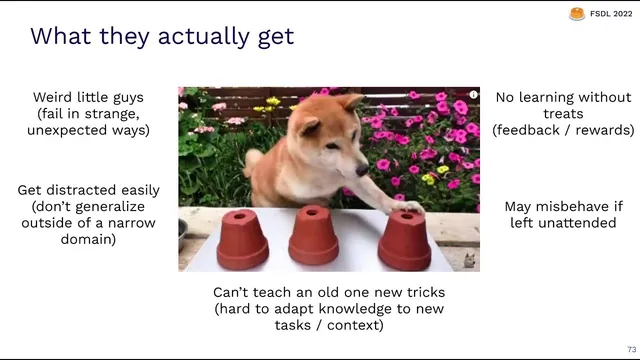

Finally, product design must bridge the gap between user mental models and model reality. The lecture uses a “trained dog” analogy: ML systems can solve hard puzzles but fail in strange ways, generalize narrowly, and need feedback and guardrails. Good ML product design therefore explains benefits and limitations, supports human-in-the-loop control, uses confidence-based fallbacks, and builds feedback loops—ranging from implicit behavioral signals to explicit thumbs up/down and, when feasible, user correction inside the workflow—to continuously improve the system after deployment.
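A confidence-based fallback of the kind described above might look like the following minimal sketch. The function name `route_prediction`, the three action names, and the threshold values are hypothetical; in practice thresholds would be tuned against the cost of errors for a given product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    action: str            # "auto", "suggest", or "human_review"
    label: Optional[str]   # model output shown to the user, if any
    confidence: float


def route_prediction(label, confidence, auto_threshold=0.9, suggest_threshold=0.6):
    """Pick how much autonomy the model gets based on its confidence score.

    Thresholds are illustrative defaults, not recommended values.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if confidence >= auto_threshold:
        # High confidence: act automatically.
        return Decision("auto", label, confidence)
    if confidence >= suggest_threshold:
        # Medium confidence: show a suggestion the user can accept or reject.
        return Decision("suggest", label, confidence)
    # Low confidence: hide the prediction and hand off to a person.
    return Decision("human_review", None, confidence)


if __name__ == "__main__":
    for score in (0.95, 0.7, 0.3):
        print(route_prediction("spam", score))
```

The middle tier is where human-in-the-loop control lives: the user stays in charge of the final decision, and their accept or reject action doubles as a feedback signal.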

Cornell Notes

Machine-learning product success hinges on organization and process, not just model quality. The lecture maps out roles (ML PM, ML Ops/platform, ML engineers, ML researchers, data scientists), argues for targeted hiring over “unicorn” profiles, and shows how team structure evolves from ad hoc ML to an ML-first organization. ML projects demand probabilistic planning because progress is non-linear and research-like work can fail; timelines and success criteria must reflect that uncertainty. On the product side, users often expect superhuman intelligence, but ML behaves more like a trained system with failure modes—so products must add guardrails, human-in-the-loop options, and feedback loops that improve models using real user signals.

How do ML roles differ in what they produce and what they own?

Why is the “unicorn ML engineer” hiring description often the wrong approach?

What distinguishes task ML engineers from platform ML engineers?

How should ML project planning differ from traditional software planning?

What are the main organizational archetypes for ML teams, and what trade-offs do they create?

How should ML product design bridge the gap between user expectations and model reality?

Review Questions

- Which hiring signals does the lecture recommend prioritizing for ML researchers, and why does it caution against counting publications?

- How does probabilistic project planning change how teams decide what to do next when ML progress stalls?

- Compare embedded ML teams versus centralized ML functions: what friction shifts between research, engineering, and production in each model?

Key Points

1. ML product success depends on team structure, hiring specificity, probabilistic planning, and product design for failure, not on model performance alone.

2. ML engineers typically own the full production lifecycle (training, packaging, deployment, maintenance), while ML Ops/platform teams focus on shared infrastructure and tooling.

3. Avoid “unicorn” ML engineer job descriptions; hire for the dominant practical skill (often software engineering for deployment-heavy roles) and require only the ML basics needed to succeed.

4. ML project timelines should be treated as uncertain; probabilistic planning and parallel exploration of alternatives help manage non-linear progress and research-like failure rates.

5. Organizational maturity matters: nascent, R&D, embedded, centralized, and ML-first structures each trade off data access, talent density, and production handoff friction.

6. ML product design must match user expectations to model reality using guardrails, human-in-the-loop options, confidence-based fallbacks, and clear communication of limitations.

7. Feedback loops (implicit, direct implicit, explicit, and user correction when feasible) are the mechanism for improving ML systems after deployment.
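To illustrate the last key point, here is a minimal sketch of how logged feedback could be turned into training labels and a simple product metric. The `FeedbackEvent` schema, the `kind` values, and the rule that user corrections override thumbs-up signals are assumptions made for this example, not something the lecture prescribes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedbackEvent:
    example_id: str
    predicted: str                         # what the model output
    kind: str                              # "implicit", "explicit", or "correction"
    accepted: Optional[bool] = None        # thumbs up/down for explicit feedback
    corrected_label: Optional[str] = None  # user-supplied fix for corrections


def to_training_labels(events):
    """Build {example_id: label} from feedback.

    Corrections always win; a thumbs-up confirms the prediction only when no
    label is already recorded for that example; implicit events are skipped
    here because they are too noisy to use as labels directly.
    """
    labels = {}
    for e in events:
        if e.kind == "correction" and e.corrected_label is not None:
            labels[e.example_id] = e.corrected_label
        elif e.kind == "explicit" and e.accepted and e.example_id not in labels:
            labels[e.example_id] = e.predicted
    return labels


def acceptance_rate(events):
    """Share of explicit feedback events that were thumbs-up, or None if none exist."""
    explicit = [e for e in events if e.kind == "explicit" and e.accepted is not None]
    if not explicit:
        return None
    return sum(1 for e in explicit if e.accepted) / len(explicit)


if __name__ == "__main__":
    log = [
        FeedbackEvent("a", "cat", "explicit", accepted=True),
        FeedbackEvent("b", "dog", "explicit", accepted=False),
        FeedbackEvent("b", "dog", "correction", corrected_label="fox"),
        FeedbackEvent("c", "cat", "implicit"),
    ]
    print(to_training_labels(log))
    print(acceptance_rate(log))
```

Keeping the raw events separate from the derived labels lets a team change the labeling rule later, for example once there is enough data to trust implicit signals.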