Lecture 09: Ethics (FSDL 2022)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Ethical disputes in tech often come from optimizing measurable proxies that don’t track the real objective, creating alignment failures.

Briefing

Ethics in tech and machine learning comes down to managing three recurring tensions—alignment failures, stakeholder trade-offs, and the need for humility—while staying grounded in real-world cases rather than abstract thought experiments. The lecture frames ethics as a practical vocabulary for describing what people find acceptable or unacceptable in specific deployments, then uses that lens to show how “good intentions” can still produce harmful outcomes when systems optimize the wrong targets, when metrics can’t capture what truly matters, and when engineers overestimate what they can safely control.

A central theme is the “proxy problem”: teams often optimize measurable surrogates (like training loss, accuracy, or short-term engagement) that only loosely correlate with the real objective (like user value, long-term welfare, or safety). That mismatch can produce unintended harm even when the model is performing well on the metric it was trained to maximize. The lecture connects this to familiar ML patterns—training/validation gaps and downstream utility losses—and to non-ML examples where planners chose what was easy to measure instead of what was important.
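A toy illustration of the proxy problem. It assumes a hypothetical feed-ranking choice where `clicks` is the measurable proxy and `user_value` stands in for the real objective; the names and numbers are invented. The only point is that maximizing the proxy can select a different option than maximizing the goal.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    clicks: float      # measurable proxy (short-term engagement)
    user_value: float  # the real objective, usually not directly observable


# Hypothetical numbers chosen only to illustrate the mismatch.
candidates = [
    Candidate("clickbait_feed", clicks=0.9, user_value=0.2),
    Candidate("balanced_feed", clicks=0.6, user_value=0.7),
    Candidate("curated_feed", clicks=0.4, user_value=0.8),
]


def best_by(metric: str, items: list) -> Candidate:
    """Pick the candidate that maximizes the named attribute."""
    return max(items, key=lambda c: getattr(c, metric))


proxy_choice = best_by("clicks", candidates)    # what the optimizer sees
true_choice = best_by("user_value", candidates)  # what we actually wanted
regret = true_choice.user_value - proxy_choice.user_value

print(proxy_choice.name, true_choice.name, round(regret, 2))
```

A model can score perfectly on `clicks` and still leave most of the real value on the table; in practice the gap is harder to see because `user_value` is rarely observed at all.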

The second tension is trade-offs among stakeholders. Some ethical disputes arise when what one group wants conflicts with what another group wants, including people who can’t easily provide input (like future generations or those affected indirectly). The asteroid-and-orphans hypothetical is used to illustrate why these decisions are hard: engineers may be able to compute Pareto fronts for technical metrics, but they’re less equipped to quantify human rights, utility, or acceptable losses across time. That gap in expertise is why humility is presented as the “appropriate response”—a mindset that pushes teams to seek help, consult domain experts, and treat their own assumptions as provisional.
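A minimal sketch of the Pareto-front computation mentioned above, for hypothetical models scored on accuracy (higher is better) and a disparity measure (lower is better). The model names and values are made up for illustration.

```python
from typing import List, Tuple

# (name, accuracy to maximize, disparity to minimize); hypothetical values.
Model = Tuple[str, float, float]


def dominates(a: Model, b: Model) -> bool:
    """True if a is no worse than b on both metrics and strictly better on one."""
    _, acc_a, gap_a = a
    _, acc_b, gap_b = b
    no_worse = acc_a >= acc_b and gap_a <= gap_b
    strictly_better = acc_a > acc_b or gap_a < gap_b
    return no_worse and strictly_better


def pareto_front(models: List[Model]) -> List[Model]:
    """Keep only models that no other model dominates."""
    return [m for m in models if not any(dominates(o, m) for o in models)]


models = [
    ("A", 0.91, 0.12),
    ("B", 0.89, 0.05),
    ("C", 0.86, 0.02),
    ("D", 0.85, 0.06),  # dominated by B: lower accuracy and higher disparity
]
front = pareto_front(models)
print([name for name, _, _ in front])
```

The code can discard D, which is worse on both metrics, but it cannot choose among A, B, and C. Picking a point on the front means deciding how much accuracy a reduction in disparity is worth, which is the kind of value judgment the lecture says engineers are poorly equipped to make alone.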

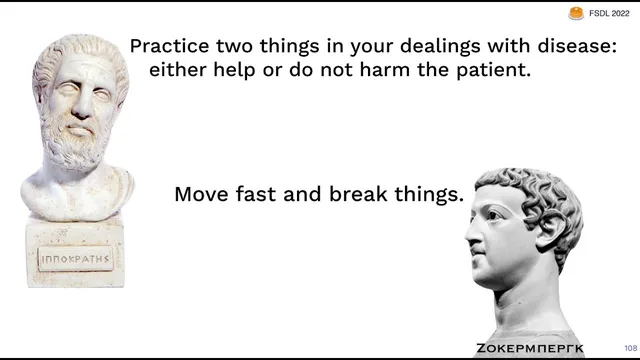

The lecture then applies these ideas to concrete tech controversies. Public trust in large tech companies has eroded amid scandals involving dealings with governments, disinformation, and manipulative content systems. The lecture argues that the industry should borrow from the professional ethics cultures of other fields, especially engineering traditions like Canada’s engineering oath, and the human-subjects research framework that was shaped by historical abuses and codified in documents such as the Declaration of Helsinki and the U.S. National Research Act of 1974.

It also highlights ethical concerns that scale with business incentives: carbon emissions from compute, deceptive “dark patterns,” and growth hacking tactics that boost short-term metrics while degrading user goodwill. In machine learning specifically, ethics becomes more urgent because models touch human lives more directly, are wrong in probabilistic ways, and involve many stakeholders. Four recurring questions anchor the discussion: whether a model is fair, whether it’s accountable, who owns the data, and whether the system should be built at all.
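A back-of-the-envelope sketch of how the carbon cost of compute is commonly estimated: energy drawn by the hardware, scaled by the data center's power usage effectiveness (PUE), multiplied by the carbon intensity of the local grid. The lecture does not prescribe a formula, and every input below is an assumed value for illustration, not a measurement.

```python
def training_emissions_kg(
    num_gpus: int,
    gpu_power_kw: float,
    hours: float,
    pue: float,
    grid_kg_per_kwh: float,
) -> float:
    """Rough CO2e estimate in kg: facility energy (kWh) times grid carbon intensity."""
    # PUE scales the power drawn by the hardware up to total facility power
    # (cooling, networking, etc.).
    energy_kwh = num_gpus * gpu_power_kw * hours * pue
    return energy_kwh * grid_kg_per_kwh


# Hypothetical run: 8 GPUs averaging 0.3 kW for 24 h, PUE 1.5,
# on a grid emitting 0.4 kg CO2e per kWh.
estimate = training_emissions_kg(8, 0.3, 24, 1.5, 0.4)
print(f"{estimate:.1f} kg CO2e")
```

Because the estimate is linear in every input, the grid-intensity term is often the easiest lever: running the same job on a grid with half the carbon intensity halves the estimate.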

The COMPAS criminal-justice risk-scoring example illustrates how fairness definitions can conflict and how equalizing one metric can still increase harm for a subgroup. The lecture argues that sometimes the right ethical move is not “fix the model,” but “question whether the model should exist,” especially when systems are proprietary, uninterpretable, and trained on proxies that reflect biased institutions.
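A small numeric sketch of why fairness definitions conflict, using hypothetical confusion-matrix counts for two groups with different base rates (these are not COMPAS data). Both groups get the same positive predictive value (predictive parity, a threshold-level cousin of the calibration argument) and the same false negative rate, yet their false positive rates differ. The `implied_fpr` helper encodes the identity from Chouldechova's 2017 analysis, FPR = p/(1-p) * (1-PPV)/PPV * (1-FNR), where p is the group's base rate.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # flagged high risk, did reoffend
    fp: int  # flagged high risk, did not reoffend
    fn: int  # flagged low risk, did reoffend
    tn: int  # flagged low risk, did not reoffend

    @property
    def base_rate(self) -> float:
        return (self.tp + self.fn) / (self.tp + self.fp + self.fn + self.tn)

    @property
    def ppv(self) -> float:
        return self.tp / (self.tp + self.fp)

    @property
    def fpr(self) -> float:
        return self.fp / (self.fp + self.tn)

    @property
    def fnr(self) -> float:
        return self.fn / (self.fn + self.tp)


def implied_fpr(base_rate: float, ppv: float, fnr: float) -> float:
    """FPR forced by a group's base rate, PPV, and FNR."""
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)


# Hypothetical counts: 100 people per group, base rates 0.5 and 0.2.
groups = {
    "group_1": Confusion(tp=40, fp=10, fn=10, tn=40),
    "group_2": Confusion(tp=16, fp=4, fn=4, tn=76),
}

for name, c in groups.items():
    print(
        f"{name}: base={c.base_rate:.2f} ppv={c.ppv:.2f} "
        f"fnr={c.fnr:.2f} fpr={c.fpr:.2f} "
        f"implied_fpr={implied_fpr(c.base_rate, c.ppv, c.fnr):.2f}"
    )
```

Outside degenerate cases such as a perfect classifier, equal PPV and equal FNR with unequal base rates force unequal FPRs. Here the higher-base-rate group is wrongly flagged four times as often (0.20 vs 0.05), the same shape of disparity reported for COMPAS.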

Finally, the lecture extends ethics to AI’s frontier: hype and capability overclaims (“AI snake oil”) can mislead users and trigger backlash, while longer-term risks include self-improving systems and alignment failures framed through Bostrom’s “astronomical waste” argument and the paperclip maximizer thought experiment. The closing message is not only about avoiding harm: it points to medicine and responsible ML practices (such as auditing, failure-mode analysis, and clinical-trial-style standards) as evidence that ethics can be operationalized, and that ML tools can also reduce suffering and expand access to benefits when built with care.
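A minimal sketch of sliced failure-mode analysis, one common form of the auditing the lecture points to: compute the error rate per subgroup and flag any slice that is meaningfully worse than the overall rate. The slice labels (`clinic_a`, `clinic_b`), the records, and the 0.05 tolerance are all hypothetical; a real audit would also account for slice sample sizes and confidence intervals.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# (slice label, true label, predicted label); hypothetical data.
Record = Tuple[str, int, int]


def error_rate_by_slice(records: Iterable[Record]) -> Dict[str, float]:
    """Fraction of misclassified records within each slice."""
    totals: Dict[str, int] = defaultdict(int)
    errors: Dict[str, int] = defaultdict(int)
    for slice_name, y_true, y_pred in records:
        totals[slice_name] += 1
        errors[slice_name] += int(y_true != y_pred)
    return {s: errors[s] / totals[s] for s in totals}


def flag_failure_modes(records: List[Record], tolerance: float = 0.05) -> List[str]:
    """Slices whose error rate exceeds the overall error rate by more than `tolerance`."""
    overall = sum(y != p for _, y, p in records) / len(records)
    per_slice = error_rate_by_slice(records)
    return sorted(s for s, rate in per_slice.items() if rate > overall + tolerance)


records: List[Record] = (
    [("clinic_a", 1, 1)] * 45 + [("clinic_a", 1, 0)] * 5
    + [("clinic_b", 0, 0)] * 35 + [("clinic_b", 0, 1)] * 15
)
print(error_rate_by_slice(records))
print(flag_failure_modes(records))
```

An aggregate error rate of 0.2 hides that one slice fails three times as often as the other; surfacing that gap before deployment is the kind of check clinical-trial-style standards would require.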

Cornell Notes

The lecture argues that tech ethics is best handled through concrete cases and three recurring tensions: alignment failures (optimizing proxies that don’t match real goals), stakeholder trade-offs (including harms to people who can’t easily consent), and humility (recognizing limits and seeking expertise). It uses examples from automated weapons, criminal-justice risk scoring (COMPAS), dark patterns, carbon emissions, and data governance to show how “ethical” outcomes can fail even when teams start with good intentions. In machine learning, ethics intensifies because models are probabilistic, touch human lives directly, and involve many stakeholders. The COMPAS case illustrates how fairness metrics can be incompatible and how equalizing one measure can still increase false positives for Black defendants. The lecture concludes that ethics should also be operational, through accountability, auditing, and user feedback, rather than purely philosophical.

What is the “proxy problem,” and why does it matter for ethical outcomes in machine learning?

Why do fairness disputes persist even when teams try to “equalize bias” from the start?

What does humility mean in this ethics framework, and how should it change engineering behavior?

How do dark patterns and growth hacking create ethical risk even when they boost short-term metrics?

Why does the lecture argue that sometimes the ethical answer is “don’t build it,” not “fix it”?

What lessons does medicine offer to machine learning ethics?

Review Questions

- Which ethical tensions—alignment, trade-offs, and humility—show up in the proxy problem and how would you detect them in a new ML product?

- In the COMPAS example, how can calibration across groups still produce unequal harm, and what does that imply about choosing fairness metrics?

- What does “accountability” mean for ML systems when interpretability methods are unreliable or easily fooled?

Key Points

1. Ethical disputes in tech often come from optimizing measurable proxies that don’t track the real objective, creating alignment failures.

2. Stakeholder trade-offs are unavoidable in ethical decisions, but engineers often lack the expertise to quantify human values and acceptable losses.

3. Humility should be treated as an engineering requirement: seek domain experts, revisit assumptions, and incorporate user feedback rather than assuming correctness.

4. Public trust declines when companies repeatedly deploy manipulative or deceptive practices, so professional ethics needs to be treated as part of engineering culture.

5. In machine learning, fairness is not a single target; different fairness definitions can conflict, so “equalizing one metric” may still increase harm for a subgroup.

6. Some high-stakes ML systems should be questioned at the “should this be built at all?” stage, especially when targets are biased proxies and systems are proprietary or non-accountable.

7. Medicine offers concrete models for responsible ML through clinical-trial standards, auditing frameworks, and systematic failure-mode analysis.