Reflective–Reflective HOC Using SmartPLS4: Reliability, Validity, and Hypothesis Testing

Based on Research With Fawad's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Reflective–reflective higher-order constructs in SmartPLS4 can be handled cleanly by validating the lower-order dimensions first, then treating those validated dimensions as “items” for the higher-order construct, and only after that running hypothesis tests. The practical payoff is that reliability, convergent validity, and discriminant validity are checked at both levels, so any mediation or structural claims rest on demonstrated measurement quality rather than assumptions.

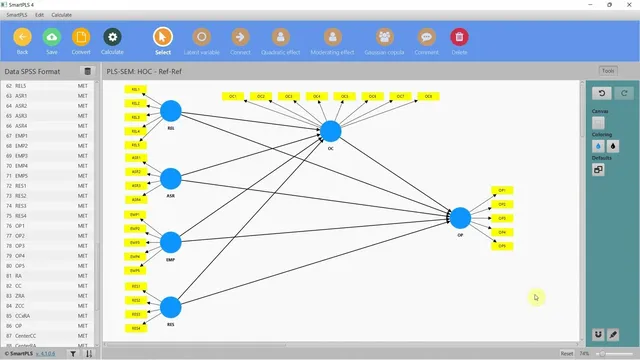

The workflow starts with a model in which the independent variable is a higher-order construct built from multiple reflective dimensions. In the example, “Internal Service Quality” is measured through four reflective dimensions (reliability, assurance, empathy, and responsiveness) and influences “Organizational Performance,” with “Organizational Commitment” (or collaborative culture) acting as a mediator. Instead of combining the four dimensions into a single block immediately, each dimension is first modeled as its own lower-order construct and validated on its own.
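The stage-one model can be written down as plain data to make its structure explicit. This is only an illustrative sketch: SmartPLS4 is configured through its graphical interface, the item names (REL1, OC1, and so on) and the three-items-per-construct layout are hypothetical, and the structural links from each dimension to the mediator and the outcome are assumed from the later step in which the dimensions are removed from those links.

```python
# Illustrative stage-one specification. Item names and the 3-items-per-construct
# layout are hypothetical; the structural links are assumed, not taken from the video.
DIMENSIONS = ["Reliability", "Assurance", "Empathy", "Responsiveness"]
MEDIATOR = "Organizational Commitment"
OUTCOME = "Organizational Performance"

# Measurement model: each lower-order dimension has its own block of items.
measurement = {dim: [f"{dim[:3].upper()}{i}" for i in range(1, 4)] for dim in DIMENSIONS}
measurement[MEDIATOR] = ["OC1", "OC2", "OC3"]
measurement[OUTCOME] = ["OP1", "OP2", "OP3"]

# Structural model (assumed): each dimension -> mediator and outcome; mediator -> outcome.
structural = (
    [(dim, MEDIATOR) for dim in DIMENSIONS]
    + [(dim, OUTCOME) for dim in DIMENSIONS]
    + [(MEDIATOR, OUTCOME)]
)

def reflective_arrows(spec):
    """Reflective measurement: each arrow runs from a construct to one of its items."""
    return [(construct, item) for construct, items in spec.items() for item in items]

def items_are_disjoint(spec):
    """True when no item is assigned to more than one construct."""
    all_items = [item for items in spec.values() for item in items]
    return len(all_items) == len(set(all_items))

print(len(reflective_arrows(measurement)), "measurement arrows")  # 18
print(len(structural), "structural paths")                         # 9
print("items disjoint:", items_are_disjoint(measurement))          # True
```

Keeping every item in exactly one block is what lets each dimension be validated on its own before any higher-order structure is added.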

After the lower-order constructs are specified, the next step is to run the PLS algorithm and inspect outer loadings, reliability, and validity. Items are retained rather than deleted when reliability and convergent validity are acceptable; in the example, reliability (e.g., Cronbach’s alpha or composite reliability) is above 0.70 and convergent validity (average variance extracted, AVE) is above 0.50. Discriminant validity is also checked: values slightly below 0.90 (such as HTMT ratios) are treated as acceptable because the constructs being compared are dimensions of the same higher-order construct and are expected to be closely related. Once all four lower-order dimensions meet these criteria, the analysis moves up one level.
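The reliability and convergent-validity thresholds can be checked by hand from standardized outer loadings and raw item scores. The sketch below uses the standard formulas for composite reliability (rho_c), average variance extracted (AVE), and Cronbach’s alpha; the function names and the loadings in the usage lines are made up for illustration and are not the video’s results. SmartPLS4 reports these values directly, so this is only a way to see what the numbers mean.

```python
import numpy as np

def composite_reliability(loadings):
    """rho_c = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    lam = np.asarray(loadings, dtype=float)
    explained = lam.sum() ** 2
    error = (1.0 - lam ** 2).sum()  # error variance of each standardized indicator
    return float(explained / (explained + error))

def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized outer loadings."""
    lam = np.asarray(loadings, dtype=float)
    return float(np.mean(lam ** 2))

def cronbach_alpha(items):
    """Cronbach's alpha from an (n_respondents, k_items) matrix of item scores."""
    x = np.asarray(items, dtype=float)
    k = x.shape[1]
    sum_item_var = x.var(axis=0, ddof=1).sum()
    total_var = x.sum(axis=1).var(ddof=1)
    return float(k / (k - 1) * (1.0 - sum_item_var / total_var))

def meets_thresholds(loadings, rel_min=0.70, ave_min=0.50):
    """True when rho_c > 0.70 and AVE > 0.50, the cut-offs used in the example."""
    return (composite_reliability(loadings) > rel_min
            and average_variance_extracted(loadings) > ave_min)

# Made-up standardized loadings for one dimension (e.g., "reliability").
loadings = [0.82, 0.79, 0.85, 0.77]
print(round(composite_reliability(loadings), 3))        # 0.883
print(round(average_variance_extracted(loadings), 3))   # 0.653
print(meets_thresholds(loadings))                       # True
```

Because both formulas are driven by the loadings, a single weak item pulls AVE down faster than rho_c, which is why an item is only dropped when the construct-level criteria actually fail.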

To validate the higher-order construct, SmartPLS4 is used in a two-stage fashion: the latent variable scores of the validated lower-order constructs are exported, a new (duplicated) model is created, and the measurement model is re-specified. The four lower-order constructs are removed from the structural links and reintroduced as the indicators of the higher-order construct “Internal Service Quality.” Because the specification is reflective–reflective, the arrows point from the higher-order construct to its four dimensions, mirroring how each dimension points to its own items in the first stage. The PLS algorithm is then run again.
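In the score-export step described above, each validated dimension is collapsed into a single score column, and those columns become the indicators of the higher-order construct. The sketch below illustrates that data flow under loud simplifying assumptions: SmartPLS4 exports PLS-weighted latent variable scores, whereas here each score is a unit-weighted mean of standardized items, and the “loading” of each dimension on Internal Service Quality is approximated by its correlation with a unit-weighted composite rather than estimated by the PLS algorithm. Function names, item counts, and the simulated data are hypothetical.

```python
import numpy as np

def standardize(x):
    """Center and scale columns (or a single vector) to mean 0, SD 1."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)

def unit_weighted_score(items):
    """Stand-in for an exported latent variable score: mean of standardized items."""
    return standardize(standardize(items).mean(axis=1))

def stage_two_indicators(item_blocks):
    """Stage one -> stage two: one score column per lower-order dimension."""
    return {name: unit_weighted_score(items) for name, items in item_blocks.items()}

def hoc_loading_proxy(dim_scores):
    """Correlation of each dimension score with a unit-weighted higher-order composite.

    Reflective-reflective: the higher-order construct points to each dimension score,
    so each score should correlate strongly with the composite.
    """
    names = list(dim_scores)
    scores = np.column_stack([dim_scores[n] for n in names])
    hoc = unit_weighted_score(scores)
    return {n: float(np.corrcoef(scores[:, i], hoc)[0, 1]) for i, n in enumerate(names)}

# Simulated data: one higher-order factor driving four dimensions, 3 items each.
rng = np.random.default_rng(42)
n = 300
isq = rng.normal(size=n)
blocks = {}
for name in ["Reliability", "Assurance", "Empathy", "Responsiveness"]:
    dim = 0.8 * isq + 0.6 * rng.normal(size=n)
    blocks[name] = np.column_stack([0.8 * dim + 0.6 * rng.normal(size=n) for _ in range(3)])

dim_scores = stage_two_indicators(blocks)
for name, loading in hoc_loading_proxy(dim_scores).items():
    print(f"{name}: {loading:.2f}")
```

The key design point is that the stage-two dataset has one column per dimension, so the higher-order construct is measured by four indicators regardless of how many items each dimension had.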

At this stage, the higher-order construct’s measurement quality is assessed with the same reliability and validity criteria. Because the lower-order constructs were already validated, reporting focuses on the higher-order construct’s reliability, convergent validity, and discriminant validity rather than re-listing every lower-order result.
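Discriminant validity in PLS-SEM is commonly judged with the heterotrait-monotrait ratio (HTMT), and 0.90 is the commonly cited HTMT cut-off for conceptually similar constructs. The sketch below computes HTMT from an item correlation matrix; at the higher-order level, the four dimension scores would serve as the items of Internal Service Quality. The function name, the index-based block layout, and the toy correlation matrix are assumptions for illustration, and the sketch assumes all items are positively coded.

```python
from itertools import combinations
import numpy as np

def htmt(corr, block_a, block_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr    : item correlation matrix (numpy array or nested lists)
    block_a : indices of construct A's items (at least 2)
    block_b : indices of construct B's items (at least 2)
    """
    r = np.asarray(corr, dtype=float)
    # Mean correlation between items of different constructs (heterotrait-heteromethod).
    hetero = np.mean([r[i, j] for i in block_a for j in block_b])
    # Mean correlation among items of the same construct (monotrait-heteromethod).
    mono_a = np.mean([r[i, j] for i, j in combinations(block_a, 2)])
    mono_b = np.mean([r[i, j] for i, j in combinations(block_b, 2)])
    return float(hetero / np.sqrt(mono_a * mono_b))

# Toy correlation matrix: items 0-1 measure construct A, items 2-3 measure construct B.
R = [
    [1.0, 0.6, 0.3, 0.3],
    [0.6, 1.0, 0.3, 0.3],
    [0.3, 0.3, 1.0, 0.5],
    [0.3, 0.3, 0.5, 1.0],
]
value = htmt(R, [0, 1], [2, 3])
print(f"HTMT = {value:.3f}, below 0.90: {value < 0.90}")
```

With real data, `R` would come from `np.corrcoef(data, rowvar=False)` on the item (or dimension-score) columns.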

With measurement quality confirmed at both levels, hypothesis testing proceeds via bootstrapping. The example uses 10,000 bootstrap samples with bias-corrected confidence intervals and a one-tailed test, since the hypotheses specify the direction of each relationship. Path coefficients are checked for significance, and mediation is evaluated through the specific indirect effects. Significant path coefficients together with significant indirect effects indicate that the hypothesized relationships, including mediation, are supported. The result is a step-by-step, measurement-first approach for reflective–reflective higher-order constructs in SmartPLS4 that culminates in hypothesis testing grounded in validated measures.
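The bootstrap logic for a mediated path can be sketched as follows. This is a simplified stand-in, not what SmartPLS4 does internally: SmartPLS4 re-estimates the full PLS model on each resample, whereas this sketch regresses standardized construct scores with ordinary least squares. The interval is a plain bias-corrected percentile interval (no acceleration term), and using `alpha=0.05` while examining only the lower bound corresponds to a one-tailed test at the 5% level for a positive hypothesized effect. All function names and the simulated data are hypothetical.

```python
from statistics import NormalDist
import numpy as np

def _z(v):
    """Standardize a 1-D array."""
    return (v - v.mean()) / v.std(ddof=1)

def paths(x, m, y):
    """Standardized paths: a (X -> M), b (M -> Y | X), c' (X -> Y | M)."""
    x, m, y = _z(x), _z(m), _z(y)
    a = np.linalg.lstsq(x[:, None], m, rcond=None)[0][0]
    b, c_prime = np.linalg.lstsq(np.column_stack([m, x]), y, rcond=None)[0]
    return a, b, c_prime

def bootstrap_indirect(x, m, y, n_boot=10_000, seed=0):
    """Point estimate of the indirect effect a*b plus its bootstrap distribution."""
    rng = np.random.default_rng(seed)
    n = len(x)
    a, b, _ = paths(x, m, y)
    boot = np.empty(n_boot)
    for k in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample respondents with replacement
        ak, bk, _ = paths(x[idx], m[idx], y[idx])
        boot[k] = ak * bk
    return a * b, boot

def bias_corrected_bounds(boot, estimate, alpha=0.05):
    """Bias-corrected percentile bounds at alpha and 1 - alpha."""
    nd = NormalDist()
    share_below = float(np.mean(boot < estimate))
    share_below = min(max(share_below, 1e-6), 1 - 1e-6)  # keep inv_cdf finite
    z0 = nd.inv_cdf(share_below)  # bias-correction constant
    lower_q = nd.cdf(2 * z0 + nd.inv_cdf(alpha))
    upper_q = nd.cdf(2 * z0 + nd.inv_cdf(1 - alpha))
    return float(np.quantile(boot, lower_q)), float(np.quantile(boot, upper_q))

# Simulated construct scores: X -> M -> Y plus a direct X -> Y path.
rng = np.random.default_rng(7)
n = 200
x = rng.normal(size=n)
m = 0.5 * x + rng.normal(size=n)
y = 0.4 * m + 0.2 * x + rng.normal(size=n)

est, boot = bootstrap_indirect(x, m, y, n_boot=10_000)
lo, hi = bias_corrected_bounds(boot, est, alpha=0.05)
print(f"indirect = {est:.3f}, BC 5th percentile = {lo:.3f}, BC 95th = {hi:.3f}")
print("one-tailed mediation supported:", lo > 0)
```

The same resampling loop yields bootstrap distributions for `a`, `b`, and `c'` as well; the indirect effect is tested on the product because its sampling distribution is typically skewed, which is what the bias correction partly adjusts for.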

Cornell Notes

Reflective–reflective higher-order constructs in SmartPLS4 are best handled in three stages: validate the lower-order dimensions, validate the higher-order construct built from those dimensions, then test hypotheses. First, each dimension (e.g., reliability, assurance, empathy, responsiveness) is modeled as a lower-order reflective construct and assessed using outer loadings, reliability (above 0.70), convergent validity (above 0.50), and discriminant validity (with some flexibility when dimensions belong to the same higher-order construct). Next, the validated lower-order constructs are treated as indicators of the higher-order construct “Internal Service Quality,” and reliability and validity are checked again at the higher-order level. Finally, bootstrapping (10,000 samples, bias-corrected intervals) tests path significance and mediation via indirect effects.

Why validate lower-order dimensions before building the higher-order construct in a reflective–reflective model?

How does SmartPLS4 treat validated lower-order constructs when forming a reflective–reflective higher-order construct?

What validity checks matter at the higher-order level, and what gets reported?

How are hypotheses and mediation tested once measurement quality is confirmed?

What does “slightly low discriminant validity” mean in this workflow?

Review Questions

- In a reflective–reflective higher-order model, what are the three major stages of analysis in SmartPLS4, and what is checked at each stage?

- Why might discriminant validity be interpreted differently for dimensions that belong to the same higher-order construct?

- How do bootstrapping settings (10,000 samples, bias-corrected, one-tailed) influence how mediation and path significance are evaluated?

Key Points

1. Validate each lower-order reflective dimension (outer loadings, reliability > 0.70, convergent validity > 0.50) before constructing the higher-order construct.

2. Treat the validated lower-order constructs as indicators of the higher-order reflective–reflective latent variable, with arrows pointing from the higher-order construct to its dimensions as the reflective–reflective specification requires.

3. Run the PLS algorithm again after re-specifying the higher-order construct to confirm higher-order reliability and validity.

4. Once the lower-order constructs are validated, report measurement results at the higher-order level to avoid redundant reporting.

5. Use bootstrapping with 10,000 samples and bias-corrected intervals to test path coefficients and mediation via specific indirect effects.

6. Significant path coefficients and significant indirect effects together support both the direct and the mediation hypotheses.