S1E3 - Types of Errors in Measurement, and Accuracy vs. Precision

Based on ChemistryNotes Videos's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

Measurement errors come in two distinct flavors: random errors, which scatter results in both directions, and systematic errors, which push measurements consistently too high or too low. Random errors are typically human mistakes—haphazard experimental or measurement slips that have an equal chance of producing a value that’s too large or too small, too long or too short. Systematic errors, by contrast, tend to be instrumentation-related. A balance that isn’t calibrated correctly, for example, can produce mass readings that are consistently biased in one direction, meaning every measurement lands too high (or every measurement lands too low) rather than splitting evenly above and below the true value. In shorthand, random errors are often tied to technique, while systematic errors are often tied to equipment, glassware, or other measurement tools.
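The contrast between the two error types can be sketched numerically. The example below is only an illustration under assumed values: the 10.00 g true mass, the list of small offsets, and the 0.50 g balance bias are all hypothetical and do not come from the video. It checks the sign of each reading's error to show that random errors fall on both sides of the true value, while a miscalibrated balance shifts every reading the same way.

```python
from statistics import mean

TRUE_MASS = 10.00  # grams; hypothetical true mass of the sample

# Hypothetical random errors: technique slips that fall on both sides of zero.
random_offsets = [-0.03, 0.02, -0.01, 0.03, -0.01]

# Hypothetical systematic error: a balance that always reads 0.50 g too high.
BALANCE_BIAS = 0.50

# Readings affected by random error only.
random_readings = [TRUE_MASS + e for e in random_offsets]

# The same scatter, but taken on the miscalibrated balance.
biased_readings = [TRUE_MASS + BALANCE_BIAS + e for e in random_offsets]


def signed_errors(readings, true_value):
    """Return reading minus true value for each reading."""
    return [r - true_value for r in readings]


random_errs = signed_errors(random_readings, TRUE_MASS)
biased_errs = signed_errors(biased_readings, TRUE_MASS)

print("random errors:    ", [round(e, 2) for e in random_errs])
print("systematic errors:", [round(e, 2) for e in biased_errs])
print("mean of random readings:", round(mean(random_readings), 2))
print("mean of biased readings:", round(mean(biased_readings), 2))
```

In this sketch the random-only readings average back to the true mass because the assumed offsets cancel, whereas averaging the biased readings does nothing to remove the constant 0.50 g shift.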

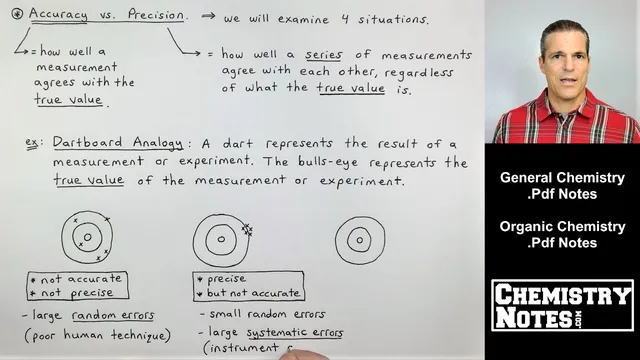

The next key distinction is between accuracy and precision—two terms that often get treated as synonyms in everyday conversation, but don’t mean the same thing in chemistry. Accuracy describes how closely a measurement agrees with the true (accepted/literature) value. Precision describes how closely a series of measurements agree with one another, regardless of whether they match the true value. A set of results can be tightly clustered (high precision) yet still be wrong (low accuracy) if a systematic error is present. Conversely, results can be spread out (low precision) yet still average to the correct value (high accuracy) if random errors dominate.

Using a dartboard analogy, the bullseye represents the true value. One dartboard shows five darts scattered widely: the results are neither accurate nor precise, reflecting large random errors and poor technique. Another dartboard shows five darts stacked together but far from the bullseye: the measurements are precise but not accurate, pointing to systematic error such as an instrument or glassware problem. A third dartboard shows darts clustered on the bullseye: the measurements are both accurate and precise, indicating that neither random nor systematic error is significant.

The fourth scenario addresses a common quiz question: accuracy without precision. Here, one dart lands on the bullseye, but the other darts are dispersed. The average of the measurements still hits the true value, so accuracy is present, yet precision is missing because the individual results don’t agree closely with each other. This pattern is uncommon but possible.
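The four dartboard cases can be expressed as a small classifier. This is a rough sketch, not a standard method: the function name `classify`, the tolerance values, the true value of 50.0, and all four data sets are hypothetical. Accuracy is judged by how far the mean lies from the true value, and precision by the sample standard deviation of the measurements.

```python
from statistics import mean, stdev


def classify(measurements, true_value, accuracy_tol, precision_tol):
    """Return (accurate, precise) for a set of repeated measurements.

    accurate: the mean lies within accuracy_tol of the true value.
    precise:  the sample standard deviation is at most precision_tol.
    Both tolerances are arbitrary cut-offs chosen for illustration.
    """
    accurate = abs(mean(measurements) - true_value) <= accuracy_tol
    precise = stdev(measurements) <= precision_tol
    return accurate, precise


TRUE_VALUE = 50.0  # hypothetical "bullseye"

# Hypothetical data sets, one per dartboard.
boards = {
    "scattered, off target": [42.0, 55.0, 47.0, 61.0, 58.0],
    "clustered, off target": [44.1, 44.0, 43.9, 44.2, 44.0],
    "clustered, on target": [50.1, 49.9, 50.0, 50.2, 49.8],
    "scattered, centered on target": [50.0, 44.0, 56.0, 47.0, 53.0],
}

results = {
    name: classify(darts, TRUE_VALUE, accuracy_tol=1.0, precision_tol=1.0)
    for name, darts in boards.items()
}

for name, (accurate, precise) in results.items():
    print(f"{name}: accurate={accurate}, precise={precise}")
```

The last data set is the accuracy-without-precision case: its individual values disagree by several units, yet they average to exactly 50.0.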

A concrete lab example follows: a student measures the calcium content of a sample four times, obtaining 14.92%, 14.91%, 14.88%, and 14.91%. The results are tightly grouped around 14.9%, indicating high precision. However, the true calcium content of the sample is 16.25%, so the measurements are far from the accepted value, indicating low accuracy. The conclusion is that something in the process is consistently biased, which points to systematic error rather than random mistakes by the student. The discussion then transitions toward significant figures in the next section, where measurement reporting and calculation details will be handled more rigorously.
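The calcium numbers can be checked with a few lines of arithmetic. The sketch below treats the values as percent calcium, as the Cornell Notes summary does, and uses the sample standard deviation as a measure of precision and the relative error of the mean as a measure of accuracy. These two metrics are a common choice rather than something the video specifies, and the variable names are illustrative.

```python
from statistics import mean, stdev

# Four calcium measurements from the example (percent calcium).
measurements = [14.92, 14.91, 14.88, 14.91]
TRUE_VALUE = 16.25  # accepted calcium content (percent calcium)

average = mean(measurements)

# Precision: how closely the measurements agree with one another.
spread = stdev(measurements)

# Accuracy: how far the average lies from the true value,
# expressed relative to the true value.
relative_error_pct = (average - TRUE_VALUE) / TRUE_VALUE * 100

print(f"mean               = {average:.3f}")
print(f"standard deviation = {spread:.3f}")
print(f"relative error     = {relative_error_pct:.1f}%")
```

The standard deviation is under 0.02 while the mean sits roughly 8% below the true value, so the scatter among the measurements is far too small to account for the gap. Every reading is low by about the same amount, which is the signature of a systematic error.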

Cornell Notes

Random errors and systematic errors are the two main categories of measurement error. Random errors are haphazard human mistakes that can push results too high or too low with roughly equal likelihood, while systematic errors are consistent biases usually caused by instrumentation or equipment problems. Accuracy means agreement with the true (accepted) value; precision means agreement among repeated measurements. Dartboard scenarios illustrate all four combinations: neither accurate nor precise, precise but not accurate, both accurate and precise, and the rare case of accurate without precision. A calcium-content example shows tightly clustered results around 14.9% that are precise but not accurate because the true value is 16.25%.

How do random errors differ from systematic errors, and what kinds of causes are most associated with each?

What is the operational difference between accuracy and precision in chemistry measurements?

What does it mean to be precise but not accurate, and what error type does that suggest?

How can someone be accurate without being precise?

In the calcium example (14.92, 14.91, 14.88, 14.91 vs. true 16.25), what conclusion follows about accuracy and precision?

Review Questions

- Give an example of a situation that would produce high precision but low accuracy. What kind of error is most likely responsible?

- Describe accuracy without precision using the dartboard analogy. What must be true about the average of the measurements?

- In the calcium measurement scenario, why can’t precision alone guarantee accuracy?

Key Points

1. Random errors are haphazard mistakes that can push results too high or too low with roughly equal likelihood.

2. Systematic errors are consistent biases, often caused by instrumentation or equipment problems like an uncalibrated balance.

3. Accuracy measures agreement with the true (accepted/literature) value.

4. Precision measures agreement among repeated measurements, regardless of the true value.

5. A tight cluster of results far from the true value indicates high precision but low accuracy, typically from systematic error.

6. Accuracy without precision can occur when random errors cancel out so the average matches the true value.

7. The calcium example shows precision without accuracy: clustered results around ~14.9% versus a true value of 16.25% calcium.