Search Strategy for Systematic Literature Review—S3

Based on Research With Fawad's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

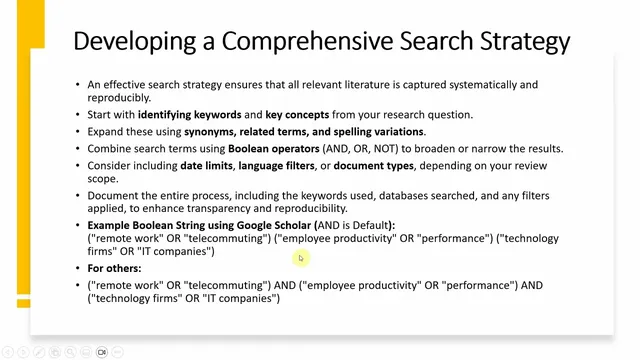

Build the initial keyword set directly from the research question’s concepts, then expand it with synonyms, related terms, and spelling variations.

Briefing

A strong search strategy for a systematic literature review depends on building a transparent, reproducible query: start from the research question, then iteratively test how keyword choices and database filters change the results. The process begins by extracting the core concepts from the question and turning them into a keyword set that includes synonyms, related terms, and spelling variations. For corporate social responsibility (CSR), that means pairing “corporate social responsibility” with labels used interchangeably in the literature, such as “social responsibility,” “business social responsibility,” “business sustainability,” and “corporate social performance,” while also covering spelling variants like “behavior” vs. “behaviour” and “organizational” vs. “organisational.” These variations matter because relevant studies may use different terminology for the same underlying construct, and a query that omits a variant will miss the studies that use it.
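
The keyword expansion described above can be sketched in a few lines of Python. This is a minimal illustration, not a method taken from the video: the function names `expand_spellings` and `build_keyword_set` are hypothetical, the spelling table contains only the two pairs mentioned above, and the extra term “organizational behavior” is added purely to show how variants combine.

```python
from itertools import product

# Synonyms taken from the CSR example above.
CSR_TERMS = [
    "corporate social responsibility",
    "social responsibility",
    "business social responsibility",
    "business sustainability",
    "corporate social performance",
]

# American -> British spelling pairs (only the two named above; extend as needed).
SPELLING_VARIANTS = {
    "behavior": "behaviour",
    "organizational": "organisational",
}


def expand_spellings(phrase):
    """Return the phrase plus every combination of its known spelling variants."""
    options = []
    for word in phrase.split():
        if word in SPELLING_VARIANTS:
            options.append([word, SPELLING_VARIANTS[word]])
        else:
            options.append([word])
    return [" ".join(combo) for combo in product(*options)]


def build_keyword_set(terms):
    """Expand each term and drop duplicates while keeping first-seen order."""
    seen = {}
    for term in terms:
        for variant in expand_spellings(term.lower()):
            seen.setdefault(variant, None)
    return list(seen)


# "organizational behavior" is an illustrative extra term, not part of the CSR set.
keywords = build_keyword_set(CSR_TERMS + ["organizational behavior"])
for keyword in keywords:
    print(keyword)
```

Keeping the expanded list in a file alongside the review protocol makes it easy to report exactly which variants were searched.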

Once the keyword set is defined, the strategy uses the Boolean operators AND, OR, and NOT to control breadth. OR broadens coverage by capturing alternative terms for one concept, AND narrows results to papers that combine concepts, and NOT excludes irrelevant themes. The approach also applies practical limits aligned with the review scope, such as date ranges (e.g., the last 5 years or since 2020), language filters (e.g., English only), and document-type filters. Crucially, every decision needs documentation: which keywords were used, which databases were searched, what filters were applied, and how many results appeared at each stage. Tracking how results shift when keywords, databases, or filters change supports transparency and reproducibility, and it can be summarized in a table showing what changed and what effect it had.
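
As a rough sketch of the two ideas above (Boolean composition and a documented change log), the following Python builds a generic query string and renders a table of result counts per run. The helper names `or_block`, `build_query`, `SearchRun`, and `change_table` are hypothetical, and the result counts are placeholders, not figures from any real search.

```python
from dataclasses import dataclass


def or_block(terms):
    """Join alternatives for one concept with OR, quoting each phrase."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(concepts, exclude=()):
    """AND across concept blocks; append a NOT block for excluded themes."""
    query = " AND ".join(or_block(terms) for terms in concepts)
    if exclude:
        query += " NOT " + or_block(exclude)
    return query


@dataclass
class SearchRun:
    database: str
    query: str
    filters: str
    results: int  # count reported by the database for this run


def change_table(runs):
    """Render runs as a plain-text table with the change in results per step."""
    lines = ["step | database | filters | results | change"]
    previous = None
    for step, run in enumerate(runs, start=1):
        change = "" if previous is None else f"{run.results - previous:+d}"
        lines.append(
            f"{step} | {run.database} | {run.filters} | {run.results} | {change}"
        )
        previous = run.results
    return "\n".join(lines)


csr = ["corporate social responsibility", "corporate social performance"]
mechanisms = ["impact", "role", "mediation", "moderation"]
query = build_query([csr, mechanisms])
print(query)

# Placeholder counts for illustration only; record the real numbers you see.
runs = [
    SearchRun("Google Scholar", query, "none", 1000),
    SearchRun("Google Scholar", query, "since 2020", 400),
]
print(change_table(runs))
```

The generic string produced here still needs adapting to each database's own syntax before it is run.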

Search strings are then tailored to specific databases. Google Scholar generally treats a space between terms as an implicit AND, so queries can be built by placing concept blocks side by side and using OR within a block to capture term variants. The video illustrates this with a CSR question focused on antecedents and outcomes: the query requires CSR-related terms in the title, adds OR-linked terms that signal antecedent or outcome mechanisms, such as “impact,” “role,” “mediation,” or “moderation,” and optionally restricts the time window. For other platforms such as Web of Science or Scopus, the same logic applies, but the string is adapted to the database’s syntax.
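
To make the idea of database-specific strings concrete, here is a hedged Python sketch that formats the same two concept blocks for three platforms. It assumes the commonly documented field codes (`intitle:` in Google Scholar, `TITLE` / `TITLE-ABS-KEY` / `PUBYEAR` in Scopus, `TI=` / `TS=` / `PY=` in Web of Science). The function names are hypothetical, the end year is an arbitrary example value, and Google Scholar's handling of parentheses and nested operators is only loosely documented, so every generated string should be checked against the platform's current help pages before use.

```python
def or_group(terms):
    """Quote each phrase and join the alternatives with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def google_scholar(title_terms, other_terms):
    """Title-restricted block plus a free-text block; a space acts as AND."""
    title = " OR ".join(f'intitle:"{term}"' for term in title_terms)
    return f"({title}) {or_group(other_terms)}"


def scopus(title_terms, other_terms, since_year):
    """Scopus-style string; PUBYEAR > N keeps records published after year N."""
    return (
        f"TITLE{or_group(title_terms)} "
        f"AND TITLE-ABS-KEY{or_group(other_terms)} "
        f"AND PUBYEAR > {since_year - 1}"
    )


def web_of_science(title_terms, other_terms, since_year, until_year):
    """Web of Science-style string using title, topic, and year field tags."""
    return (
        f"TI={or_group(title_terms)} "
        f"AND TS={or_group(other_terms)} "
        f"AND PY=({since_year}-{until_year})"
    )


csr = ["corporate social responsibility", "corporate social performance"]
mechanisms = ["impact", "role", "mediation", "moderation"]

print(google_scholar(csr, mechanisms))
print(scopus(csr, mechanisms, since_year=2020))
# The end year below is an arbitrary example value.
print(web_of_science(csr, mechanisms, since_year=2020, until_year=2024))
```

In Google Scholar the date restriction is usually applied through the interface's date-range option instead of the query string, which is why the first function takes no year argument.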

When access to major indexes is limited, the strategy can still be strengthened by cross-checking journal eligibility. One workaround described is to use the Master Journal List (associated with Web of Science) to decide whether journals found via Google Scholar should be included: a journal such as “Administrative Sciences” can be included if it appears in the list, while a journal not found there can be skipped. Database selection also depends on discipline: business and management reviews may prioritize Scopus or Web of Science (and Business Source Complete), social science reviews can use PsycINFO and Sociological Abstracts, and health science reviews can use PubMed. Gray literature (reports, dissertations, and conference proceedings) can also be searched, since including it helps reduce publication bias.
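
The journal eligibility check can be approximated with a small script, assuming the reviewer has saved the relevant list entries to a CSV file. Everything about that file is an assumption here: the column name `Journal title`, the function names, and the in-memory stand-in data (including the second, made-up journal name) are illustrative only and do not reflect the actual contents or export format of the Master Journal List.

```python
import csv
import io


def normalize(title):
    """Lowercase and collapse whitespace so small formatting differences still match."""
    return " ".join(title.lower().split())


def load_indexed_journals(csv_file, column="Journal title"):
    """Read journal titles from a CSV export; the column name is an assumption."""
    return {normalize(row[column]) for row in csv.DictReader(csv_file)}


def split_by_eligibility(journals, indexed):
    """Separate journals found in the indexed set from those that are not."""
    included = [j for j in journals if normalize(j) in indexed]
    skipped = [j for j in journals if normalize(j) not in indexed]
    return included, skipped


# Stand-in for a saved export; replace with open("your_export.csv", newline="").
stand_in_export = io.StringIO("Journal title\nAdministrative Sciences\n")
indexed = load_indexed_journals(stand_in_export)

# Journals encountered via Google Scholar; the second name is made up.
found = ["administrative  sciences", "Example Journal of Unlisted Studies"]
included, skipped = split_by_eligibility(found, indexed)
print("include:", included)
print("skip:", skipped)
```

Exact title matching is deliberately strict; matching on ISSN would be more robust where the export provides it.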

Finally, the search can stop once the strategy proves effective. Effectiveness is indicated when the results include the major studies and recommended sources, and when quick checks of retrieved papers confirm that they meet the inclusion criteria. Additional “snowballing” steps help catch misses: scanning the reference lists of included papers and using citation tools such as “cited by” to find newer studies that build on them. The overall goal is not just to retrieve papers, but to do so in a way that can be audited, repeated, and justified in the review’s methodology.
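
One way to make these checks explicit is sketched below: a function that lists which known major studies are still missing from the results, and a function that gathers snowballing candidates from reference lists and “cited by” links. The function names, the paper identifiers, and the title strings are all placeholders, and the reference and citation data are assumed to have been collected by hand or exported from a database.

```python
def missing_benchmarks(retrieved_titles, benchmark_titles):
    """Return the benchmark studies that do not appear among the retrieved titles."""
    retrieved = {title.strip().lower() for title in retrieved_titles}
    return [t for t in benchmark_titles if t.strip().lower() not in retrieved]


def snowball_candidates(included, references, cited_by):
    """Collect papers linked to included ones that are not yet included.

    references: paper -> papers it cites (reference-list check)
    cited_by:   paper -> papers that cite it ("cited by" check)
    """
    seen = set(included)
    candidates = []
    for paper in included:
        for linked in references.get(paper, []) + cited_by.get(paper, []):
            if linked not in seen:
                seen.add(linked)
                candidates.append(linked)
    return candidates


# Placeholder data for illustration only.
retrieved = ["Benchmark study A", "Some other paper"]
benchmarks = ["benchmark study a", "Benchmark study B"]
print("still missing:", missing_benchmarks(retrieved, benchmarks))

included = ["P1", "P2"]
references = {"P1": ["P3", "P2"], "P2": ["P3"]}
cited_by = {"P1": ["P4"]}
print("screen next:", snowball_candidates(included, references, cited_by))
```

An empty “still missing” list, together with spot checks against the inclusion criteria, is one reasonable signal that the search can stop; the candidates list is what remains to be screened.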

Cornell Notes

A systematic literature review search strategy starts with translating the research question into a controlled set of keywords and concepts, then expands that set with synonyms, related terms, and spelling variations. Boolean logic (OR to broaden, AND to narrow, NOT to exclude) is used to build database-specific search strings, often combined with scope limits like date range, language, and document type. Every change—keywords, filters, databases, and result counts—should be recorded to make the search transparent and reproducible. When access to major databases is limited, journal eligibility can be checked via the Master Journal List. Searching can end once major studies appear and inclusion criteria are confirmed, with reference-list and “cited by” checks used to reduce the chance of missing key work.

How should keywords be generated from a systematic review research question, and why do spelling variations matter?

What role do Boolean operators play in controlling the size and focus of search results?

Why is it necessary to document result counts and changes during search development?

How can a reviewer strengthen journal selection when Scopus or Web of Science access is unavailable?

What “stop rules” indicate that the search strategy is working?

How do reference-list and citation (“cited by”) checks help reduce missed studies?

Review Questions

- If a CSR study uses “corporate social performance” instead of “corporate social responsibility,” what parts of the search strategy should capture it?

- How would you design a Boolean query to require both a CSR concept term and an antecedent/outcome mechanism term?

- What specific records should be kept to make a search strategy reproducible in a systematic review methodology section?

Key Points

1. Build the initial keyword set directly from the research question’s concepts, then expand it with synonyms, related terms, and spelling variations.

2. Use OR to broaden within a concept and AND to narrow across concepts; apply NOT to exclude clearly irrelevant themes.

3. Apply scope limits aligned to the review (date range, language, document type) and treat them as adjustable parameters.

4. Record every search decision (keywords, databases, filters, result counts, and changes) so the methodology can be audited and repeated.

5. Tailor search strings to each database’s syntax (e.g., Google Scholar vs. Web of Science/Scopus).

6. When major indexes aren’t available, verify journal eligibility using the Master Journal List before including papers found via Google Scholar.

7. Stop searching when major studies and recommended sources appear and retrieved papers match inclusion criteria; use reference lists and “cited by” to catch misses.