Solving Wordle using information theory

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Wordle can be treated as a problem in information theory: each color pattern (green/yellow/gray) acts as a measurement that reduces uncertainty about the hidden five-letter word. Building a strong solver becomes a matter of choosing guesses that maximize the expected information gained, quantified as entropy, so the remaining candidate set shrinks as efficiently as possible. The practical payoff is a strategy that performs well in simulations and explains why certain opening words tend to work better than others.

The approach starts with the game mechanics: a guess yields a pattern describing which letters appear in the answer and whether they sit in the correct positions. With roughly 13,000 valid guess words but only about 2,300 curated answer words, the solver’s job is to pick guesses that best narrow the answer space within six attempts. Early on, the method assumes all candidate answers are equally likely. For any proposed guess, it enumerates every possible feedback pattern and computes (1) the probability p of each pattern occurring and (2) the information content of each pattern, measured in bits as −log2(p), so a pattern that halves the candidate set is worth one bit. Patterns that are likely to occur (like “all grays”) carry little information; rare patterns that split the candidate set sharply carry more. The solver then selects the guess with the highest expected information, i.e., the entropy of the pattern distribution.
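The scoring step described above can be sketched in a few lines of Python. This is a minimal illustration rather than the video's actual code: the names `feedback` and `expected_information` and the `G`/`Y`/`-` pattern encoding are assumptions made for this example, and all candidates are treated as equally likely.

```python
from collections import Counter
from math import log2


def feedback(guess: str, answer: str) -> str:
    """Return the color pattern as a string: G = green, Y = yellow, - = gray."""
    pattern = ["-"] * len(guess)
    unmatched = Counter()
    # First pass: mark greens and count answer letters that were not matched exactly.
    for i, (g, a) in enumerate(zip(guess, answer)):
        if g == a:
            pattern[i] = "G"
        else:
            unmatched[a] += 1
    # Second pass: mark yellows while unmatched copies of that letter remain.
    for i, g in enumerate(guess):
        if pattern[i] != "G" and unmatched[g] > 0:
            pattern[i] = "Y"
            unmatched[g] -= 1
    return "".join(pattern)


def expected_information(guess: str, candidates: list[str]) -> float:
    """Entropy (in bits) of the pattern distribution under a uniform prior."""
    counts = Counter(feedback(guess, answer) for answer in candidates)
    total = len(candidates)
    # Each pattern has probability n / total and carries -log2(n / total) bits.
    return sum((n / total) * -log2(n / total) for n in counts.values())
```

Calling `expected_information(guess, candidates)` for every allowed guess and taking the maximum gives the entropy-based choice. A guess whose patterns split the candidates into many small groups scores high, while one that mostly produces a single pattern scores near zero.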

This “version 1” solver repeatedly applies the same logic: after each feedback pattern, it restricts the candidate set to the words consistent with that pattern, then chooses the next guess that maximizes expected information over the reduced set. Under a uniform prior, the remaining uncertainty is simply log2 of the number of remaining candidates, so entropy is a fancy way of counting how many words are left, because all consistent words are treated as equally likely. Simulations across all possible Wordle answers yield an average score around 4.124, which is respectable but leaves room for improvement, especially in turning four-guess games into three-guess wins.
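A rough sketch of that guess-filter-repeat loop is shown below. The function names (`pattern_entropy`, `filter_candidates`, `best_guess`, `solve`) and the tie-break that prefers guesses still in the candidate set are assumptions made for this illustration, not details taken from the video.

```python
from collections import Counter
from math import log2


def feedback(guess: str, answer: str) -> str:
    """Color pattern as a string: G = green, Y = yellow, - = gray."""
    pattern = ["-"] * len(guess)
    unmatched = Counter(a for g, a in zip(guess, answer) if g != a)
    for i, (g, a) in enumerate(zip(guess, answer)):
        if g == a:
            pattern[i] = "G"
    for i, g in enumerate(guess):
        if pattern[i] != "G" and unmatched[g] > 0:
            pattern[i] = "Y"
            unmatched[g] -= 1
    return "".join(pattern)


def pattern_entropy(guess: str, candidates: list[str]) -> float:
    """Expected information (bits) of a guess under a uniform prior."""
    counts = Counter(feedback(guess, w) for w in candidates)
    total = len(candidates)
    return sum((n / total) * -log2(n / total) for n in counts.values())


def filter_candidates(candidates: list[str], guess: str, pattern: str) -> list[str]:
    """Keep only the words that would have produced the observed pattern."""
    return [w for w in candidates if feedback(guess, w) == pattern]


def best_guess(allowed: list[str], candidates: list[str]) -> str:
    """Pick the allowed guess with the highest entropy over the candidates."""
    if len(candidates) == 1:
        return candidates[0]
    # Assumed tie-break: prefer a guess that could itself be the answer.
    return max(allowed, key=lambda g: (pattern_entropy(g, candidates), g in candidates))


def solve(answer: str, allowed: list[str], candidates: list[str], max_turns: int = 6) -> list[str]:
    """Play one game and return the list of guesses made."""
    history = []
    for _ in range(max_turns):
        guess = best_guess(allowed, candidates)
        history.append(guess)
        if guess == answer:
            break
        candidates = filter_candidates(candidates, guess, feedback(guess, answer))
    return history
```

Averaging `len(solve(answer, allowed, answers))` over every answer in a word list is the kind of simulation that produces an average score. The full-size word lists are not included here and would have to be supplied separately.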

The key upgrade is replacing uniform assumptions with a probability model based on English word frequencies. Using frequency data (from Mathematica’s word frequency function, sourced from Google Books Ngram), the solver assigns higher prior probability to common words and lower probability to obscure ones. A sigmoid cutoff turns raw frequency ranks into a smoother “likely vs unlikely” probability distribution, reflecting the intuition that very rare words should not dominate uncertainty even if they remain technically possible. With non-uniform probabilities, entropy no longer matches the raw count of remaining candidates; instead it reflects effective uncertainty, where many “extra” candidates contribute little because the model deems them unlikely.
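One way to sketch the sigmoid prior is shown below. The parameter names `n_common` and `width`, their default values, and the choice to normalize the weights into a probability distribution are assumptions for illustration. The frequency dictionary is expected to come from whatever frequency source is available.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def frequency_prior(freqs: dict[str, float], n_common: int = 3000, width: float = 10.0) -> dict[str, float]:
    """Turn raw word frequencies into a smooth 'likely vs unlikely' prior.

    Words are ranked from rarest to most common and spread evenly along an
    x-axis of total length `width`; the sigmoid's midpoint sits at the word
    that is `n_common` places from the top (both parameters are hypothetical).
    """
    ranked = sorted(freqs, key=freqs.get)  # rarest first
    step = width / len(ranked)
    cutoff = len(ranked) - n_common
    weights = {w: sigmoid((i - cutoff) * step) for i, w in enumerate(ranked)}
    total = sum(weights.values())
    return {w: v / total for w, v in weights.items()}


def entropy_bits(prior: dict[str, float]) -> float:
    """Uncertainty (bits) of a distribution over candidate words."""
    return sum(-p * math.log2(p) for p in prior.values() if p > 0)
```

With such a prior, `entropy_bits` comes out lower than log2 of the number of candidates, which is the "effective uncertainty" effect described above: rare words stay possible but add little to the total.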

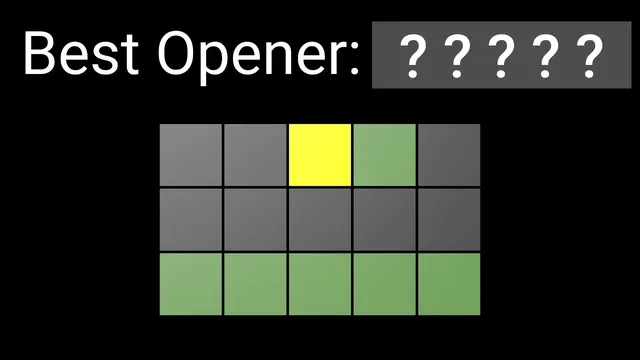

A further refinement targets expected performance rather than pure information gain. After estimating how likely each candidate answer is, the solver links remaining uncertainty (in bits) to the expected number of additional guesses, using an empirical regression on data from prior simulations. This “version 2” strategy improves average performance to about 3.6. Incorporating the true Wordle answer list as a prior can push performance slightly further (around 3.43), and a deeper two-step lookahead suggests “crane” is the best opener in that framework. The overall conclusion is that no method can reliably achieve an average near three guesses: two guesses do not leave enough room to extract the information needed to be certain of the answer on the third.
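The expected-score idea can be sketched as follows. This is an illustration under stated assumptions: `fit_line` uses a plain least-squares straight line as a stand-in for whatever regression the solver actually uses, `guesses_from_bits` is a hypothetical callable mapping remaining bits to expected additional guesses, and the (bits, guesses) samples must come from your own simulations.

```python
def fit_line(samples: list[tuple[float, float]]) -> tuple[float, float]:
    """Least-squares line through (bits_remaining, guesses_needed) pairs.

    Returns (slope, intercept). A straight line is an assumption made here
    for simplicity; any fitted curve can be used instead.
    """
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def expected_score(p_correct, bits_now, expected_info, turn, guesses_from_bits):
    """Estimated final score if this guess is played on turn number `turn`.

    p_correct      : prior probability that the guess is the answer
    bits_now       : current uncertainty over the candidates, in bits
    expected_info  : entropy of the guess's pattern distribution, in bits
    guesses_from_bits : callable giving expected additional guesses
                        for a given amount of remaining uncertainty
    """
    bits_left = max(bits_now - expected_info, 0.0)
    # Either the game ends now, or it continues for the estimated extra guesses.
    return p_correct * turn + (1 - p_correct) * (turn + guesses_from_bits(bits_left))
```

Choosing the guess with the lowest `expected_score` instead of the highest entropy lets a plausible answer win over a slightly more informative guess that cannot itself be correct.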

Cornell Notes

Wordle feedback patterns can be treated as information that reduces uncertainty about the hidden answer. A solver can score candidate guesses by computing the expected information gained (entropy in bits) from the distribution of possible color patterns. In a first version, all remaining candidate answers are assumed equally likely, so entropy roughly tracks the number of remaining possibilities. Performance improves when the solver uses a non-uniform prior based on English word frequencies, so unlikely candidates contribute less to uncertainty. A final refinement estimates expected remaining guesses from the current uncertainty, improving average scores in simulations to around 3.6.

How does a Wordle guess translate into “information” measured in bits?

Why does maximizing entropy help, and why do “all grays” patterns tend to be poor?

What changes when the solver stops assuming all candidate answers are equally likely?

How does the solver connect “bits of uncertainty” to the expected number of remaining guesses?

What performance results come from the different solver versions?

Why can’t an algorithm guarantee an average near three guesses?

Review Questions

- In the entropy-based scoring, what is the relationship between a pattern’s probability and its information value?

- How does a non-uniform prior (word frequency) change what entropy means compared with the uniform-prior case?

- What is the difference between choosing the next guess by maximizing expected information versus maximizing expected game score?

Key Points

1. Model each Wordle feedback pattern as a partition of candidate answers, then score guesses by the expected information gained from that partition.
2. Compute information in bits using −log2(p), and compute a guess’s quality as the expected value of that quantity over all possible feedback patterns.
3. Uniform priors make entropy roughly equivalent to counting remaining candidates, but frequency-based priors make entropy reflect effective uncertainty instead of raw set size.
4. A frequency-informed prior can be built from English word frequencies (e.g., via Google Books Ngram data) and converted into probabilities using a sigmoid-style cutoff.
5. Improving beyond entropy-only selection requires mapping remaining uncertainty (bits) to expected remaining guesses, using simulation data and regression.
6. Even with optimal lookahead, Wordle’s feedback structure limits how much uncertainty can be removed in two moves, preventing consistently solving in three guesses.