Studying Scaling Laws for Transformer Architecture … | Shola Oyedele | OpenAI Scholars Demo Day 2021

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Scaling laws link language modeling loss to training compute, but the loss–compute relationship can shift across transformer architectures and training objectives.

Briefing

Scaling laws for language models can forecast how loss improves with compute, but it’s unclear whether those relationships hold across different transformer architectures and training objectives. Shola Oyedele’s work tests that assumption by fitting scaling-law curves across multiple transformer variants—decoder-only and encoder-style models—then comparing how the “loss vs. compute” trade-off changes when architecture and training setup change. The practical payoff is cost: if one architecture consistently delivers better loss for the same compute budget, it becomes the most efficient choice as training budgets keep expanding.

The starting point is OpenAI’s earlier empirical scaling laws for decoder-only transformers, which link language modeling loss to three levers: model size (parameter count), dataset size, and total training compute. In that framework, training compute is estimated from the parameter count N, the batch size B (in tokens), and the number of parameter updates S, with a factor of 6 accounting for the forward and backward passes (C ≈ 6NBS). Oyedele focuses on the loss–compute relationship using a power law, L(C) = (C_c / C)^α in the notation of the original scaling-law work, where the fitted constants C_c and α set the trade-off. Differences of about 5% or less are treated as within the margin of uncertainty; only larger gaps are read as meaningful.
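The compute estimate and the power-law form can be made concrete in a few lines of Python. This is a minimal sketch, assuming the C ≈ 6NBS approximation from the original scaling-law work and a pure power law L(C) = (C_c / C)^α. The function names, the example model size, and the reading of the ~5% band as a relative difference between two fitted values are illustrative assumptions, not details confirmed by the talk.

```python
def estimate_training_compute(n_params: float, batch_tokens: float, steps: int) -> float:
    """Approximate training FLOPs as C ~= 6 * N * B * S.

    Roughly 2 FLOPs per parameter per token go to the forward pass and
    about 4 to the backward pass, hence the factor of 6.
    """
    return 6.0 * n_params * batch_tokens * steps


def power_law_loss(compute: float, c_c: float, alpha: float) -> float:
    """Pure power law L(C) = (C_c / C) ** alpha, with no irreducible-loss term."""
    return (c_c / compute) ** alpha


def relative_gap(a: float, b: float) -> float:
    """Relative difference between two fitted quantities (e.g. two exponents)."""
    return abs(a - b) / min(abs(a), abs(b))


def meaningfully_different(a: float, b: float, tolerance: float = 0.05) -> bool:
    """Assumed rule: relative gaps within ~5% count as uncertainty, not a real difference."""
    return relative_gap(a, b) > tolerance


# Hypothetical example: a 10M-parameter model, 4096-token batches, 20k updates.
compute = estimate_training_compute(10_000_000, 4096, 20_000)
print(f"estimated compute: {compute:.3e} FLOPs")
print(meaningfully_different(0.050, 0.052))  # 4% gap, so within the margin
```

The tolerance is kept as a parameter because the talk gives only an approximate band; tightening or loosening it changes which architecture comparisons count as real differences.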

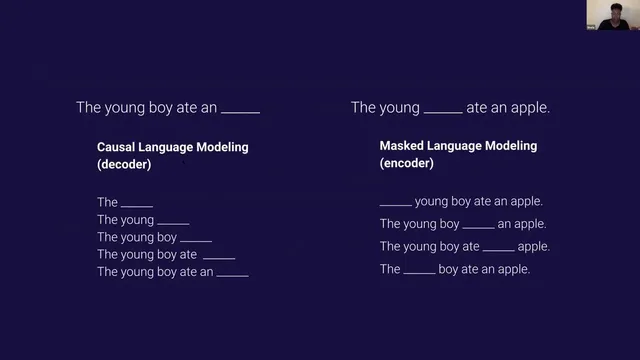

To probe generalization beyond decoder-only transformers, Oyedele studies two training regimes: causal language modeling (predicting the next token) and masked language modeling (predicting a masked word anywhere in the sentence). The same underlying transformer design can be used for both, but the training signal differs: causal models only condition on left context, while masked models learn from bidirectional context. Experiments include GPT-2 as a reference point for decoder-only behavior, Transformer-XL and Reformer as additional causal variants, and BERT in both masked and causal implementations.
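The difference between the two objectives comes down to which positions each token may attend to and what the model is asked to predict. The sketch below, in plain Python with hypothetical helper names, builds both attention masks and both kinds of training example for a toy sentence. It is a simplification: BERT's actual recipe masks about 15% of tokens and sometimes substitutes a random or unchanged token instead of the mask token, which this sketch omits.

```python
def causal_mask(seq_len: int) -> list[list[bool]]:
    """mask[i][j] is True when position i may attend to position j (left context only)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def bidirectional_mask(seq_len: int) -> list[list[bool]]:
    """Every position may attend to every position (left and right context)."""
    return [[True] * seq_len for _ in range(seq_len)]


def visible_context(mask: list[list[bool]], i: int) -> list[int]:
    """Other positions whose tokens position i can condition on."""
    return [j for j, allowed in enumerate(mask[i]) if allowed and j != i]


def causal_lm_pairs(tokens: list[str]) -> list[tuple[list[str], str]]:
    """Next-token prediction: each target is predicted from the tokens to its left."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def masked_lm_example(
    tokens: list[str], mask_positions: set[int], mask_token: str = "[MASK]"
) -> tuple[list[str], dict[int, str]]:
    """Masked prediction: hide chosen positions and predict them from both sides."""
    inputs = [mask_token if i in mask_positions else t for i, t in enumerate(tokens)]
    targets = {i: tokens[i] for i in sorted(mask_positions)}
    return inputs, targets


tokens = ["the", "cat", "sat", "down"]
print(visible_context(causal_mask(4), 2))         # [0, 1]
print(visible_context(bidirectional_mask(4), 2))  # [0, 1, 3]
print(masked_lm_example(tokens, {1}))             # (['the', '[MASK]', 'sat', 'down'], {1: 'cat'})
```

The same transformer can run with either mask, which is why BERT can be trained both ways; only the mask and the prediction targets change.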

A key methodological step is fitting the compute-efficient frontier—essentially the scaling-law curve—by training multiple model sizes (roughly 2 million to 350 million parameters, depending on architecture) and building learning curves over estimated compute. Preliminary comparisons reveal two notable patterns. First, identical architectures can yield different scaling-law fits when trained with different objectives; BERT’s masked training produces a different loss–compute relationship than BERT’s causal version, consistent with the shift from bidirectional to left-to-right conditioning.
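One way to picture the frontier-fitting step: pool the (compute, loss) points from every model's learning curve, keep only the points that no cheaper point beats, and fit a straight line in log-log space. This is a hedged sketch, not the talk's fitting code; the function names and the learning-curve numbers are hypothetical, and ordinary least squares on logarithms is just one reasonable fitting choice.

```python
import math


def compute_efficient_frontier(points):
    """Keep (compute, loss) points that beat every point using less or equal compute."""
    frontier, best = [], math.inf
    for compute, loss in sorted(points):
        if loss < best:
            frontier.append((compute, loss))
            best = loss
    return frontier


def fit_power_law(points):
    """Least-squares fit of log L = alpha * log C_c - alpha * log C.

    Returns (c_c, alpha) for L(C) = (C_c / C) ** alpha.
    """
    xs = [math.log(c) for c, _ in points]
    ys = [math.log(loss) for _, loss in points]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    alpha = -slope
    return math.exp(intercept / alpha), alpha


# Hypothetical learning curves pooled from two model sizes: (compute, loss).
curves = [
    (1e15, 6.0), (2e15, 5.2), (4e15, 5.0),  # smaller model starts to plateau
    (2e15, 5.6), (4e15, 4.6), (8e15, 4.1),  # larger model overtakes it
]
frontier = compute_efficient_frontier(curves)
c_c, alpha = fit_power_law(frontier)
print(frontier)
print(f"alpha = {alpha:.3f}, C_c = {c_c:.3e}")
```

Taking logarithms turns L = (C_c / C)^α into the linear relation log L = α log C_c − α log C, so the fitted slope gives −α directly.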

Second, Reformer shows a distinctive “tiered” behavior: larger models sometimes fail to improve loss relative to smaller ones within the same tier, even though they consume more compute. Despite that inefficiency at certain sizes, Reformer still achieves some of the best overall scaling-law performance among the tested architectures. Oyedele attributes the advantage to Reformer’s design goal of reducing memory footprint and compute time, suggesting that architectural efficiency can translate into lower loss for a given compute budget.
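The tiered pattern can be read as Pareto domination: a larger model is a poor choice if some smaller model reached an equal or lower final loss with no more compute. The sketch below flags such models. `flag_dominated_models` and the sample results are hypothetical, chosen only to illustrate the check, and are not Reformer measurements from the talk.

```python
def flag_dominated_models(results):
    """Return the sizes of models that a smaller, no-more-expensive model matches or beats.

    results: list of (n_params, compute, final_loss) tuples.
    """
    flagged = []
    for n_params, compute, loss in results:
        for other_n, other_compute, other_loss in results:
            # A smaller model that used no more compute and reached an equal or lower loss.
            if other_n < n_params and other_compute <= compute and other_loss <= loss:
                flagged.append(n_params)
                break
    return sorted(flagged)


# Hypothetical final results: (parameters, training compute in FLOPs, final loss).
results = [
    (2_000_000, 1e15, 5.0),
    (8_000_000, 4e15, 4.2),
    (20_000_000, 1e16, 4.3),  # more compute than the 8M model, but no better loss
    (50_000_000, 3e16, 3.6),
]
print(flag_dominated_models(results))  # [20000000]
```

Under a fixed compute budget, flagged sizes can be dropped from consideration: within a tier, the smallest model that reaches the tier's loss is the cheaper pick.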

The work also flags limitations: limited hyperparameter sweeps, loss fits that omit an irreducible-loss term, and the difficulty of making “apples-to-apples” comparisons across architectures that differ in many confounding ways. Still, the central takeaway is clear: architecture matters for scaling-law behavior, and the most cost-effective model is the one that best bends the loss–compute curve. Oyedele’s next steps include broader hyperparameter tuning, expanding the set of transformer variants, and running more exhaustive experiments to isolate which architectural factors drive changes in scaling-law fits.
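Omitting an irreducible-loss term matters because a pure power law drives the predicted loss toward zero, while real curves flatten toward a floor. A minimal sketch, assuming the common form L = E + A·C^(−α) and synthetic data with a known floor E = 1.5, shows how a pure power-law fit underestimates the exponent. The grid search over E is a simple illustrative fitting method, not the procedure used in the talk, and all names and numbers are hypothetical.

```python
import math


def _linear_fit(xs, ys):
    """Ordinary least squares y = intercept + slope * x; also returns the squared error."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    intercept = mean_y - slope * mean_x
    sse = sum((y - intercept - slope * x) ** 2 for x, y in zip(xs, ys))
    return slope, intercept, sse


def fit_pure_power_law(compute, loss):
    """Fit L = A * C ** (-alpha) with no irreducible term; returns (A, alpha)."""
    xs = [math.log(c) for c in compute]
    slope, intercept, _ = _linear_fit(xs, [math.log(value) for value in loss])
    return math.exp(intercept), -slope


def fit_with_irreducible(compute, loss, resolution=1000):
    """Fit L = E + A * C ** (-alpha) by grid-searching E in steps of 1 / resolution.

    For each candidate E below min(loss), log(L - E) is fit linearly in log C;
    the candidate with the smallest squared error wins. Returns (E, A, alpha).
    """
    xs = [math.log(c) for c in compute]
    upper = min(loss)
    best = None
    i = 0
    while i / resolution < upper:
        e = i / resolution
        slope, intercept, sse = _linear_fit(xs, [math.log(value - e) for value in loss])
        if best is None or sse < best[0]:
            best = (sse, e, math.exp(intercept), -slope)
        i += 1
    _, e, a, alpha = best
    return e, a, alpha


# Hypothetical synthetic data with a true irreducible loss of 1.5 and exponent 0.3.
compute = [10.0 ** k for k in range(7)]
loss = [1.5 + 5.0 * c ** -0.3 for c in compute]
print(fit_pure_power_law(compute, loss))    # exponent comes out well below 0.3
print(fit_with_irreducible(compute, loss))  # recovers approximately (1.5, 5.0, 0.3)
```

On data with a real floor, the pure fit reports a flatter curve than the true one, so comparing architectures by pure-power-law exponents can conflate differences in slope with differences in where each curve levels off.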

Cornell Notes

Scaling laws can predict how language modeling loss improves as training compute increases, but those relationships may shift across transformer architectures and training objectives. Shola Oyedele tests this by fitting loss–compute power laws across multiple transformer variants, including GPT-2, Transformer-XL, Reformer, and BERT in both masked and causal forms. The results show that the same architecture can produce different scaling-law curves when trained differently—BERT’s masked (bidirectional) setup differs from its causal (left-to-right) setup. Reformer stands out with strong scaling-law performance, tied to its efficiency-oriented design that reduces memory and compute. The findings matter because they help identify architectures that deliver better loss per unit compute as training budgets grow.

What does “scaling law” mean in this work, and how is compute handled in the analysis?

Why compare causal language modeling to masked language modeling when studying transformer scaling laws?

What surprising result emerges when BERT is trained in different ways?

What is the “tiered” pattern reported for Reformer, and why does it matter?

What limitations could affect the strength of the conclusions?

How does the work connect scaling laws to real-world cost decisions?

Review Questions

- How would you expect the scaling-law curve to change when switching from masked (bidirectional) to causal (left-to-right) training for the same transformer architecture?

- What does a “tiered” Pareto frontier imply about choosing model size under a fixed compute budget?

- Which methodological choices (hyperparameter sweeps, irreducible loss vs. not) could change the fitted scaling-law parameters, and how might that alter comparisons across architectures?

Key Points

1. Scaling laws link language modeling loss to training compute, but the loss–compute relationship can shift across transformer architectures and training objectives.

2. Compute is estimated from parameter count, batch size, and parameter update count, with forward and backward passes accounted for in the total compute budget.

3. Causal and masked language modeling differ in context access (left-only vs. bidirectional), and that difference can change fitted scaling-law curves even for the same architecture family.

4. BERT’s masked and causal implementations produce different scaling-law fits, consistent with bidirectional versus left-to-right conditioning.

5. Reformer shows strong overall scaling-law performance and a tiered behavior where some larger models spend more compute without improving loss within a tier.

6. Limited hyperparameter sweeps and cross-architecture confounds make it difficult to isolate which architectural component drives changes in the scaling-law fit.

7. The core practical goal is cost efficiency: choose architectures and model sizes that deliver the best loss per unit compute as training budgets grow.
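Turning a fitted curve into a cost decision means inverting it: for a pure power law L(C) = (C_c / C)^α, the compute needed to reach a target loss is C = C_c · L^(−1/α). The sketch below compares two hypothetical architectures this way; the names `arch_a` and `arch_b` and their fitted constants are made up for illustration and do not come from the talk.

```python
def compute_to_reach(target_loss, c_c, alpha):
    """Invert L = (C_c / C) ** alpha to get the compute needed: C = C_c * L ** (-1 / alpha)."""
    return c_c * target_loss ** (-1.0 / alpha)


def loss_at_budget(compute, c_c, alpha):
    """Loss that a fitted pure power law predicts for a given compute budget."""
    return (c_c / compute) ** alpha


def cheapest_architecture(fits, target_loss):
    """fits maps a name to (c_c, alpha); return (name, compute) needing the least compute."""
    costs = {
        name: compute_to_reach(target_loss, c_c, alpha)
        for name, (c_c, alpha) in fits.items()
    }
    name = min(costs, key=costs.get)
    return name, costs[name]


# Hypothetical fits for two architectures (not values from the talk).
fits = {"arch_a": (1e8, 0.05), "arch_b": (5e7, 0.05)}
print(cheapest_architecture(fits, target_loss=2.5))
```

Because both hypothetical fits share the same exponent, the one with the smaller C_c reaches any target loss with proportionally less compute. When exponents differ, the ranking can flip at a different target loss, so the comparison is only meaningful over the budget range the fits actually cover.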