Use AI To Help Build Your Second Brain in Obsidian MD (Dataview Plugin Queries Example)

Based on John Mavrick Ch.'s video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

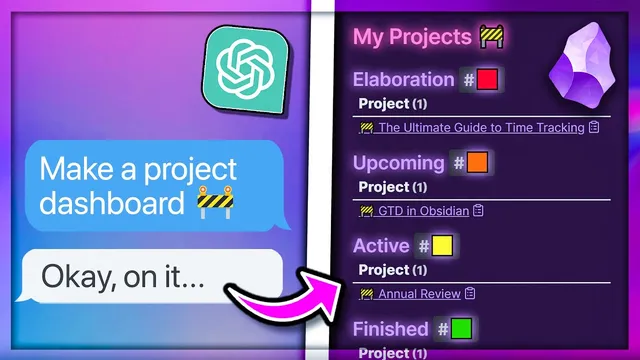

Use a dedicated DataView prompt so AI returns properly structured query code, then paste it into Obsidian inside a DataView code block.

Briefing

AI can cut the time spent wrestling with Obsidian’s DataView plugin by turning natural-language requests into working DataView query code—complete with sorting, filtering, and even computed fields. Instead of memorizing DataView’s clauses, variables, expressions, and functions, the workflow uses a purpose-built prompt (or an AI “assistant” wired to documentation notes) to generate queries that match a user’s vault structure.

The process starts with a custom prompt designed specifically for DataView query creation. It lays out what a DataView query is, lists common components (so the model knows what to output), and includes example questions with sample answers to reinforce formatting. After copying that prompt into ChatGPT, the user can ask for a query in plain terms, such as “show all my notes with the input video tag” and “for each note show the status, links, and source fields.” The model returns a DataView query that can be copied into Obsidian. The only manual step is wrapping the result in a DataView code block: an opening line of three backticks followed immediately by the lowercase word “dataview”, the query itself, and a closing line of three backticks.
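As an illustration of what such a request might produce, here is a minimal sketch of a fenced query. The tag spelling #input/videos is an assumption: the tag is spoken as “input video”, and Obsidian tags cannot contain spaces, so a nested tag is one plausible form. The names status, links, and source are assumed to be metadata fields defined in each note, and the SORT line reflects a reverse-alphabetical default; a real generated query may differ.

```dataview
TABLE status, links, source
FROM #input/videos
SORT file.name DESC
```

Pasted into a note with the fence included, a block like this renders as a table as long as the Dataview plugin is enabled. Without the fence, Obsidian shows the query as ordinary text.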

From there, the generated query becomes a starting point for practical adjustments. One example changes sorting from reverse alphabetical order to “created date descending,” so the newest notes appear first. Another removes unwanted results by excluding notes from a hidden folder that may contain templates or other non-video files that still carry the same tags. The result is a cleaner “My Videos” table that shows only relevant video notes and includes the created date so the ordering can be verified.
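The two adjustments described above could look like the following sketch. The folder name "Hidden" is hypothetical (the source does not name the folder), and the tag spelling #input/videos is likewise an assumption. file.ctime is Dataview's built-in creation-time field, and a minus sign in front of a source excludes it from the results.

```dataview
TABLE status, links, source, file.ctime AS "Created"
FROM #input/videos AND -"Hidden"
SORT file.ctime DESC
```

Showing file.ctime as its own column is what makes the ordering easy to verify at a glance. If templates live in more than one folder, additional exclusions can be chained with further AND terms.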

For more advanced queries, the video notes that stuffing every possible DataView function, expression, and clause into a single prompt can be costly or unreliable, especially without higher-tier ChatGPT access or API usage. The workaround is to use OpenAI’s assistant capability so that only the relevant documentation is retrieved for each request. The user creates an OpenAI account, generates an API key, and installs an Obsidian community plugin (“Intelligence”) that can create assistants from notes. The assistant is then built from a set of documentation-linked notes (covering functions, allowed expressions, and clauses). This keeps prompts shorter while still giving the AI access to the specific DataView syntax needed.

A more complex example demonstrates computed fields and limits. The assistant is asked to create a query that lists notes tagged #input videos, shows status, links, source, and a “total links” field calculated as incoming links plus outgoing links (using DataView’s built-in file.inlinks and file.outlinks fields, with the length function applied to each link list). It also limits results to 10 notes. The final query behaves as requested: it caps the output at 10 rows, computes the combined link count, and the table values match the underlying note relationships.
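A query along these lines would satisfy that request. This is a sketch rather than the exact query from the video: the tag spelling #input/videos and the column label "Total Links" are assumptions, while file.inlinks, file.outlinks, length, and LIMIT are standard Dataview features.

```dataview
TABLE status, links, source, length(file.inlinks) + length(file.outlinks) AS "Total Links"
FROM #input/videos
LIMIT 10
```

For a note with 3 incoming links and 2 outgoing links, the computed column would show 5. Because no SORT clause is given here, which 10 notes appear depends on Dataview's default ordering; adding a SORT line before LIMIT makes the selection predictable.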

Overall, the approach turns DataView’s steep learning curve into an iterative, copy-and-adjust workflow—first with a reusable prompt, then with a documentation-backed assistant for more complex query logic.

Cornell Notes

The workflow uses AI to generate Obsidian DataView queries from plain-language requests, avoiding the need to memorize DataView clauses, expressions, and functions. A custom prompt teaches the model what DataView queries look like and how to format outputs, enabling quick creation of tables filtered by tags like #input videos and displaying fields such as status, links, and source. After generating a basic query, the user manually adjusts it for practical needs like sorting by created date and excluding hidden folders that contain templates. For advanced queries, the transcript recommends creating an OpenAI assistant that’s fed only the relevant DataView documentation notes, reducing prompt length and cost. The assistant can also compute fields—like total links by adding incoming and outgoing link counts—and apply limits such as showing only 10 notes.

How does the workflow get AI to output valid DataView query code instead of vague text?

What are two common “post-generation” edits that make the query match real vault needs?

Why does the transcript recommend an assistant approach for complex DataView queries?

How is “total links” computed in the advanced query example?

How does the workflow enforce a maximum number of results?

Review Questions

- When generating a basic DataView table from AI, what manual step is still required before the query works in Obsidian?

- What two changes are made to improve the “My Videos” query’s usefulness (sorting and filtering), and why are they needed?

- In the advanced example, how does the query compute total links, and what DataView functions are involved?

Key Points

1. Use a dedicated DataView prompt so AI returns properly structured query code, then paste it into Obsidian inside a DataView code block.

2. Start with simple requests like filtering by a tag (e.g., #input videos) and listing fields such as status, links, and source.

3. Adjust AI-generated queries for real-world organization needs by sorting on created date descending and excluding hidden folders that contain templates.

4. For complex DataView logic, avoid bloated prompts by creating an OpenAI assistant backed by documentation notes (functions, expressions, and clauses).

5. Compute derived columns by instructing the assistant to use DataView functions and fields (e.g., length applied to file.inlinks and file.outlinks) and arithmetic (incoming links + outgoing links).

6. Apply result caps by requesting a limit (e.g., show only 10 notes) so tables stay readable and fast.