We Tried Pulsetto as a Couple: The Data Will Surprise You

Based on Duddhawork's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

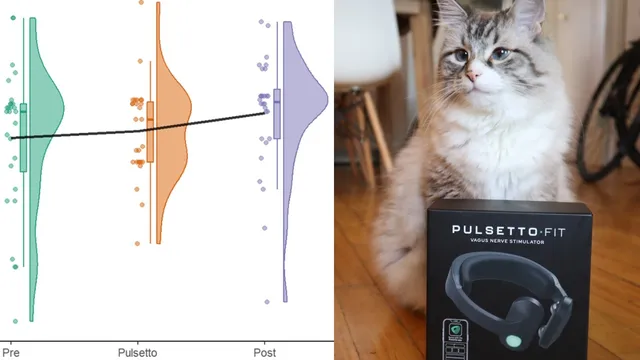

The expected “improve during use, then bounce back after stopping” pattern did not appear across multiple sleep and stress metrics.

Briefing

A two-person self-tracking experiment (four weeks before, four weeks during, and four weeks after use) found no meaningful, consistent improvement from the Pulsetto device in sleep, stress/recovery, or day-to-day mood and energy, results that largely contradict the product’s claims. The clearest pattern across metrics was stability: sleep scores, sleep efficiency, and sleep-stage breakdowns didn’t show the expected pattern of improving during use and then “bouncing back” to baseline once the device was stopped. In other words, there was no reliable sign that wearing the device produced measurable benefits beyond normal life changes.

For sleep, the analysis used Fitbit and Oura Ring data collected across three periods: baseline (four weeks before), intervention (four weeks during), and follow-up (four weeks after). Time-series sleep scores showed no striking separation between the three periods. Distribution comparisons using box plots and density histograms echoed that finding: the only statistically detectable difference was between the narrator’s baseline and post periods, not between baseline and the intervention. That baseline-to-post improvement was attributed to seasonal routine (better sleep during the school year than in summer) rather than to the device.
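The source does not say which statistical test was used for these period comparisons. As a minimal sketch of one reasonable option, the snippet below runs a two-sided permutation test on the difference in mean sleep score between two periods. The function name `permutation_test` and all of the scores are hypothetical, chosen only to mimic the reported pattern (baseline close to intervention, post somewhat higher); they are not the couple’s data.

```python
import random


def permutation_test(a, b, n_resamples=10_000, seed=0):
    """Two-sided permutation test for a difference in means.

    Returns an approximate p-value: the share of random relabelings
    whose mean difference is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(sum(a) / len(a) - sum(b) / len(b))
    pooled = list(a) + list(b)
    n_a = len(a)
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        left, right = pooled[:n_a], pooled[n_a:]
        diff = abs(sum(left) / len(left) - sum(right) / len(right))
        if diff >= observed:
            hits += 1
    # The +1 terms keep the estimate away from an impossible p = 0.
    return (hits + 1) / (n_resamples + 1)


# Hypothetical nightly sleep scores (assumption, NOT data from the video).
baseline = [78, 81, 75, 80, 79, 77, 82, 76]
during = [80, 79, 77, 78, 82, 76, 81, 79]
post = [84, 86, 83, 85, 87, 82, 86, 85]

p_during = permutation_test(baseline, during)
p_post = permutation_test(baseline, post)
print(f"baseline vs during: p = {p_during:.3f}")
print(f"baseline vs post:   p = {p_post:.3f}")
```

With made-up numbers like these, only the baseline-versus-post comparison comes out as detectable, which is the shape of result described above. A permutation test is used here because it makes few distributional assumptions, which suits short runs of nightly scores.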

Sleep efficiency, defined as the proportion of time in bed that is spent asleep, also failed to move in a consistent direction for either user. The sleep-stage breakdown (awake time, light sleep, REM, and deep sleep) likewise produced little evidence of a device effect. The wife’s deep sleep showed a small change during the post period, but the timing overlapped with tapering off medication, making it difficult to credit the Pulsetto. Overall, the expected signature (better sleep during use followed by a return to baseline after stopping) did not appear.
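The sleep-efficiency definition above reduces to a single ratio. The sketch below computes it from two durations; the function name `sleep_efficiency` and the example night are illustrative assumptions, and wearables such as Fitbit or Oura may compute their own efficiency figures differently.

```python
def sleep_efficiency(minutes_asleep, minutes_in_bed):
    """Fraction of time in bed that was spent asleep (0.0 to 1.0)."""
    if minutes_in_bed <= 0:
        raise ValueError("minutes_in_bed must be positive")
    if not 0 <= minutes_asleep <= minutes_in_bed:
        raise ValueError("minutes_asleep must be between 0 and minutes_in_bed")
    return minutes_asleep / minutes_in_bed


# Hypothetical night (assumption): 8 hours in bed, 7 hours asleep.
example = sleep_efficiency(minutes_asleep=420, minutes_in_bed=480)
print(f"{example:.1%}")  # 87.5%
```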

Stress and recovery metrics told a similar story. Heart rate variability (HRV), often treated as a proxy for relaxation and recovery, was not consistently higher during device use. The narrator’s HRV looked steady or improved with routine, while the wife’s HRV rose more after stopping, again suggesting other factors (like sleep quality and daily structure) rather than a direct device effect. Additional stress-related measures (Fitbit stress score, Oura/Fitbit readiness score, breathing rate in breaths per minute, and resting heart rate) showed no robust, device-linked pattern. One breathing-rate anomaly emerged: when the wife slept better in the post period, her breathing rate was higher, which the analysis treated as inconsistent with the device explanation.
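To make the “device-linked pattern” concrete, here is a rough heuristic for the signature the experiment was looking for: a gain during use that mostly disappears after stopping. This is an illustrative sketch, not the method used in the video. The function name `shows_device_signature`, the `min_gain` threshold, the “at least half the gain fades” rule, and the HRV values are all assumptions.

```python
from statistics import mean


def shows_device_signature(baseline, during, post, min_gain, higher_is_better=True):
    """Return True if the metric improves during use and then falls back.

    Heuristic (assumption): the mean must improve by at least `min_gain`
    during use, and the post-period gain over baseline must be no more
    than half of the gain seen during use.
    """
    sign = 1 if higher_is_better else -1
    gain_during = sign * (mean(during) - mean(baseline))
    gain_post = sign * (mean(post) - mean(baseline))
    return gain_during >= min_gain and gain_post <= gain_during / 2


# Hypothetical HRV values in milliseconds (assumption, NOT data from the video).
hrv_baseline = [40, 42, 41, 39]

# Pattern like the one reported for the wife: flat during use, higher after stopping.
reported_like = shows_device_signature(
    hrv_baseline, during=[41, 40, 42, 40], post=[47, 48, 46, 49], min_gain=3
)

# Pattern a working device would be expected to produce: up during use, back down after.
expected_like = shows_device_signature(
    hrv_baseline, during=[48, 47, 49, 46], post=[41, 40, 42, 41], min_gain=3
)

print(reported_like, expected_like)  # False True
```

For metrics where lower is better, such as resting heart rate, the same check would be called with `higher_is_better=False`.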

Mood and energy tracking via the “How We Feel” app also failed to show a meaningful shift. Daily mood averages stayed generally positive with no clear differences across pre/during/post. Energy was relatively stable, and the emotional “landscape” on a positivity-by-energy grid barely changed.

The conclusion was blunt. Should you buy the Pulsetto? Short answer: no. The study’s limitations are acknowledged: only two participants, a short duration, and imperfect control over settings and timing, including seasonal work changes. Still, the lack of signal across multiple domains led to a practical takeaway: the device didn’t deliver measurable benefits for these two people, and the experience included downsides such as syncing/app bugs and occasional skin irritation. Even if some people find the routine pleasant, the narrator argues that free alternatives (sleep hygiene, exercise, socializing, healthy eating) are more reliable and can produce larger effects.

Cornell Notes

A two-person self-tracking test of the Pulsetto (four weeks before, four weeks during, four weeks after) found no consistent, statistically meaningful improvements in sleep, stress/recovery, or mood/energy. Sleep scores, sleep efficiency, and sleep-stage time (including REM and deep sleep) did not show the expected pattern of improvement during use followed by a bounce back after stopping. Stress-related measures such as heart rate variability, Fitbit stress score, Oura/Fitbit readiness score, breathing rate, and resting heart rate also lacked a device-linked signature. Mood and energy tracked over years in the “How We Feel” app showed stable patterns across the three periods. The results matter because they challenge the device’s marketed claims using real-world wearable data, while still noting the study’s small sample size and limited control.

What “success pattern” would have supported the Pulsetto’s effectiveness in this experiment?

Which sleep metrics were tested, and what did they show?

How did heart rate variability (HRV) factor into the stress/recovery results?

What other stress-related metrics were checked beyond HRV, and were they consistent with a device effect?

How were mood and energy measured, and what changed across the three periods?

What practical issues and limitations were cited when interpreting the results?

Review Questions

- If the Pulsetto were working, what specific statistical/visual pattern across pre/during/post would you expect to see in sleep scores or HRV?

- Which sleep-stage metric showed the most noticeable change for the wife, and what confounding factor made it hard to attribute to the device?

- Why does an HRV spike after stopping the device weaken a causal claim that the Pulsetto improved recovery?

Key Points

1. The expected “improve during use, then bounce back after stopping” pattern did not appear across multiple sleep and stress metrics.

2. Sleep score, sleep efficiency, and sleep-stage distributions showed no robust, consistent Pulsetto-linked improvements for either user.

3. Heart rate variability did not rise in a device-tied way; the wife’s HRV increase was stronger after stopping, pointing to other factors like routine and sleep quality.

4. Fitbit stress score and Oura/Fitbit readiness score did not show significant pre/during/post separation that would support a direct device effect.

5. Mood and energy tracked via the “How We Feel” app stayed largely stable across baseline, intervention, and post periods.

6. The study’s small sample size and limited experimental control are real constraints, but the lack of signal across many metrics still led to a practical “no” recommendation.

7. Reported downsides included app syncing/session-tracking bugs and occasional skin irritation, reducing the value proposition even if some users find the routine pleasant.