AI vs ML vs DL vs Generative AI

Based on Krish Naik's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

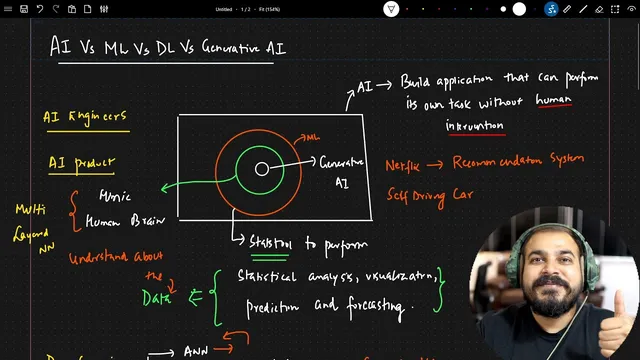

AI, machine learning, deep learning, and generative AI form a nested hierarchy in which each term is a subset of the one before it. The key distinction is functional: AI is about building systems that can carry out tasks without human intervention; machine learning adds statistical tools for learning from data; deep learning uses multi-layer neural networks loosely modeled on how humans learn; and generative AI produces new text, images, or videos rather than only labeling inputs or predicting outcomes.

At the broadest level, artificial intelligence aims to power applications that perform tasks on their own. The transcript uses Netflix’s recommendation system and self-driving cars as examples: both deliver outputs (movie recommendations or driving decisions) without requiring a person to intervene manually at each step. For AI engineers, the practical takeaway is that this work typically results in an AI product integrated into software (a web app, a mobile app, or even an edge device), often involving model fine-tuning and other steps to make the system scalable.

Machine learning is framed as a subset of AI. Its job is to provide statistical tools that support the data science lifecycle—starting from data ingestion and moving through transformation and feature engineering—so models can learn patterns and make predictions. The motivation is straightforward: learning from data helps make the data meaningful and usable, turning raw information into signals that can support tasks like forecasting.
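The lifecycle described above can be sketched in a few lines of plain Python. This is only an illustration, not something shown in the transcript: the CSV data, the column names, and the helper functions (`ingest`, `transform`, `to_features`, `fit_line`, `predict`) are all assumptions made up for the example, and the "model" is a simple least-squares line rather than anything production-grade.

```python
import csv
import io

# Hypothetical raw data: monthly ad spend vs. sales, with one incomplete row.
RAW_CSV = """month,ad_spend,sales
1,10,25
2,20,45
3,,
4,40,85
"""

def ingest(text):
    """Data ingestion: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transformation: drop rows with missing values and cast strings to floats."""
    return [
        {"ad_spend": float(r["ad_spend"]), "sales": float(r["sales"])}
        for r in rows
        if r["ad_spend"] and r["sales"]
    ]

def to_features(rows):
    """Feature engineering (trivial here): pick one input column and the target."""
    return [(r["ad_spend"], r["sales"]) for r in rows]

def fit_line(pairs):
    """Learn a slope and intercept from the data by ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = cov / var
    return slope, mean_y - slope * mean_x

def predict(model, x):
    """Forecast the target for an unseen input value."""
    slope, intercept = model
    return slope * x + intercept

pairs = to_features(transform(ingest(RAW_CSV)))
model = fit_line(pairs)
forecast = predict(model, 30)  # forecast sales for an ad spend the data never contained
print(round(forecast, 2))
```

The point of the sketch is the shape of the pipeline: each stage turns the raw rows into something more usable, and only the last two functions involve learning and prediction.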

Deep learning is then presented as a subset of machine learning, built to mimic the human brain’s learning process through multi-layer neural networks. While GPUs, open-source libraries, and recent hardware progress made deep learning more practical, the core idea remains neural networks with architectures suited to different data types. Three foundational components are highlighted: the artificial neural network (ANN), the convolutional neural network (CNN), and the recurrent neural network (RNN), plus their variants. CNNs are tied to computer vision tasks such as object detection, and RNN variants are associated with time-series use cases. The broader deep learning toolkit includes models like LSTM, GRU, encoder-decoder with attention, and, most importantly for what follows, Transformers.
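To make "multi-layer neural network" concrete, here is a minimal sketch of a forward pass through a two-layer ANN in plain Python. The weights are hand-picked for illustration (an assumption, not something from the transcript) so that the network computes XOR, a function a single layer cannot represent; a real network would learn its weights from data.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then a sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]

# Hypothetical hand-picked weights (not learned).
# Hidden neuron 1 behaves like OR, hidden neuron 2 like NAND.
HIDDEN_W = [[20.0, 20.0], [-20.0, -20.0]]
HIDDEN_B = [-10.0, 30.0]
# The output neuron behaves like AND over the two hidden neurons.
OUTPUT_W = [[20.0, 20.0]]
OUTPUT_B = [-30.0]

def forward(x):
    """Pass the input through the hidden layer, then the output layer."""
    hidden = dense(x, HIDDEN_W, HIDDEN_B)
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, round(forward([a, b])))
```

Stacking the layers is what matters here: the hidden layer builds intermediate features (OR, NAND) and the output layer combines them. CNNs, RNNs, and Transformers differ in how their layers are wired, but they share this layered structure.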

That Transformer lineage becomes the backbone for generative AI. Generative AI is described as a subset of deep learning, and the transcript explains it by contrasting two broad model families: discriminative models and generative models. Discriminative models focus on classification, prediction, and regression using labeled datasets. Generative models, by contrast, are trained to generate new data (new content) based on what they learned from large, often internet-scale datasets. Generative AI is built on this second family.

The transcript illustrates this with a “cats” analogy: reading many books about cats enables a person to answer questions in a new way; similarly, a generative model trained on huge amounts of text or image data can produce new responses. Large language models handle text-to-text generation, while large image models and video-capable models extend the same idea to images and video generation.
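The discriminative/generative split and the cats analogy can be illustrated with a toy example. This is a sketch under heavy assumptions: the four-sentence corpus, the perceptron classifier, and the bigram text generator are all stand-ins chosen for brevity, and they are far simpler than the Transformer-based models the transcript refers to.

```python
import random
from collections import defaultdict

# Hypothetical toy corpus standing in for "many books about cats".
CORPUS = [
    ("cats purr when they are happy", "cat"),
    ("cats chase mice at night", "cat"),
    ("dogs bark when they are excited", "dog"),
    ("dogs chase balls at the park", "dog"),
]

def train_classifier(examples, epochs=5):
    """Discriminative: a perceptron learns word weights that separate the labels."""
    weights = defaultdict(float)
    for _ in range(epochs):
        for text, label in examples:
            target = 1 if label == "cat" else -1
            score = sum(weights[w] for w in text.split())
            if score * target <= 0:  # wrong or undecided: nudge weights toward the label
                for w in text.split():
                    weights[w] += target
    return weights

def classify(weights, text):
    """Map an input to a label; nothing new is created."""
    score = sum(weights.get(w, 0.0) for w in text.split())
    return "cat" if score > 0 else "dog"

def train_generator(examples):
    """Generative: record which word follows which; the labels are not used."""
    followers = defaultdict(list)
    for text, _ in examples:
        words = text.split()
        for current, nxt in zip(words, words[1:]):
            followers[current].append(nxt)
    return followers

def generate(followers, start, max_words=8, seed=0):
    """Produce a word sequence by repeatedly sampling a plausible next word."""
    rng = random.Random(seed)
    words = [start]
    while len(words) < max_words and followers.get(words[-1]):
        words.append(rng.choice(followers[words[-1]]))
    return " ".join(words)

weights = train_classifier(CORPUS)
followers = train_generator(CORPUS)
print(classify(weights, "cats purr at night"))  # output is a label
print(generate(followers, "cats"))              # output is new text
```

The classifier can only choose among labels it was given, while the generator assembles word sequences that may not appear verbatim in its training text. Large language models follow the same "predict the next token" idea at a vastly larger scale.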

Finally, the discussion connects today’s model race to “foundation models,” also called pre-trained models. These are trained on massive, mixed data (including code and other internet content) and then adapted for specific needs via fine-tuning. Examples named include OpenAI’s GPT-4 and GPT-4 Turbo (with GPT-5 mentioned as upcoming), Anthropic’s Claude 3, Meta’s Llama 2 and Llama 3, Google’s Gemini, and Stability AI’s image-generation ecosystem. To build applications on top of these models, the transcript points to LangChain as a framework for retrieval-augmented generation and for creating chatbots and other LLM-powered applications.
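Retrieval-augmented generation is easier to picture with a small example. The sketch below is plain Python and deliberately does not use LangChain's API: the `DOCUMENTS` list, the word-overlap scoring, and the prompt template are all assumptions made for illustration. Real systems typically retrieve with embedding similarity and then send the prompt to an LLM, a step that is omitted here.

```python
# Hypothetical mini knowledge base; a real system would index many documents.
DOCUMENTS = [
    "Foundation models are pre-trained on large mixed datasets.",
    "Fine-tuning adapts a pre-trained model to a specific domain.",
    "CNNs are commonly used for computer vision tasks.",
]

def tokenize(text):
    """Lowercase the text and split it into a set of words without punctuation."""
    return {word.strip(".,?!").lower() for word in text.split()}

def retrieve(question, documents, top_k=1):
    """Retrieval step: rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Augmentation step: place the retrieved text in front of the question."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

question = "What does fine-tuning do to a pre-trained model?"
prompt = build_prompt(question, DOCUMENTS)
print(prompt)  # this string is what would be sent to the LLM for the generation step
```

A framework such as LangChain packages these steps (document loading, retrieval, prompt construction, and the model call) so they do not have to be written by hand for every application.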

Cornell Notes

AI is the broad goal of building systems that can perform tasks without human intervention. Machine learning narrows that goal by using statistical tools to learn from data across the data science lifecycle, enabling prediction and forecasting. Deep learning further narrows it by using multi-layer neural networks designed to mimic human learning, with architectures like ANN, CNN (vision/object detection), and RNN variants (time series), culminating in Transformers. Generative AI is a subset of deep learning that focuses on generating new content using generative models trained on huge datasets, unlike discriminative models that rely on labeled data for classification/regression. Foundation models (pre-trained models) are the current engine behind many LLM and multimodal systems, later adapted via fine-tuning for specific domain use cases.

How does the transcript distinguish AI from machine learning and deep learning in terms of purpose?

Why are Transformers singled out as a bridge toward generative AI?

What’s the practical difference between discriminative and generative models?

How does the “cats books” analogy map to how generative AI works?

What are foundation models, and why does fine-tuning matter?

What role does LangChain play in building LLM applications?

Review Questions

- Write a one-paragraph explanation that distinguishes discriminative from generative models and connects that distinction to why generative AI can create new content.

- List the three deep learning building blocks mentioned (ANN, CNN, RNN variants) and match each to the type of tasks it’s associated with in the transcript.

- Explain what foundation models are and describe how fine-tuning changes what they can do for a specific domain.

Key Points

1. AI is defined as building applications that can perform tasks without human intervention, with examples like recommendation systems and self-driving cars.

2. Machine learning is a subset of AI that uses statistical tools to support the data science lifecycle and enable prediction/forecasting.

3. Deep learning uses multi-layer neural networks to mimic human learning, with ANN, CNN (vision/object detection), and RNN variants (time series) as core building blocks.

4. Generative AI is a subset of deep learning that relies on generative models to create new text, images, or videos, unlike discriminative models that classify or regress from labeled data.

5. Transformers are highlighted as the backbone behind many large language model systems used for generative AI.

6. Foundation models (pre-trained models) are trained on huge internet-scale datasets and then adapted to specific needs through fine-tuning.

7. LangChain is positioned as a framework for building retrieval-augmented generation and chatbot-style applications on top of LLMs.