Using Case-Based Reasoning to Predict Marathon Performance and Recommend Tailored Training Plans

Based on Ciara Feely's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

The system predicts marathon finish time and recommends the next week of training by retrieving and reusing solutions from similar historical runners’ weekly training profiles.

Briefing

Marathon training advice is often one-size-fits-all, even though runners’ fitness, effort patterns, and goals vary widely. A case-based reasoning system built from Strava training data aims to fix that by predicting marathon finish time and recommending the next week of training using patterns from similar past runners—without requiring lab tests or prior marathon performances. The approach matters because it can give novice and recreational runners actionable guidance throughout the 12–16 weeks leading to race day, including when training is already underway.

The system is trained on more than 1.5 million training sessions from over 21,000 runners, covering the 16-week lead-up to a marathon. Each runner’s activity is converted into 100-meter pacing profiles (minutes per kilometer) with elevation information, then aggregated into weekly features such as number of sessions, total distance, mean pace, longest run, fastest/slowest 1.5 km and 10 km paces, and mean elevation gain. Cases are defined by a runner’s weekly training features plus the marathon time achieved a fixed number of weeks later, with cases separated by training week and sex to reflect physiological differences and to avoid recommending training for the wrong point in the cycle.

For race time prediction, the method retrieves the k most similar historical cases (using Euclidean distance over the weekly feature space) and averages their known marathon times. Three model variants are compared: a baseline using all features, a feature-selected model using stepwise forward selection to keep only the most predictive variables for each week, and a multi-week model that improves on earlier ensemble strategies by incorporating past predicted times as additional inputs. Across men and women, prediction error generally drops as race day approaches, but the baseline and feature-selected models show a sharp error spike around weeks one to two before the marathon—consistent with the “marathon taper,” when runners reduce or stop training and variability rises. The multi-week model largely removes that problem, delivering the best overall performance and achieving statistically significant gains (p < 0.01) about 12 weeks out compared with the other approaches.

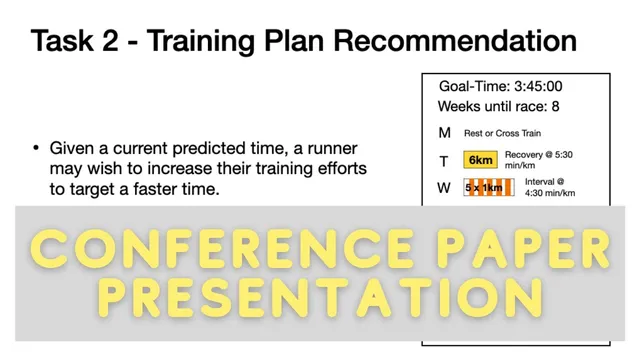

Training plan recommendation uses a different mechanism: given a runner’s current training week and an adjusted goal time (marathon time star, represented via a delta), the system filters historical runners who hit similar goal times and match the current training stage. It then selects a next-week training profile by targeting the “middle” of the retrieved set’s next-week loads—avoiding recommendations that are unrealistically hard or too easy that could result from relying on a single closest match. Evaluation uses 10-fold cross-validation on a subset of roughly 20,000 runners (focusing on marathon times under five hours for men and between three and five hours for women across Dublin, London, and New York City marathons from 2014–2017). The recommended plans show encouraging proof-of-concept behavior: aiming for faster goals (delta < 1) yields recommended weeks with faster mean weekly pace than the runner’s actual plan, while slower goals (delta > 1) produce easier pace targets.

Beyond accuracy, the system’s practical value is explainability. By showing how a runner’s recommended plan compares to the distribution of the 15 retrieved similar cases (sessions, distances, and pace metrics), it provides a traceable rationale that many prediction-only tools lack. The work concludes with plans for a live user study and future extensions—such as adding heart-rate data or using time-series methods to classify training sessions—and notes that the framework could transfer to other endurance sports if comparable training data exists.

Cornell Notes

A case-based reasoning system predicts marathon finish time and recommends the next week of training by matching a runner’s current weekly training profile to similar historical runners. The model is trained on 16 weeks of Strava-derived data (weekly features built from 100-meter pacing and elevation), with cases separated by training week and sex. For prediction, it retrieves the k nearest cases and averages their known marathon times; a multi-week variant that feeds in past predicted times outperforms single-week approaches, especially around the one-to-two-week taper period. For recommendations, it filters cases using an adjusted goal time (delta) and selects a feasible next-week training load centered around the retrieved set, avoiding overly hard or easy plans. Evaluation via 10-fold cross-validation shows lower prediction error near race day and proof-of-concept alignment between goal ambition and recommended pace difficulty.

How does the system turn raw training logs into something case-based reasoning can use?

Why does prediction error spike one to two weeks before the marathon in some models?

What changes in the multi-week model, and why does it help?

How does the training plan recommendation use a runner’s goal time?

Why not just pick the single most similar past runner’s next week of training?

What evidence supports that recommended plans match goal ambition?

Review Questions

- In what ways do the weekly feature design and the separation by training week and sex affect the validity of case retrieval and recommendations?

- How does incorporating past predicted times change the behavior of race-time prediction near the taper period compared with single-week models?

- What trade-off does the “middle value” selection strategy make when recommending next-week training, and how does it relate to feasibility for real runners?

Key Points

- 1

The system predicts marathon finish time and recommends the next week of training by retrieving and reusing solutions from similar historical runners’ weekly training profiles.

- 2

Strava data is transformed into weekly pacing and elevation features (minutes per kilometer) aggregated across 100-meter intervals, then packaged into cases tied to specific training weeks and sex.

- 3

Race-time prediction uses k-nearest-case retrieval (Euclidean distance) and averaging, with a multi-week variant that feeds past predicted times into the model.

- 4

Prediction error drops as race day nears, but single-week approaches struggle around the one-to-two-week taper window due to high variability in reduced training behavior.

- 5

Training recommendations incorporate a goal-time adjustment (delta) to decide whether the next week should be harder (delta < 1) or easier (delta > 1).

- 6

Instead of copying one closest historical runner, the method selects a feasible next-week plan centered around the retrieved set to reduce the risk of over- or under-difficulty.

- 7

Evaluation via 10-fold cross-validation shows statistically significant improvements for the multi-week predictor and goal-consistent changes in recommended pace difficulty for training plans.