Vibe Coding is For Senior Developers

Based on Theo - t3.gg's video on YouTube. If you like this content, support the original creators by watching, liking, and subscribing to their content.

Senior engineers accept more AI-generated code because they write clearer specs, decompose tasks well, and apply stronger correctness checks during review.

Briefing

Senior developers are adopting AI coding tools faster than juniors because they can turn vague requests into high-signal prompts, break work into agent-friendly chunks, and verify outputs with stronger correctness instincts. That shift matters because it reframes “vibe coding” from a gimmick—generating code without reading it—into a practical workflow where experienced engineers treat AI like a collaborator: they specify clearly, review intelligently, and integrate results into real codebases.

The discussion starts with a surprise: prominent software figures long associated with craft and detail, including the people behind React, Rails, Linux, and Redis, are increasingly embracing AI-assisted development. The reaction isn’t just “AI is cool,” but “why now, and why them?” A key explanation comes from an observation attributed to Eric at Cursor: senior engineers accept more agent output than juniors do. The reasons are concrete. Seniors tend to write tighter specs with less ambiguity, decompose tasks into smaller units that can be handled independently, and bring stronger priors for correctness, so their reviews are faster and more accurate. Juniors may generate lots of code, but they lack the verification heuristics to confidently greenlight it.
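The following is an illustration only and does not come from the video. One way to make a "tight spec" concrete is to give an agent a small, independent unit of work together with executable acceptance cases. Review then starts from a mechanical pass/fail signal before any line-by-line reading. The `slugify` task, its cases, and the `review` helper are all hypothetical.

```python
import re

# Hypothetical spec for one small, independent unit of work:
# "slugify(title) lowercases the title, replaces each run of characters
#  other than ASCII letters and digits with a single hyphen, and strips
#  leading and trailing hyphens."
SPEC_CASES = [
    ("Hello World", "hello-world"),
    ("  Vibe   Coding!! ", "vibe-coding"),
    ("C++ & Rust", "c-rust"),
    ("", ""),
]


def slugify(title: str) -> str:
    """Candidate implementation, e.g. as returned by an agent."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


def review(candidate, cases):
    """Return every (input, expected, actual) case the candidate fails."""
    failures = []
    for given, expected in cases:
        actual = candidate(given)
        if actual != expected:
            failures.append((given, expected, actual))
    return failures


if __name__ == "__main__":
    failures = review(slugify, SPEC_CASES)
    print("accept" if not failures else f"reject: {failures}")
```

The cases alone do not prove correctness. They do make the request unambiguous for the inputs that matter, and they let a reviewer spend attention on edge cases the spec does not yet cover.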

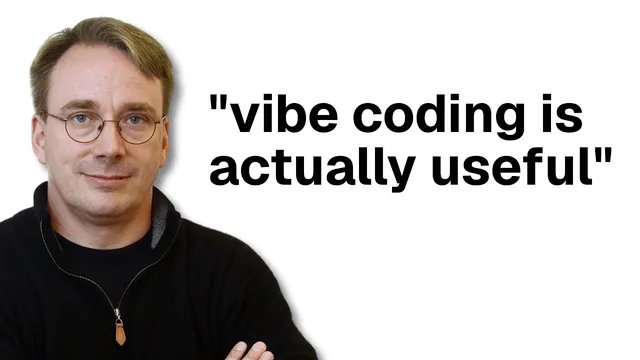

The “vibe coding” label is also challenged. Linus Torvalds is cited as saying that vibe coding, meaning generating code to accomplish a task without reading it, is acceptable only for work that doesn’t affect anything that matters. Yet he also demonstrates the approach in practice: he used AI-assisted coding to build a Python-based audio sample visualizer inside a repository for digital audio effects, choosing to “vibe code” it rather than spend time learning Python from scratch. The point isn’t that AI eliminates engineering discipline; it’s that experienced developers know when experimentation is safe and when code must be scrutinized.

Another example comes from antirez (creator of Redis), who replaced a 3,800-line C++ template library with a minimal pure C implementation. The change comes with testing and review details: the new code was written by Claude Code using Opus 4.5, tested carefully (including comparisons against the original implementation), and reviewed independently by Codex using GPT-5.2. The result is faster builds with fewer steps. This is presented as evidence that AI can produce production-grade contributions when paired with rigorous verification.
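The check of "comparisons against the original implementation" is a form of differential testing. The old and new code receive the same inputs, and every result, including every error, must match. Below is a minimal sketch in Python, not the actual Redis change (which is C). It uses the built-in `int()` as a stand-in for the original implementation. `new_parse` is a hypothetical minimal replacement that accepts an optional `-` followed by ASCII digits. The random input alphabet is chosen so both functions should agree on every string it can produce.

```python
import random


def reference_parse(s: str) -> int:
    """Stand-in for the original implementation: Python's built-in int()."""
    return int(s)


def new_parse(s: str) -> int:
    """Hypothetical minimal replacement: optional '-' then ASCII digits."""
    negative = s.startswith("-")
    digits = s[1:] if negative else s
    if not digits or not all("0" <= ch <= "9" for ch in digits):
        raise ValueError(f"invalid literal: {s!r}")
    value = 0
    for ch in digits:
        value = value * 10 + (ord(ch) - ord("0"))
    return -value if negative else value


def outcome(fn, s):
    """Normalize a call to ('ok', value) or ('error', ValueError)."""
    try:
        return ("ok", fn(s))
    except ValueError:
        return ("error", ValueError)


def differential_test(trials=10_000, seed=0):
    """Compare both parsers on random strings and return any mismatches."""
    rng = random.Random(seed)
    alphabet = "0123456789-x"  # '-' and 'x' produce invalid inputs on purpose
    mismatches = []
    for _ in range(trials):
        s = "".join(rng.choice(alphabet) for _ in range(rng.randint(0, 8)))
        if outcome(reference_parse, s) != outcome(new_parse, s):
            mismatches.append(s)
    return mismatches


if __name__ == "__main__":
    bad = differential_test()
    print("no mismatches" if not bad else f"mismatches: {bad[:5]}")
```

Comparing failures as well as successes matters. A replacement that returns a value where the original rejected the input is a behavior change even if every valid input parses correctly.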

From there, the argument widens into career mechanics. The gap between junior and senior isn’t just coding ability; it’s capability plus clarity. Seniors communicate better about what they’re building and why, and they’re more capable of delegation and orchestration—skills that become central when work is distributed across agents or teammates. Staff-level roles add even more orchestration: scaling scope, managing parallel work, and coordinating execution.

The takeaway is that AI doesn’t just speed up typing code—it pushes engineers to think like managers and systems designers. It can help teams ship more by reducing the time spent on low-value implementation details, but it won’t replicate ownership: AI won’t automatically remember why something broke or fix mistakes the way a human owner would. The future, the argument concludes, belongs to developers who improve their communication, chunking, and verification habits—because those are the skills that make AI output trustworthy and scalable.

Cornell Notes

AI coding tools are being embraced most by senior engineers because they can produce clear, low-ambiguity prompts, decompose tasks into agent-friendly units, and apply stronger correctness checks. That combination leads to higher acceptance of agent output, faster reviews, and safer integration into real codebases. “Vibe coding” is treated as situational: it may be acceptable for non-critical experiments, but production work still requires reading, testing, and ownership. Examples include Linus Torvalds using AI to build a Python visualizer when Python isn’t his strength, and antirez replacing a large C++ library in Redis with a smaller C implementation written by Claude Code and reviewed by Codex GPT-5.2, with explicit testing. The broader career claim is that engineering success increasingly depends on clarity, delegation, and orchestration, not just individual coding speed.

Why do senior engineers accept more AI agent output than juniors?

How does “vibe coding” differ from using AI as a real engineering collaborator?

What do the examples of Linus Torvalds and Anti-Res illustrate about safe AI use?

What does the discussion claim is the real skill gap between junior and senior engineers?

Why does the argument say delegation and orchestration matter more as software scales?

What does AI not solve well in terms of ownership and debugging?

Review Questions

- What specific prompt and verification behaviors distinguish senior engineers’ higher acceptance of agent output?

- In the “vibe coding” framing, what criteria determine whether it’s acceptable versus risky?

- How do clarity, delegation, and orchestration change the definition of engineering compared with pure programming output?

Key Points

1. Senior engineers accept more AI-generated code because they write clearer specs, decompose tasks well, and apply stronger correctness checks during review.

2. “Vibe coding” without reading code is treated as situational; it may be acceptable for non-critical experiments but not for production-grade changes.

3. Linus Torvalds’s Python visualizer example shows that experienced developers can reasonably hand a task to AI when the cost of learning an unfamiliar language outweighs the benefit.

4. The Redis refactor by antirez demonstrates production-style AI use when changes include explicit testing and independent review.

5. Promotion and leveling are framed less as “better typing” and more as improved clarity plus delegation and orchestration.

6. As software scales, individual coding capability hits limits; coordinating parallel work becomes the differentiator.

7. AI can accelerate implementation, but it doesn’t replace human ownership, especially for understanding why bugs happen and fixing them over time.