The Greatest Software Engineers of All Time

Based on The PrimeTime's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

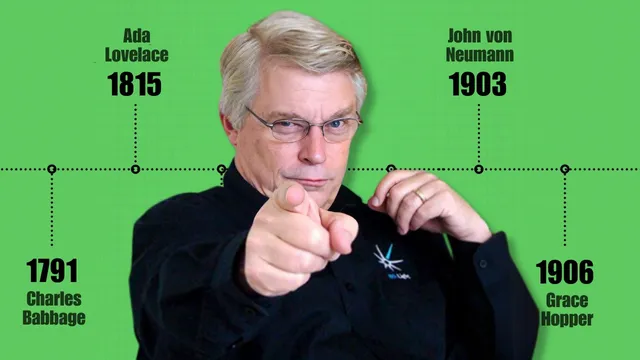

The central through-line is that modern computing didn’t emerge from a single breakthrough—it grew out of repeated, practical attempts to mechanize human calculation under real constraints, then evolved as those machines forced new ideas about memory, instruction, and programming itself. The stories of Babbage, Ada Lovelace, von Neumann, Turing, and Grace Hopper connect the dots between mechanical adders and today’s software culture, showing how “programming” became a discipline only after people had to make machines reliable, repeatable, and understandable.

Uncle Bob (Robert C. Martin) frames Charles Babbage as the most overlooked starting point: a brilliant Victorian engineer who left his machines half-finished and spent years chasing a painfully mundane problem, validating massive tables of 60-digit values for mathematical functions computed from expansions such as Taylor series. The work depended on armies of human “computers” (often hairdressers left unemployed after the French Revolution) who performed thousands of additions by hand. Babbage’s answer was a machine that could store intermediate results and reduce the work to repeated, mechanical additions. Even though the grand projects stalled, the ambition mattered: it pushed the idea that physical force could drive processes previously reserved for “thinking,” a theme that reappears in the era of large language models.
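To make "reduce the work to repeated additions" concrete, here is a minimal Python sketch of the method of finite differences, the technique usually associated with Babbage's Difference Engine. The summary itself does not describe the mechanism, so treat this as an illustration under that assumption. The function names and the example polynomial are hypothetical.

```python
def initial_differences(seed_values):
    """Build the starting registers [f(0), delta f(0), delta^2 f(0), ...]."""
    registers = []
    row = list(seed_values)
    while row:
        registers.append(row[0])
        # Next row holds the differences between neighbouring entries.
        row = [b - a for a, b in zip(row, row[1:])]
    return registers


def tabulate(registers, count):
    """Produce `count` table entries using additions only."""
    state = list(registers)
    table = []
    for _ in range(count):
        table.append(state[0])
        # Each register absorbs the one after it; the last difference is
        # constant for a polynomial, so it is never updated.
        for i in range(len(state) - 1):
            state[i] += state[i + 1]
    return table


def f(x):
    # Hypothetical example polynomial, chosen only for illustration.
    return 2 * x**2 + 3 * x + 1


seeds = [f(x) for x in range(3)]      # a degree-2 polynomial needs 3 seeds
registers = initial_differences(seeds)
table = tabulate(registers, 8)
print(table)
```

Once the seed values are set, every further entry comes from additions alone, which is the kind of step a human "computer" performed by hand and a geared machine could repeat without fatigue.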

Ada Lovelace enters as the figure who helped shift the conversation from arithmetic to symbolism. The transcript emphasizes that Lovelace and Babbage worked closely—letters, shared ideas, and “pair programming” before the term existed. Lovelace is portrayed as the person who saw how an analytical engine could follow instructions that move data between memory and processing, enabling more than number-crunching. The discussion also pushes back on the common tendency to credit Lovelace alone: Babbage had earlier ideas about symbolic computation (including game-playing concepts), while Lovelace’s insight and enthusiasm accelerated the symbolic framing.

John von Neumann’s arc shows how wartime computation forced architecture-level thinking. Ballistics and explosives calculations demanded iteration at a scale humans couldn’t sustain, leading to card-machine workflows and eventually electronic machines. The key insight attributed to von Neumann is that a machine needs fast access to both instructions and data, so the program and the data it operates on should live in the same high-speed memory. That idea forms the basis of the von Neumann architecture still used broadly today. The transcript also underlines the physical reality of early computing: debugging could mean working in extreme heat, on hardware whose memory was unreliable enough to require elaborate error-correction schemes.
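The shared-memory idea can be sketched as a toy stored-program machine in Python. This is an illustration only: the four-instruction set, the memory layout, and the function name are hypothetical and do not model any historical machine. The point is that instructions and data sit in one list and the program counter is just an address into it.

```python
def run(memory):
    """Execute a program that lives in the same list as its data."""
    mem = list(memory)
    acc = 0   # accumulator
    pc = 0    # program counter: an address into the shared memory
    while True:
        op, arg = mem[pc]       # fetch the next instruction from memory
        pc += 1
        if op == "LOAD":
            acc = mem[arg]
        elif op == "ADD":
            acc += mem[arg]
        elif op == "STORE":
            mem[arg] = acc
        elif op == "JNZ":       # jump to `arg` if the accumulator is non-zero
            if acc != 0:
                pc = arg
        elif op == "HALT":
            return mem
        else:
            raise ValueError(f"unknown opcode {op!r}")


# Cells 0-7 hold the program, cells 8-10 hold the data it works on.
program = [
    ("LOAD", 9),    # 0: acc = total
    ("ADD", 8),     # 1: acc += counter
    ("STORE", 9),   # 2: total = acc
    ("LOAD", 8),    # 3: acc = counter
    ("ADD", 10),    # 4: acc += -1
    ("STORE", 8),   # 5: counter = acc
    ("JNZ", 0),     # 6: loop while counter != 0
    ("HALT", 0),    # 7: stop
    5,              # 8: counter
    0,              # 9: total
    -1,             # 10: constant -1
]

final = run(program)
print(final[9])
```

Because the loop body is fetched from the same memory as the counter and total, changing the computation means changing memory contents rather than rewiring the machine.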

Alan Turing then reframes computing as a question of what can be decided by an algorithm. By inventing the abstract “Turing machine” model, Turing helps establish that some problems are not decidable, an outcome that grew out of the pursuit of Hilbert’s famous questions, in particular the decision problem (Entscheidungsproblem). The discussion ties Turing’s later codebreaking work back to the practical machine-building tradition.
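For readers who have not seen the model, a Turing machine is small enough to simulate in a few lines. The Python sketch below is a minimal illustration, not something taken from the transcript: the rule-table format, the state names, and the binary-increment example are all assumptions made for this example.

```python
def run_turing_machine(rules, tape, state="start", blank="_", max_steps=1000):
    """Run a single-tape machine until it reaches the 'halt' state."""
    cells = dict(enumerate(tape))   # sparse tape: position -> symbol
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    if not cells:
        return ""
    span = range(min(cells), max(cells) + 1)
    return "".join(cells.get(i, blank) for i in span).strip(blank)


# Hypothetical rule table: add 1 to a binary number written on the tape.
# (state, symbol read) -> (symbol to write, head move, next state)
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),   # scan right to the end
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),   # past the last digit, turn back
    ("carry", "1"): ("0", "L", "carry"),   # 1 + carry = 0, keep carrying
    ("carry", "0"): ("1", "L", "halt"),    # 0 + carry = 1, done
    ("carry", "_"): ("1", "L", "halt"),    # overflow: new leading digit
}

print(run_turing_machine(INCREMENT, "1011"))
```

The `max_steps` guard is a practical concession that echoes the undecidability result: a simulator can run any machine, but no general procedure can tell in advance whether an arbitrary machine will ever halt.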

Finally, Grace Hopper is presented as a foundational “software engineer” in practice and in language design. She’s credited with helping create an early programming discipline: writing manuals, coining or popularizing terminology (including terms like “debug”), and shaping how people wrote and reused code via subroutines. Her work spans electromechanical systems and later UNIVAC-era machines, where she pushed for better ways to express instructions, culminating in COBOL, which she championed as English-like to win business adoption. The transcript treats COBOL as a mixed outcome: it met Hopper’s goal of accessibility and has endured, but it is wordy and technically inefficient.

In the Q&A, the conversation pivots to the future: AI may improve coding assistance and reduce drudgery, but human creativity and the hard work of specifying what users truly want remain out of AI’s reach for now. The advice to aspiring programmers is to keep learning broadly, experiment, and build roots in fundamentals, because the “web” and other dominant platforms will eventually fade, and the next wave will reward adaptable engineers rather than specialists trapped in one stack.

Cornell Notes

Computing history in this discussion is presented as a chain of practical problems turning into new concepts: mechanizing hand calculation, then formalizing what machines can do, and finally building programming as a discipline. Charles Babbage’s unfinished analytical engine is traced to the need to automate validation of huge mathematical tables, while Ada Lovelace helps shift the focus from arithmetic to symbolic instruction-following. John von Neumann’s wartime work leads to the core architectural idea that fast instruction access and fast data access should share the same memory. Alan Turing’s abstract machine model establishes limits on what algorithms can decide, and Grace Hopper’s programming manuals, terminology, and COBOL push software toward repeatable engineering practices. The stakes are enduring: these breakthroughs explain why modern software works—and where its limits still are.

Why does Charles Babbage’s story start with “tables” and human labor rather than with computers as we imagine them?

What is the “symbolic” leap associated with Ada Lovelace, and how is it connected to Babbage?

What architectural insight is attributed to von Neumann, and why did wartime computation make it urgent?

How does Turing’s work change the conversation from building machines to proving limits?

Why is Grace Hopper portrayed as a “software engineer,” and what did she build beyond code?

What future-facing claim is made about AI and programming?

Review Questions

- Which specific practical calculation bottleneck is used to motivate Babbage’s mechanization effort, and what kind of machine behavior was intended to replace human work?

- How do the transcript’s descriptions of the von Neumann architecture and the Turing machine differ in purpose, given that one concerns machine speed and memory layout and the other concerns mathematical limits?

- What programming practices and language decisions does Hopper’s story emphasize, and how do those choices relate to the business goal behind COBOL?

Key Points

1. Babbage’s analytical engine is traced to automating the validation of enormous mathematical tables, turning repeated human arithmetic into a mechanized workflow.

2. Ada Lovelace’s lasting impact is presented as a shift from arithmetic to symbolic instruction-following, built through close collaboration with Babbage.

3. Von Neumann’s wartime work elevates architecture: fast access to both instructions and data in shared memory becomes essential for scalable computation.

4. Turing’s abstract machine model reframes computing as a theory of what can be decided algorithmically, not just what can be built.

5. Grace Hopper is credited with shaping programming as an engineering discipline (manuals, terminology, subroutines, and early language design), culminating in COBOL’s business-oriented approach.

6. The Q&A predicts AI will improve coding assistance and checking, but human creativity and the hardest parts of requirements remain central for the near future.

7. Career advice emphasizes breadth and fundamentals: experiment, learn multiple paradigms/languages, and be ready for platform shifts rather than betting everything on one stack.